Abstract

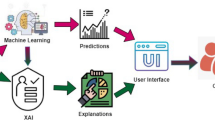

Recent developments in artificial intelligence and machine learning have produced impressive results in a variety of fields, especially since the introduction of deep learning (DL). These models perform extraordinarily well on a substantial number of tasks and have tremendous potential, but the gains in performance usually come from increased model complexity. The result is the black-box model, which leaves it unclear how these systems work and, ultimately, how they reach their judgments. This uncertainty has made it difficult to deploy machine-learning programs in sensitive but essential areas such as healthcare, where their benefits could be enormous, and has given rise to the need for explainable AI. Explainable Artificial Intelligence (XAI) is a new machine-learning research area that aims to decode how black-box AI systems make their decisions. This chapter focuses on the need for explainable AI in healthcare and on techniques such as LIME, SHAP, PDPs, and a few others through which complex models can be explained. We illustrate the use of explainable methods by analyzing two case studies. With this chapter, clinicians, theorists, and practitioners can gain better insight into how these models work, which can help bring a high level of accountability and transparency.

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this chapter

Cite this chapter

Mohanty, A., Mishra, S. (2022). A Comprehensive Study of Explainable Artificial Intelligence in Healthcare. In: Mishra, S., Tripathy, H.K., Mallick, P., Shaalan, K. (eds) Augmented Intelligence in Healthcare: A Pragmatic and Integrated Analysis. Studies in Computational Intelligence, vol 1024. Springer, Singapore. https://doi.org/10.1007/978-981-19-1076-0_25

DOI: https://doi.org/10.1007/978-981-19-1076-0_25

Publisher Name: Springer, Singapore

Print ISBN: 978-981-19-1075-3

Online ISBN: 978-981-19-1076-0

eBook Packages: Intelligent Technologies and Robotics (R0)