Abstract

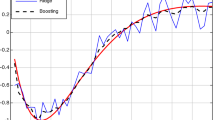

We introduce Adaptive Regularized Boosting (AR-Boost), a novel, robust, data-driven regularization strategy motivated by a desire to reduce overfitting. We replace AdaBoost’s hard margin with a regularized soft margin that trades off a larger margin against misclassification errors. Minimizing this regularized exponential loss yields a boosting algorithm that relaxes the weak learning assumption further: it can use classifiers with error greater than \(\frac{1}{2}\). This enables a natural extension to multiclass boosting and further reduces overfitting in both the binary and multiclass cases. We derive bounds on the training and generalization errors and relate them to those of AdaBoost. Finally, we show empirical results on benchmark data that establish the robustness of our approach and its improved overall performance.
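
To make the weak learning assumption concrete, the sketch below computes the classifier weight used by standard binary AdaBoost, \(\alpha = \frac{1}{2}\ln\frac{1-\epsilon}{\epsilon}\), for a few weighted error rates \(\epsilon\). It illustrates the AdaBoost baseline only; the AR-Boost update itself is not stated in this abstract and is not reproduced here. The function name `adaboost_alpha` is an illustrative choice, not taken from the paper.

```python
import math


def adaboost_alpha(error: float) -> float:
    """Weight standard AdaBoost assigns to a weak classifier.

    `error` is the classifier's weighted training error, strictly
    between 0 and 1. This is the textbook AdaBoost formula, not the
    AR-Boost update (which this abstract does not give).
    """
    if not 0.0 < error < 1.0:
        raise ValueError("error must lie strictly between 0 and 1")
    return 0.5 * math.log((1.0 - error) / error)


if __name__ == "__main__":
    # The weight is positive only when the error is below 1/2,
    # zero at exactly 1/2, and negative above 1/2.
    for eps in (0.1, 0.3, 0.5, 0.6):
        print(f"error={eps:.1f}  alpha={adaboost_alpha(eps):+.3f}")
```

In plain AdaBoost a classifier with error of at least \(\frac{1}{2}\) therefore receives a non-positive weight, which is the restriction the abstract says AR-Boost relaxes.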

© 2013 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Saha, B.N., Kunapuli, G., Ray, N., Maldjian, J.A., Natarajan, S. (2013). AR-Boost: Reducing Overfitting by a Robust Data-Driven Regularization Strategy. In: Blockeel, H., Kersting, K., Nijssen, S., Železný, F. (eds) Machine Learning and Knowledge Discovery in Databases. ECML PKDD 2013. Lecture Notes in Computer Science, vol 8190. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-40994-3_1

DOI: https://doi.org/10.1007/978-3-642-40994-3_1

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-40993-6

Online ISBN: 978-3-642-40994-3

eBook Packages: Computer Science, Computer Science (R0)