Abstract

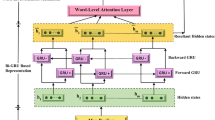

Recent neural network-based models have proven successful in summarization tasks. However, previous studies have mostly focused on comparatively short texts, and it remains challenging for neural models to summarize long documents such as academic papers. The length of academic papers poses two obstacles for summarization: it is hard for a recurrent neural network (RNN) to compress all the information in the source document into a single latent vector, and it is difficult to pinpoint a few correct sentences among a large number of candidates. In this paper, we present an extractive summarizer for academic papers. The key idea is to convert a paper into a tree structure whose nodes correspond to sections, paragraphs, and sentences. First, we build a hierarchical encoder-decoder model based on the tree. This design eases the load on the RNNs and enables us to effectively obtain vectors that represent paragraphs and sections. Second, we propose a tree structure-based scoring method that steers our model toward correct sentences and also helps it avoid selecting irrelevant ones. We collect academic papers available from PubMed Central and build training data suited to supervised extractive summarization. Our experimental results show that the proposed model outperforms several baselines and reduces high-impact errors.
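To make the tree idea concrete, the following is a minimal Python sketch, not the authors' implementation. It builds a section/paragraph/sentence tree from nested lists and ranks sentences by a score combined along the path from the root. The `Node` class, the `build_tree`, `path_scores`, and `extract` helpers, and the choice to multiply scores along the path are all assumptions made for illustration; in the paper the scores would come from the hierarchical neural model, whereas here they are set by hand.

```python
from dataclasses import dataclass, field
from typing import Iterator, List, Tuple


@dataclass
class Node:
    """One node of the (hypothetical) document tree."""
    kind: str                 # "paper", "section", "paragraph", or "sentence"
    text: str = ""            # only sentences carry text in this sketch
    score: float = 1.0        # assumed relevance score in [0, 1]
    children: List["Node"] = field(default_factory=list)


def build_tree(paper: List[List[List[str]]]) -> Node:
    """Convert sections -> paragraphs -> sentence strings into a tree."""
    root = Node("paper")
    for section in paper:
        sec = Node("section")
        for paragraph in section:
            par = Node("paragraph")
            par.children = [Node("sentence", text=s) for s in paragraph]
            sec.children.append(par)
        root.children.append(sec)
    return root


def path_scores(node: Node, inherited: float = 1.0) -> Iterator[Tuple[str, float]]:
    """Yield (sentence, combined score), multiplying scores from root to leaf.

    Multiplication is an illustrative assumption, not the paper's formula.
    """
    combined = inherited * node.score
    if node.kind == "sentence":
        yield node.text, combined
    for child in node.children:
        yield from path_scores(child, combined)


def extract(root: Node, k: int) -> List[str]:
    """Return the k sentences with the highest combined score."""
    ranked = sorted(path_scores(root), key=lambda pair: pair[1], reverse=True)
    return [text for text, _ in ranked[:k]]
```

Under this assumed scheme, a sentence with a high individual score is still ranked low when its enclosing section or paragraph scores poorly, which is one simple way a tree-based score could discourage the selection of irrelevant sentences.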


Notes

- 1. When we calculated ROUGE scores, positive sentences in group A were removed.

- 2.

- 3.

- 4. Our code is available at https://github.com/kazu-kinugawa/HNES.

- 5.

- 6. The ROUGE evaluation options are -m -n 2 -w 1.2.



Copyright information

© 2018 Springer International Publishing AG, part of Springer Nature

About this paper

Cite this paper

Kinugawa, K., Tsuruoka, Y. (2018). A Hierarchical Neural Extractive Summarizer for Academic Papers. In: Arai, S., Kojima, K., Mineshima, K., Bekki, D., Satoh, K., Ohta, Y. (eds) New Frontiers in Artificial Intelligence. JSAI-isAI 2017. Lecture Notes in Computer Science(), vol 10838. Springer, Cham. https://doi.org/10.1007/978-3-319-93794-6_25


DOI: https://doi.org/10.1007/978-3-319-93794-6_25


Publisher Name: Springer, Cham

Print ISBN: 978-3-319-93793-9

Online ISBN: 978-3-319-93794-6

eBook Packages: Computer Science, Computer Science (R0)