Abstract

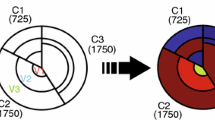

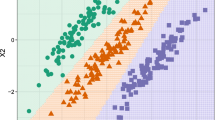

The most common approach to building a decision tree is a two-step procedure: growing a full tree and then pruning it back. The goal is to identify the tree with the lowest error rate. Alternative pruning criteria have been proposed in the literature. Within the framework of recursive partitioning algorithms by tree-based methods, this paper contributes to both the visual representation of the data partition in a geometrical space and the selection of the decision tree. In our visual approach, the best tree and the weakest links can be identified directly by graphical analysis of the tree structure, without considering the pruning sequence. The resulting error rates are very similar to those returned by the classification and regression trees (CART) procedure, showing that this new way of selecting the best tree is a valid alternative to the well-known cost-complexity pruning.
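For readers unfamiliar with the baseline mentioned above, the following is a minimal sketch of weakest-link (cost-complexity) pruning in the sense of CART. It does not implement the visual selection approach proposed in this chapter. The `Node` class, the function names, and the error values in `build_example_tree` are hypothetical and chosen only for illustration. Each node stores R(t), the error it would contribute if it were a leaf, and the strength of an internal node t is taken as g(t) = (R(t) - R(T_t)) / (|leaves(T_t)| - 1), where T_t is the branch rooted at t.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Node:
    """Hypothetical tree node; `error` is R(t), the node's error if it were a leaf."""
    name: str
    error: float
    left: Optional["Node"] = None
    right: Optional["Node"] = None

    def is_leaf(self) -> bool:
        return self.left is None and self.right is None


def leaves(node: Node) -> List[Node]:
    """Terminal nodes of the branch rooted at `node`."""
    if node.is_leaf():
        return [node]
    return leaves(node.left) + leaves(node.right)


def internal_nodes(node: Node) -> List[Node]:
    """Non-terminal nodes of the branch rooted at `node`."""
    if node.is_leaf():
        return []
    return [node] + internal_nodes(node.left) + internal_nodes(node.right)


def branch_error(node: Node) -> float:
    """R(T_t): sum of the leaf errors of the branch rooted at `node`."""
    return sum(leaf.error for leaf in leaves(node))


def link_strength(node: Node) -> float:
    """g(t): increase in error per leaf removed if the branch is collapsed."""
    return (node.error - branch_error(node)) / (len(leaves(node)) - 1)


def weakest_link(root: Node) -> Node:
    """Internal node with the smallest g(t)."""
    return min(internal_nodes(root), key=link_strength)


def pruning_sequence(root: Node) -> List[Tuple[float, int, float]]:
    """Collapse the weakest link repeatedly until only the root remains.

    Returns (alpha, number of leaves, tree error) for each subtree.
    Note: this mutates the tree, and it collapses one node per step,
    whereas CART collapses all nodes tied at the minimum together.
    """
    sequence = [(0.0, len(leaves(root)), branch_error(root))]
    while not root.is_leaf():
        node = weakest_link(root)
        alpha = link_strength(node)
        node.left = node.right = None  # collapse the branch into a leaf
        sequence.append((alpha, len(leaves(root)), branch_error(root)))
    return sequence


def build_example_tree() -> Node:
    """Toy tree with made-up error values (illustrative only)."""
    a = Node("A", 0.10, Node("A1", 0.04), Node("A2", 0.05))
    b = Node("B", 0.20, Node("B1", 0.04), Node("B2", 0.05))
    return Node("root", 0.40, a, b)


if __name__ == "__main__":
    for alpha, n_leaves, err in pruning_sequence(build_example_tree()):
        print(f"alpha={alpha:.3f}  leaves={n_leaves}  error={err:.2f}")
```

In the two-step procedure, one subtree from such a sequence would then be chosen, typically the one with the lowest error estimated on a test sample or by cross-validation rather than on the training data.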




Copyright information

© 2015 Springer International Publishing Switzerland

About this paper

Cite this paper

Iorio, C., Aria, M., D’Ambrosio, A. (2015). A New Proposal for Tree Model Selection and Visualization. In: Morlini, I., Minerva, T., Vichi, M. (eds) Advances in Statistical Models for Data Analysis. Studies in Classification, Data Analysis, and Knowledge Organization. Springer, Cham. https://doi.org/10.1007/978-3-319-17377-1_16


DOI: https://doi.org/10.1007/978-3-319-17377-1_16

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-17376-4

Online ISBN: 978-3-319-17377-1

eBook Packages: Mathematics and Statistics (R0)