Abstract

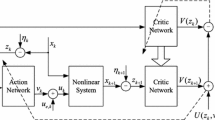

In this paper, a new generalized policy iteration (GPI) based adaptive dynamic programming (ADP) algorithm is developed to solve optimal control problems for infinite-horizon discrete-time nonlinear systems. The GPI algorithm is a general scheme in which the policy iteration and value iteration algorithms of ADP interact. It uses two iteration indices, one for policy improvement and one for policy evaluation. The convergence properties of the GPI algorithm are analyzed. Finally, simulation results are presented to illustrate the performance of the developed algorithm.
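The interplay of the two iteration indices can be illustrated with a minimal tabular sketch. This is not the authors' neural-network implementation: it assumes a toy problem with five states, three actions, a deterministic transition table, and an undiscounted quadratic stage cost, and every name in it (`gpi`, `next_state`, `cost`, `n_eval_steps`, `n_outer`) is hypothetical. The outer index `i` performs policy improvement and the inner index `j` performs a fixed number of policy evaluation sweeps.

```python
import numpy as np

def gpi(next_state, cost, n_eval_steps, n_outer=20):
    """Tabular generalized policy iteration (illustrative sketch only).

    next_state[x, u] is the successor state index, cost[x, u] the stage cost.
    n_eval_steps is the number of evaluation sweeps per outer iteration.
    """
    n_states = cost.shape[0]
    rows = np.arange(n_states)
    V = np.zeros(n_states)                    # assumed initial value function
    policy = np.zeros(n_states, dtype=int)
    for i in range(n_outer):
        # Policy improvement: act greedily with respect to the current V.
        Q = cost + V[next_state]
        policy = Q.argmin(axis=1)
        # Policy evaluation: n_eval_steps backups under the fixed policy.
        for j in range(n_eval_steps):
            V = cost[rows, policy] + V[next_state[rows, policy]]
    return V, policy

# Toy system (assumed): x_{k+1} = max(x_k - u_k, 0), stage cost x^2 + u^2.
states = np.arange(5)
steps = np.array([0, 1, 2])
next_state = np.maximum(states[:, None] - steps[None, :], 0)
cost = (states[:, None] ** 2 + steps[None, :] ** 2).astype(float)

V, policy = gpi(next_state, cost, n_eval_steps=3)
print(V, policy)
```

In this sketch, `n_eval_steps=1` reduces to value iteration, while a large `n_eval_steps` approaches policy iteration; intermediate values sit between the two.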

This work was supported in part by the National Natural Science Foundation of China under Grants 61034002, 61233001, 61273140, 61304086, and 61374105, and in part by the Beijing Natural Science Foundation under Grant 4132078.

Copyright information

© 2014 Springer International Publishing Switzerland

About this paper

Cite this paper

Wei, Q., Liu, D., Yang, X. (2014). Discrete-Time Nonlinear Generalized Policy Iteration for Optimal Control Using Neural Networks. In: Loo, C.K., Yap, K.S., Wong, K.W., Teoh, A., Huang, K. (eds) Neural Information Processing. ICONIP 2014. Lecture Notes in Computer Science, vol 8834. Springer, Cham. https://doi.org/10.1007/978-3-319-12637-1_49

DOI: https://doi.org/10.1007/978-3-319-12637-1_49

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-12636-4

Online ISBN: 978-3-319-12637-1

eBook Packages: Computer Science, Computer Science (R0)