Abstract

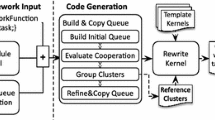

When work is shared among GPUs and CPU cores on GPU-equipped clusters, keeping the load balanced across these heterogeneous computing resources is a critical issue. To address this problem, we have been developing XcalableMP-dev/StarPU [1], a run-time system built on a PGAS language. Through this development, we found that adaptive load balancing is necessary for GPU/CPU work sharing to achieve the best performance across various application codes.

In this paper, we enhance our language system XcalableMP-dev/StarPU with a new feature that dynamically controls the size of the tasks assigned to these heterogeneous resources during application execution. Performance evaluation on several benchmarks confirmed that the proposed feature works correctly and that heterogeneous work sharing achieves up to about 40% higher performance than GPU-only execution, even for relatively small problem sizes.

References

Odajima, T., Boku, T., Hanawa, T., Lee, J., Sato, M.: GPU/CPU Work Sharing with Parallel Language XcalableMP-dev for Parallelized Accelerated Computing. In: Sixth International Workshop on Parallel Programming Models and Systems Software for High-End Computing (P2S2), pp. 97–106 (September 2012)

XcalableMP, http://www.xcalablemp.org/

Lee, J., MinhTuan, T., Odajima, T., Boku, T., Sato, M.: An Extension of XcalableMP PGAS Language for Multi-node GPU Clusters. In: HeteroPar 2011 (with EuroPar 2011), pp. 429–439 (2011)

Lee, J., Sato, M.: Implementation and Performance Evaluation of XcalableMP: A Parallel Programming Language for Distributed Memory Systems. In: Third International Workshop on Parallel Programming Models and Systems Software for High-End Computing (P2S2), pp. 413–420 (September 2010)

High Performance Fortran Version 2.0, http://www.hpfpc.org/jahpf/spec/hpf-v20-j10.pdf

Texas Advanced Computing Center - GotoBlas2, http://www.tacc.utexas.edu/tacc-projects/gotoblas2

PGI Accelerator Compiler, http://www.softek.co.jp/SPG/Pgi/Accel/index.html

HMPP Workbench, http://www.caps-entreprise.com/hmpp.html

Agullo, E., Augonnet, C., Dongarra, J., Ltaief, H., Namyst, R., Thibault, S., Tomov, S.: Faster, Cheaper, Better - a Hybridization Methodology to Develop Linear Algebra Software for GPUs. In: GPU Computing Gems, vol. 2 (September 2010)

Augonnet, C., Thibault, S., Namyst, R.: StarPU: a Runtime System for Scheduling Tasks over Accelerator-Based Multicore Machines. Concurrency Computat.: Pract. Exper. (March 2010)

Copyright information

© 2013 Springer International Publishing Switzerland

About this paper

Cite this paper

Odajima, T. et al. (2013). Adaptive Task Size Control on High Level Programming for GPU/CPU Work Sharing. In: Aversa, R., Kołodziej, J., Zhang, J., Amato, F., Fortino, G. (eds) Algorithms and Architectures for Parallel Processing. ICA3PP 2013. Lecture Notes in Computer Science, vol 8286. Springer, Cham. https://doi.org/10.1007/978-3-319-03889-6_7

DOI: https://doi.org/10.1007/978-3-319-03889-6_7

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-03888-9

Online ISBN: 978-3-319-03889-6

eBook Packages: Computer Science, Computer Science (R0)