Abstract

Current camouflaged object detection models still do not handle broken boundaries and missing internal texture features well, and they generally have large parameter counts. To overcome these challenges, we propose BGENet, a fusion boundary and gradient enhancement network that uses gradient features and boundary features together to guide the context features. BGENet is divided into three branches: a context feature branch, a boundary feature branch, and a gradient feature branch. A parallel context information enhancement module is introduced to enhance the context features. The designed pre-background information interaction module highlights the boundary features of the camouflaged object and guides the context features, compensating for boundary breaks in them. The proposed gradient guidance module uses the learned gradient features to guide the context features and enhance their internal information. Experiments on three datasets, CAMO, COD10K, and NC4K, confirm the effectiveness of BGENet, which uses only 20.81M parameters and achieves superior performance compared with traditional and SOTA methods.
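The idea of guiding context features with boundary and gradient features can be illustrated with a toy sketch. This is not the BGENet implementation: the abstract does not specify how the guidance is computed, so the residual gating rule `context * (1 + guidance)`, the function names `guide` and `fuse`, and the tiny 2x2 maps are all assumptions chosen only to show one plausible way two auxiliary maps might reinforce a context map.

```python
# Hypothetical illustration only; the gating rule and all names are assumptions,
# not the modules described in the paper.
from typing import List

Map = List[List[float]]


def guide(context: Map, guidance: Map) -> Map:
    """Residual gating: scale each context value by (1 + guidance value)."""
    same_rows = len(context) == len(guidance)
    same_cols = all(len(c) == len(g) for c, g in zip(context, guidance))
    if not (same_rows and same_cols):
        raise ValueError("context and guidance maps must have the same shape")
    return [
        [c * (1.0 + g) for c, g in zip(c_row, g_row)]
        for c_row, g_row in zip(context, guidance)
    ]


def fuse(context: Map, boundary: Map, gradient: Map) -> Map:
    """Apply boundary guidance first, then gradient guidance."""
    return guide(guide(context, boundary), gradient)


# Toy 2x2 maps: the boundary map fires top-left, the gradient map bottom-right.
context = [[0.5, 0.5], [0.5, 0.5]]
boundary = [[1.0, 0.0], [0.0, 0.0]]
gradient = [[0.0, 0.0], [0.0, 1.0]]

fused = fuse(context, boundary, gradient)
print(fused)
```

In this sketch, positions where a guidance map is zero pass the context value through unchanged, so the auxiliary maps can only strengthen a response, never suppress it. A real network would learn such guidance with convolutional layers rather than a fixed rule.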


References

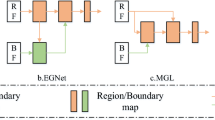

Chen, G., Liu, S.J., Sun, Y.J., Ji, G.P., Wu, Y.F., Zhou, T.: Camouflaged object detection via context-aware cross-level fusion. IEEE Trans. Circuits Syst. Video Technol. 32(10), 6981–6993 (2022)

Chen, S., Tan, X., Wang, B., Hu, X.: Reverse attention for salient object detection. In: Ferrari, V., Hebert, M., Sminchisescu, C., Weiss, Y. (eds.) ECCV 2018. LNCS, vol. 11213, pp. 236–252. Springer, Cham (2018). https://doi.org/10.1007/978-3-030-01240-3_15

Dai, Y., Gieseke, F., Oehmcke, S., Wu, Y., Barnard, K.: Attentional feature fusion. In: Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, pp. 3560–3569 (2021)

Fan, C., Zeng, Z., Xiao, L., Qu, X.: GFNet: automatic segmentation of COVID-19 lung infection regions using CT images based on boundary features. Pattern Recogn. 132, 108963 (2022)

Fan, D.P., Cheng, M.M., Liu, Y., Li, T., Borji, A.: Structure-measure: a new way to evaluate foreground maps. In: Proceedings of the IEEE International Conference on Computer Vision, pp. 4548–4557 (2017)

Fan, D.P., Gong, C., Cao, Y., Ren, B., Cheng, M.M., Borji, A.: Enhanced-alignment measure for binary foreground map evaluation. arXiv preprint arXiv:1805.10421 (2018)

Fan, D.P., Ji, G.P., Cheng, M.M., Shao, L.: Concealed object detection. IEEE Trans. Pattern Anal. Mach. Intell. 44(10), 6024–6042 (2021)

Fan, D.P., Ji, G.P., Sun, G., Cheng, M.M., Shen, J., Shao, L.: Camouflaged object detection. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 2777–2787 (2020)

Fan, D.-P., et al.: PraNet: parallel reverse attention network for polyp segmentation. In: Martel, A.L., et al. (eds.) MICCAI 2020. LNCS, vol. 12266, pp. 263–273. Springer, Cham (2020). https://doi.org/10.1007/978-3-030-59725-2_26

Gallego, J., Bertolino, P.: Foreground object segmentation for moving camera sequences based on foreground-background probabilistic models and prior probability maps. In: 2014 IEEE International Conference on Image Processing (ICIP), pp. 3312–3316. IEEE (2014)

Huang, Z., et al.: Feature shrinkage pyramid for camouflaged object detection with transformers. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 5557–5566 (2023)

Ji, G.P., Fan, D.P., Chou, Y.C., Dai, D., Liniger, A., Van Gool, L.: Deep gradient learning for efficient camouflaged object detection. Mach. Intell. Res. 20(1), 92–108 (2023)

Kingma, D.P., Ba, J.: Adam: a method for stochastic optimization. arXiv preprint arXiv:1412.6980 (2014)

Le, T.N., Nguyen, T.V., Nie, Z., Tran, M.T., Sugimoto, A.: Anabranch network for camouflaged object segmentation. Comput. Vis. Image Underst. 184, 45–56 (2019)

Li, A., Zhang, J., Lv, Y., Liu, B., Zhang, T., Dai, Y.: Uncertainty-aware joint salient object and camouflaged object detection. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 10071–10081 (2021)

Liu, M., Di, X.: Extraordinary MHNet: military high-level camouflage object detection network and dataset. Neurocomputing. 126466 (2023)

Liu, Z., Huang, K., Tan, T.: Foreground object detection using top-down information based on EM framework. IEEE Trans. Image Process. 21(9), 4204–4217 (2012)

Lv, Y., et al.: Simultaneously localize, segment and rank the camouflaged objects. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 11591–11601 (2021)

Mao, Y., et al.: Transformer transforms salient object detection and camouflaged object detection. arXiv preprint arXiv:2104.10127 1(2), 5 (2021)

Margolin, R., Zelnik-Manor, L., Tal, A.: How to evaluate foreground maps? In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 248–255 (2014)

Mei, H., Ji, G.P., Wei, Z., Yang, X., Wei, X., Fan, D.P.: Camouflaged object segmentation with distraction mining. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 8772–8781 (2021)

Pan, Y., Chen, Y., Fu, Q., Zhang, P., Xu, X., et al.: Study on the camouflaged target detection method based on 3d convexity. Mod. Appl. Sci. 5(4), 152 (2011)

Paszke, A., et al.: Pytorch: an imperative style, high-performance deep learning library. In: Advances in Neural Information Processing Systems, vol. 32 (2019)

Sengottuvelan, P., Wahi, A., Shanmugam, A.: Performance of decamouflaging through exploratory image analysis. In: 2008 First International Conference on Emerging Trends in Engineering and Technology, pp. 6–10. IEEE (2008)

Sun, Y., Wang, S., Chen, C., Xiang, T.Z.: Boundary-guided camouflaged object detection. arXiv preprint arXiv:2207.00794 (2022)

Szegedy, C., Vanhoucke, V., Ioffe, S., Shlens, J., Wojna, Z.: Rethinking the inception architecture for computer vision. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 2818–2826 (2016)

Tan, M., Le, Q.: Efficientnetv2: smaller models and faster training. In: International Conference on Machine Learning, pp. 10096–10106. PMLR (2021)

Wang, J., et al.: Multi-feature information complementary detector: a high-precision object detection model for remote sensing images. Remote Sens. 14(18), 4519 (2022)

Yang, F., et al.: Uncertainty-guided transformer reasoning for camouflaged object detection. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 4146–4155 (2021)

Zheng, D., Zheng, X., Yang, L.T., Gao, Y., Zhu, C., Ruan, Y.: MFFN: multi-view feature fusion network for camouflaged object detection. In: Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, pp. 6232–6242 (2023)

Zhu, H., et al.: I can find you! boundary-guided separated attention network for camouflaged object detection. In: Proceedings of the AAAI Conference on Artificial Intelligence, vol. 36, pp. 3608–3616 (2022)

Zhuge, M., Lu, X., Guo, Y., Cai, Z., Chen, S.: Cubenet: x-shape connection for camouflaged object detection. Pattern Recogn. 127, 108644 (2022)

Acknowledgements

This work was supported by the Inner Mongolia Science and Technology Project (No. 2021GG0166).


Copyright information

© 2024 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Liu, G., Wu, W. (2024). Fusion Boundary and Gradient Enhancement Network for Camouflage Object Detection. In: Rudinac, S., et al. MultiMedia Modeling. MMM 2024. Lecture Notes in Computer Science, vol 14555. Springer, Cham. https://doi.org/10.1007/978-3-031-53308-2_14


DOI: https://doi.org/10.1007/978-3-031-53308-2_14


Publisher Name: Springer, Cham

Print ISBN: 978-3-031-53307-5

Online ISBN: 978-3-031-53308-2

eBook Packages: Computer Science (R0)