Abstract

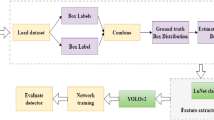

Object detection algorithms have become increasingly popular because of their significant contribution to computer vision. They fall into two families: (i) region-based approaches and (ii) region-free approaches. In this paper, we implement two region-free techniques, YOLOv4 and YOLOv5, chosen for their high detection speed and accuracy. The objective is to identify the relevant and non-relevant parts of surveillance videos, since watching entire video footage is time-consuming. The use case is ATM surveillance footage, for which no dataset is publicly available; we therefore built our own dataset and used it to train and compare the two models. In our experiments, YOLOv5 achieves 84% accuracy and outperforms YOLOv4, which achieves only 56%.
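To make the notion of "relevant" and "non-relevant" parts concrete, the following minimal sketch shows one way per-frame detector output could be turned into relevant video segments. It is an illustration under stated assumptions, not the authors' pipeline: the function name `relevant_segments`, the parameters `conf_threshold` and `max_gap`, and the input format (one list of detection confidence scores per frame) are all hypothetical, and any detector, YOLOv4 or YOLOv5 included, is assumed to have been run beforehand.

```python
from typing import List, Tuple


def relevant_segments(
    frame_scores: List[List[float]],
    conf_threshold: float = 0.5,  # assumed cut-off, not taken from the paper
    max_gap: int = 0,             # assumed tolerance for empty frames inside a segment
) -> List[Tuple[int, int]]:
    """Return inclusive (start, end) frame-index ranges that contain detections.

    frame_scores[i] holds the confidence scores of all objects detected in
    frame i. A frame counts as relevant if at least one score reaches
    conf_threshold. Relevant frames separated by at most max_gap
    non-relevant frames are merged into one segment.
    """
    segments: List[Tuple[int, int]] = []
    start = None      # first frame of the segment currently being built
    last_hit = None   # most recent relevant frame seen so far

    for idx, scores in enumerate(frame_scores):
        if not any(s >= conf_threshold for s in scores):
            continue  # non-relevant frame
        if start is None:
            start = idx
        elif idx - last_hit - 1 > max_gap:
            # Too many empty frames since the last hit: close the segment.
            segments.append((start, last_hit))
            start = idx
        last_hit = idx

    if start is not None:
        segments.append((start, last_hit))
    return segments


if __name__ == "__main__":
    # Hypothetical scores for six frames; frames 0 and 3 have no detections.
    scores = [[], [0.9], [0.7, 0.2], [], [0.3], [0.8]]
    print(relevant_segments(scores))             # strict: no gaps allowed
    print(relevant_segments(scores, max_gap=2))  # bridge short gaps
```

Everything outside the returned ranges would be treated as non-relevant footage that a reviewer can skip. Allowing a small `max_gap` is a design choice that keeps a single missed detection from splitting one event into several short clips.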

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Mohod, N., Agrawal, P., Madaan, V. (2023). YOLOv4 Vs YOLOv5: Object Detection on Surveillance Videos. In: Woungang, I., Dhurandher, S.K., Pattanaik, K.K., Verma, A., Verma, P. (eds) Advanced Network Technologies and Intelligent Computing. ANTIC 2022. Communications in Computer and Information Science, vol 1798. Springer, Cham. https://doi.org/10.1007/978-3-031-28183-9_46

DOI: https://doi.org/10.1007/978-3-031-28183-9_46

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-28182-2

Online ISBN: 978-3-031-28183-9

eBook Packages: Computer Science, Computer Science (R0)