Abstract

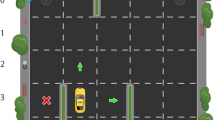

Deep reinforcement learning (RL) commonly suffers from high sample complexity and poor generalisation, especially with high-dimensional (image-based) input. Where available (such as in some robotic control domains), low-dimensional vector inputs outperform their image-based counterparts, but it is challenging to represent complex dynamic environments in this manner. Relational reinforcement learning instead represents the world as a set of objects and the relations between them, offering a flexible yet expressive view which provides structural inductive biases to aid learning. Recently, relational RL methods have been extended with modern function approximation using graph neural networks (GNNs). However, inherent limitations in the processing model for GNNs result in decreased returns when important information is dispersed widely throughout the graph. We outline a hybrid learning and planning model which uses reinforcement learning to propose and select subgoals for a planning model to achieve. This includes a novel action selection mechanism and loss function to allow training around the non-differentiable planner. We demonstrate our algorithm's effectiveness on a range of domains, including MiniHack and a challenging extension of the classic taxi domain.

Notes

1. Code available at https://github.com/AndrewPaulChester/oracle-sage.

References

Ammanabrolu, P., Riedl, M.: Playing text-adventure games with graph-based deep reinforcement learning. In: NAACL (2019)

Battaglia, P., et al.: Relational inductive biases, deep learning, and graph networks. arXiv preprint arXiv:1806.01261 (2018)

Beeching, E., et al.: Graph augmented deep reinforcement learning in the GameRLand3D environment. arXiv preprint arXiv:2112.11731 (2021)

Berry, D.A., Fristedt, B.: Bandit problems: sequential allocation of experiments (Monographs on Statistics and Applied Probability) (1985)

Chester, A., Dann, M., Zambetta, F., Thangarajah, J.: SAGE: generating symbolic goals for myopic models in deep reinforcement learning. arXiv preprint arXiv:2203.05079 (2022)

Dietterich, T.G.: Hierarchical reinforcement learning with the MAXQ value function decomposition. JAIR 13, 227–303 (2000)

Džeroski, S., De Raedt, L., Driessens, K.: Relational reinforcement learning. Mach. Learn. 43(1), 7–52 (2001)

Garg, S., Bajpai, A., Mausam, M.: Size independent neural transfer for RDDL planning. In: ICAPS (2019)

Garg, S., Bajpai, A., Mausam, M.: Symbolic network: generalized neural policies for relational MDPs. In: ICML (2020)

Geffner, H.: Model-free, model-based, and general intelligence. arXiv preprint arXiv:1806.02308 (2018)

Godwin, J., et al.: Simple GNN regularisation for 3D molecular property prediction and beyond. In: ICLR (2022)

Ha, D., Schmidhuber, J.: Recurrent world models facilitate policy evolution. In: NeurIPS (2018)

Hafner, D., Lillicrap, T., Norouzi, M., Ba, J.: Mastering Atari with discrete world models. In: ICLR (2021)

Hamrick, J.B., et al.: Relational inductive bias for physical construction in humans and machines. In: Proceedings of the Annual Meeting of the Cognitive Science Society (2018)

Henderson, P., Islam, R., Bachman, P., Pineau, J., Precup, D., Meger, D.: Deep reinforcement learning that matters. In: AAAI (2018)

Illanes, L., Yan, X., Icarte, R.T., McIlraith, S.A.: Symbolic plans as high-level instructions for reinforcement learning. In: ICAPS (2020)

Janisch, J., Pevný, T., Lisý, V.: Symbolic relational deep reinforcement learning based on graph neural networks. arXiv preprint arXiv:2009.12462 (2020)

Jiang, J., Dun, C., Huang, T., Lu, Z.: Graph convolutional reinforcement learning. In: ICLR (2019)

Kemp, C., Tenenbaum, J.B.: The discovery of structural form. PNAS 105(31), 10687–10692 (2008)

Kerkkamp, D., Bukhsh, Z., Zhang, Y., Jansen, N.: Grouping of maintenance actions with deep reinforcement learning and graph convolutional networks. In: ICAART (2022)

Kokel, H., Manoharan, A., Natarajan, S., Ravindran, B., Tadepalli, P.: RePReL: integrating relational planning and reinforcement learning for effective abstraction. In: ICAPS (2021)

Küttler, H., et al.: The NetHack learning environment. In: NeurIPS (2020)

Leonetti, M., Iocchi, L., Stone, P.: A synthesis of automated planning and reinforcement learning for efficient, robust decision-making. AIJ 241, 103–130 (2016)

Li, R., Jabri, A., Darrell, T., Agrawal, P.: Towards practical multi-object manipulation using relational reinforcement learning. In: ICRA (2020)

Liu, Z., Chen, C., Li, L., Zhou, J., Li, X., Song, L., Qi, Y.: GeniePath: graph neural networks with adaptive receptive paths. In: AAAI (2019)

Lyu, D., Yang, F., Liu, B., Gustafson, S.: SDRL: interpretable and data-efficient deep reinforcement learning leveraging symbolic planning. In: AAAI (2019)

McDermott, D., et al.: PDDL - the planning domain definition language. Technical Report, Yale Center for Computational Vision and Control (1998)

Mnih, V., et al.: Human-level control through deep reinforcement learning. Nature 518(7540), 529 (2015)

Navon, D.: Forest before trees: the precedence of global features in visual perception. Cogn. Psychol. 9(3), 353–383 (1977)

Roderick, M., Grimm, C., Tellex, S.: Deep abstract Q-networks. In: AAMAS (2018)

Rong, Y., Huang, W., Xu, T., Huang, J.: DropEdge: towards deep graph convolutional networks on node classification. In: ICLR (2020)

Samvelyan, M., et al.: MiniHack the planet: a sandbox for open-ended reinforcement learning research. In: NeurIPS Track on Datasets and Benchmarks (2021)

Schrittwieser, J., et al.: Mastering Atari, Go, chess and shogi by planning with a learned model. Nature 588(7839), 604–609 (2020)

Sievers, S., Röger, G., Wehrle, M., Katz, M.: Theoretical foundations for structural symmetries of lifted PDDL tasks. In: ICAPS (2019)

Topping, J., Di Giovanni, F., Chamberlain, B.P., Dong, X., Bronstein, M.M.: Understanding over-squashing and bottlenecks on graphs via curvature. arXiv preprint arXiv:2111.14522 (2021)

Wang, T., Liao, R., Ba, J., Fidler, S.: NerveNet: learning structured policy with graph neural networks. In: ICLR (2018)

Winder, J., et al.: Planning with abstract learned models while learning transferable subtasks. In: AAAI (2020)

Wöhlke, J., Schmitt, F., van Hoof, H.: Hierarchies of planning and reinforcement learning for robot navigation. In: ICRA (2021)

Zhou, J., et al.: Graph neural networks: a review of methods and applications. AI Open 1, 57–81 (2020)

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Chester, A., Dann, M., Zambetta, F., Thangarajah, J. (2023). Oracle-SAGE: Planning Ahead in Graph-Based Deep Reinforcement Learning. In: Amini, MR., Canu, S., Fischer, A., Guns, T., Kralj Novak, P., Tsoumakas, G. (eds) Machine Learning and Knowledge Discovery in Databases. ECML PKDD 2022. Lecture Notes in Computer Science, vol 13716. Springer, Cham. https://doi.org/10.1007/978-3-031-26412-2_4

DOI: https://doi.org/10.1007/978-3-031-26412-2_4

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-26411-5

Online ISBN: 978-3-031-26412-2

eBook Packages: Computer Science (R0)