Abstract

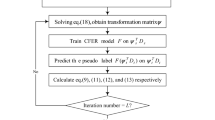

Facial Expression Recognition (FER) models trained on one dataset (the source) usually perform poorly on a different dataset (the target) because of the implicit domain shift between datasets. In addition, FER data is naturally highly imbalanced: most samples belong to a few expressions such as neutral and happy, while relatively few come from expressions such as disgust and fear, which makes the FER task even more challenging. This class imbalance in the source and target data (which may differ between the two), together with other factors such as the similarity between certain expressions, can cause confusion between classes and hence unsatisfactory classification performance on the target. In this work, we propose an integrated module, termed DIFC, which handles both the source Data Imbalance and the Feature Confusion of the target data to improve classification of the target expressions. We integrate this DIFC module with an existing Unsupervised Domain Adaptation (UDA) approach to handle the domain shift, and show that the proposed simple yet effective module yields significant performance improvement on four benchmark datasets for the Cross-Dataset FER (CD-FER) task. We also show that the proposed module works across different architectures and can be used with other UDA baselines to further boost their performance.
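To make the source-imbalance problem concrete, the sketch below shows one generic remedy: weighting the classification loss by inverse class frequency, so that rare expressions contribute as much as frequent ones. This is an illustrative assumption and not the DIFC module, whose details the abstract does not give. The function names and the toy label counts are hypothetical.

```python
import math
from collections import Counter

def inverse_frequency_weights(labels):
    """Per-class weights proportional to 1 / class count,
    rescaled so the weights average to 1 across classes."""
    counts = Counter(labels)
    raw = {c: 1.0 / n for c, n in counts.items()}
    scale = len(raw) / sum(raw.values())
    return {c: w * scale for c, w in raw.items()}

def weighted_cross_entropy(probs, labels, weights):
    """Weighted mean of -log p(true class) over a batch.
    probs: list of dicts mapping class name -> predicted probability."""
    total = sum(weights[y] * -math.log(p[y]) for p, y in zip(probs, labels))
    return total / sum(weights[y] for y in labels)

# Hypothetical toy source set: 'happy' dominates, 'disgust' is rare.
labels = ["happy"] * 8 + ["neutral"] * 3 + ["disgust"] * 1
weights = inverse_frequency_weights(labels)

# A classifier that is always uniform over the three classes.
uniform = [{"happy": 1 / 3, "neutral": 1 / 3, "disgust": 1 / 3} for _ in labels]
loss = weighted_cross_entropy(uniform, labels, weights)
```

With these weights, a mistake on a rare class such as disgust costs more than a mistake on a frequent class such as happy. Note that such reweighting only addresses imbalance in the labelled source data; it does nothing about confusion between target features, which the abstract names as a separate problem.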

Acknowledgements

This work is partly supported through a research grant from AISIN.

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Sreenivas, M., Takamuku, S., Biswas, S., Chepuri, A., Vengatesan, B., Natori, N. (2023). Improved Cross-Dataset Facial Expression Recognition by Handling Data Imbalance and Feature Confusion. In: Karlinsky, L., Michaeli, T., Nishino, K. (eds) Computer Vision – ECCV 2022 Workshops. ECCV 2022. Lecture Notes in Computer Science, vol 13805. Springer, Cham. https://doi.org/10.1007/978-3-031-25072-9_17

DOI: https://doi.org/10.1007/978-3-031-25072-9_17

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-25071-2

Online ISBN: 978-3-031-25072-9

eBook Packages: Computer Science, Computer Science (R0)