Abstract

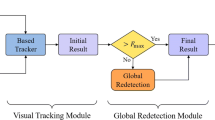

Tracking algorithms based on Siamese networks cannot update their templates as the appearance of the target changes. As a result, with convolution as the similarity measure, they struggle to capture background information and to distinguish background distractors that resemble the template, which leads to poor tracking robustness. To address this problem, a two-stage tracking algorithm based on a similarity measure over fused features of positive and negative samples is proposed. Using positive and negative sample libraries built online, a discriminator based on this fused-feature measure is learned and applied as a second discrimination stage to the candidate boxes of hard-sample frames. On the OTB2015 benchmark dataset, the algorithm reaches a tracking accuracy of 92.4% and a success rate of 70.7%. On the VOT2018 dataset, it improves accuracy by nearly 0.2%, robustness by 4.0% and expected average overlap (EAO) by 2.0% compared with the baseline network SiamRPN++. On the LaSOT dataset, it outperforms all compared algorithms; relative to the baseline network, its success rate increases by nearly 3.0% and its accuracy by more than 1.0%. Conclusions: The experimental results on the OTB2015, VOT2018 and LaSOT datasets show that the proposed method substantially improves tracking success rate and robustness over algorithms based on Siamese networks. It performs particularly well on LaSOT, which features long sequences, occlusion and large appearance changes.
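The second-stage idea described above can be illustrated with a minimal sketch. This is not the authors' discriminator, which is learned online on fused features; here a fixed cosine similarity stands in for it, and the names `cosine`, `rescore` and `pick_candidate`, the toy 2-D feature vectors, and the "positive match minus negative match" score are all assumptions made for illustration.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na > 0 and nb > 0 else 0.0

def rescore(candidate, positives, negatives):
    """Hypothetical second-stage score (assumption, not the paper's measure):
    best similarity to the positive library minus best similarity
    to the negative library."""
    pos = max(cosine(candidate, p) for p in positives)
    neg = max((cosine(candidate, n) for n in negatives), default=0.0)
    return pos - neg

def pick_candidate(candidates, positives, negatives):
    """Return the index of the highest-scoring candidate and all scores."""
    scores = [rescore(c, positives, negatives) for c in candidates]
    best = max(range(len(candidates)), key=scores.__getitem__)
    return best, scores

# Toy features: one target-like sample and one distractor-like sample.
positives = [[1.0, 0.0]]
negatives = [[0.0, 1.0]]
# Candidate 0 resembles the distractor, candidate 1 resembles the target.
candidates = [[0.1, 0.9], [0.9, 0.1]]
best, scores = pick_candidate(candidates, positives, negatives)
```

In this toy setup the candidate that resembles the stored negative sample is penalised, so the target-like candidate is selected even if a first-stage tracker had ranked the two closely.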


References

Bolme, D.S., Beveridge, J.R., Draper, B.A., Lui, Y.M.: Visual object tracking using adaptive correlation filters. In: 2010 IEEE Computer Society Conference on Computer Vision and Pattern Recognition, pp. 2544–2550. IEEE (2010)

Li, B., Yan, J., Wu, W., Zhu, Z., Hu, X.: High performance visual tracking with Siamese region proposal network. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 8971–8980 (2018)

Zhu, Z., Wang, Q., Li, B., Wu, W., Yan, J., Hu, W.: Distractor-aware Siamese networks for visual object tracking. In: Ferrari, V., Hebert, M., Sminchisescu, C., Weiss, Y. (eds.) ECCV 2018. LNCS, vol. 11213, pp. 103–119. Springer, Cham (2018). https://doi.org/10.1007/978-3-030-01240-3_7

Zhang, L., Gonzalez-Garcia, A., Weijer, J.v.d., Danelljan, M., Khan, F.S.: Learning the model update for Siamese trackers. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 4010–4019 (2019)

Bertinetto, L., Valmadre, J., Henriques, J.F., Vedaldi, A., Torr, P.H.S.: Fully-convolutional Siamese networks for object tracking. In: Hua, G., Jégou, H. (eds.) ECCV 2016. LNCS, vol. 9914, pp. 850–865. Springer, Cham (2016). https://doi.org/10.1007/978-3-319-48881-3_56

Danelljan, M., Bhat, G., Khan, F.S., Felsberg, M.: ATOM: accurate tracking by overlap maximization. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 4660–4669 (2019)

Li, B., Wu, W., Wang, Q., Zhang, F., Xing, J., Yan, J.: SiamRPN++: evolution of Siamese visual tracking with very deep networks. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 4282–4291 (2019)

Xu, Y., Wang, Z., Li, Z., Yuan, Y., Yu, G.: SiamFC++: towards robust and accurate visual tracking with target estimation guidelines. In: Proceedings of the AAAI Conference on Artificial Intelligence, vol. 34, pp. 12549–12556 (2020)

Zhang, Z., Peng, H., Fu, J., Li, B., Hu, W.: Ocean: object-aware anchor-free tracking. In: Vedaldi, A., Bischof, H., Brox, T., Frahm, J.-M. (eds.) ECCV 2020. LNCS, vol. 12366, pp. 771–787. Springer, Cham (2020). https://doi.org/10.1007/978-3-030-58589-1_46

Chen, X., Yan, B., Zhu, J., Wang, D., Yang, X., Lu, H.: Transformer tracking. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 8126–8135 (2021)

Wang, M., Liu, Y., Huang, Z.: Large margin object tracking with circulant feature maps. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 4021–4029 (2017)

Zhang, Z., Peng, H.: Deeper and wider Siamese networks for real-time visual tracking. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 4591–4600. IEEE (2019)

Guo, D., Wang, J., Cui, Y., Wang, Z., Chen, S.: SiamCAR: Siamese fully convolutional classification and regression for visual tracking. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 6269–6277. IEEE (2020)

Chen, Z., Zhong, B., Li, G., Zhang, S., Ji, R.: Siamese box adaptive network for visual tracking. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 6668–6677. IEEE (2020)

Voigtlaender, P., Luiten, J., Torr, P.H.S., Leibe, B.: Siam R-CNN: visual tracking by re-detection. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 6578–6588. IEEE (2020)

Danelljan, M., Bhat, G., Khan, F.S., Felsberg, M.: ECO: efficient convolution operators for tracking. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 6638–6646. IEEE (2017)

Guo, Q., Feng, W., Zhou, C., Huang, R., Wan, L., Wang, S.: Learning dynamic Siamese network for visual object tracking. In: Proceedings of the IEEE International Conference on Computer Vision, pp. 1763–1771. IEEE (2017)

Nam, H., Han, B.: Learning multi-domain convolutional neural networks for visual tracking. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 4293–4302. IEEE (2016)

Acknowledgments

This research was funded by NSFC (No. 62162045, 61866028), Technology Innovation Guidance Program Project (No. 20212BDH81003) and Postgraduate Innovation Special Fund Project (No. YC2021133).


Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Huang, K., Chu, J., Qin, P. (2022). Two-stage Object Tracking Based on Similarity Measurement for Fused Features of Positive and Negative Samples. In: Yu, S., et al. Pattern Recognition and Computer Vision. PRCV 2022. Lecture Notes in Computer Science, vol 13537. Springer, Cham. https://doi.org/10.1007/978-3-031-18916-6_49


DOI: https://doi.org/10.1007/978-3-031-18916-6_49


Publisher Name: Springer, Cham

Print ISBN: 978-3-031-18915-9

Online ISBN: 978-3-031-18916-6

eBook Packages: Computer Science, Computer Science (R0)