Abstract

In this work, a kernel attention module is presented for the task of EEG-based emotion classification with neural networks. The proposed module implements a self-attention mechanism by means of a kernel trick, requiring significantly fewer trainable parameters and computations than standard attention modules. The design also provides a scalar for quantitatively examining the amount of attention assigned during deep feature refinement, which helps to better interpret a trained model. Using EEGNet as the backbone model, extensive experiments are conducted on the SEED dataset to assess the module’s performance on within-subject classification tasks compared to other state-of-the-art (SOTA) attention modules. Requiring only one extra parameter, the inserted module is shown to raise the base model’s mean prediction accuracy across 15 subjects by up to more than 1%. A key component of the method is the interpretability of solutions, which is addressed using several different techniques and is included throughout as part of the dependency analysis.
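The abstract does not spell out the module's equations, so the following is only a minimal NumPy sketch of the general idea of kernel-based self-attention with a single scalar parameter, not the authors' implementation. It assumes a Gaussian (RBF) kernel as the similarity function, a fixed bandwidth `gamma`, and a residual mixing weight `alpha` standing in for the one extra trainable parameter; the function name `kernel_attention` and all of these choices are hypothetical.

```python
import numpy as np


def kernel_attention(x, alpha, gamma=1.0):
    """Hypothetical kernel self-attention sketch (not the paper's exact module).

    x     : array of shape (n_tokens, n_features), e.g. one row per feature map
    alpha : scalar mixing weight; the only parameter that would be trained here
    gamma : fixed Gaussian-kernel bandwidth (an assumption of this sketch)
    """
    # Pairwise squared Euclidean distances between all rows of x.
    sq_norms = np.sum(x * x, axis=1)
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * (x @ x.T)
    sq_dists = np.maximum(sq_dists, 0.0)  # guard against tiny negative round-off

    # The kernel supplies the similarity scores, so no learned
    # query/key/value projection matrices are needed.
    scores = np.exp(-gamma * sq_dists)

    # Row-normalize so that each token's attention weights sum to 1.
    attn = scores / scores.sum(axis=1, keepdims=True)

    # Residual refinement: alpha controls how much attended signal is mixed in.
    refined = x + alpha * (attn @ x)
    return refined, attn


# Usage: 62 tokens (one per EEG channel, as an illustration) with 8 features each.
rng = np.random.default_rng(0)
features = rng.standard_normal((62, 8))
refined, attn = kernel_attention(features, alpha=0.5)
```

In a sketch like this, setting `alpha` to zero recovers the backbone's features unchanged, so the learned value of `alpha` can be read as a rough measure of how much attention is applied during refinement.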

Notes

1. These are the kernel weights of the first depthwise convolutional layer. They have shape (1, 62) and can be directly associated with the 62 EEG sensor locations on the scalp.

2. This might be an interesting coincidence, since we also had other cases in our experiments where they did not meet exactly.

References

Bahdanau, D., Cho, K., Bengio, Y.: Neural machine translation by jointly learning to align and translate (2016)

Blankertz, B., et al.: Invariant common spatial patterns: alleviating nonstationarities in brain-computer interfacing. In: Advances in neural Information Processing Systems, pp. 113–120 (2008)

Blankertz, B., Lemm, S., Treder, M., Haufe, S., Müller, K.R.: Single-trial analysis and classification of ERP components–a tutorial. Neuroimage 56(2), 814–825 (2011)

Cecotti, H., Graser, A.: Convolutional neural networks for p300 detection with application to brain-computer interfaces. IEEE Trans. Pattern Anal. Mach. Intell. 33(3), 433–445 (2010)

Congedo, M., Barachant, A., Bhatia, R.: Riemannian geometry for EEG-based brain-computer interfaces; a primer and a review. Brain-Comput. Interf. 4(3), 155–174 (2017)

Dosovitskiy, A., et al.: An image is worth 16x16 words: Transformers for image recognition at scale. arXiv preprint arXiv:2010.11929 (2020)

Fazli, S., Popescu, F., Danóczy, M., Blankertz, B., Müller, K.R., Grozea, C.: Subject-independent mental state classification in single trials. Neural Netw. 22(9), 1305–1312 (2009)

Goghari, V.M., MacDonald, A.W., III., Sponheim, S.R.: Temporal lobe structures and facial emotion recognition in schizophrenia patients and nonpsychotic relatives. Schizophr. Bull. 37(6), 1281–1294 (2011)

He, K., Chen, X., Xie, S., Li, Y., Dollár, P., Girshick, R.: Masked autoencoders are scalable vision learners. arXiv preprint arXiv:2111.06377 (2021)

Hu, J., Shen, L., Sun, G.: Squeeze-and-excitation networks. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 7132–7141 (2018)

Kumfor, F., Irish, M., Hodges, J.R., Piguet, O.: Frontal and temporal lobe contributions to emotional enhancement of memory in behavioral-variant frontotemporal dementia and Alzheimer’s disease. Front. Behav. Neurosci. 8, 225 (2014)

Lawhern, V.J., Solon, A.J., Waytowich, N.R., Gordon, S.M., Hung, C.P., Lance, B.J.: EEGNet: a compact convolutional neural network for EEG-based brain-computer interfaces. J. Neural Eng. 15(5), 056013 (2018)

Li, J., Zhang, L.: Bilateral adaptation and neurofeedback for brain computer interface system. J. Neurosci. Methods 193(2), 373–379 (2010)

Liu, G., Huang, G., Meng, J., Zhang, D., Zhu, X.: Improved GMM with parameter initialization for unsupervised adaptation of brain-computer interface. Int. J. Num. Methods Biomed. Eng. 26(6), 681–691 (2010)

Liu, G., Zhang, D., Meng, J., Huang, G., Zhu, X.: Unsupervised adaptation of electroencephalogram signal processing based on fuzzy c-means algorithm. Int. J. Adapt. Control Signal Process. 26(6), 482–495 (2012)

Liu, Z., et al.: Swin transformer: hierarchical vision transformer using shifted windows. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 10012–10022 (2021)

Lotte, F., Bougrain, L., Cichocki, A., Clerc, M., Congedo, M., Rakotomamonjy, A., Yger, F.: A review of classification algorithms for EEG-based brain-computer interfaces: a 10 year update. J. Neural Eng. 15(3), 031005 (2018)

Lotte, F., Congedo, M., Lécuyer, A., Lamarche, F., Arnaldi, B.: A review of classification algorithms for EEG-based brain-computer interfaces. J. Neural Eng. 4(2), R1 (2007)

Lu, N., Li, T., Ren, X., Miao, H.: A deep learning scheme for motor imagery classification based on restricted Boltzmann machines. IEEE Trans. Neural Syst. Rehabil. Eng. 25(6), 566–576 (2016)

Schlögl, A., Vidaurre, C., Müller, K.R.: Adaptive methods in BCI research-an introductory tutorial. In: Graimann, B., Pfurtscheller, G., Allison, B. (eds.) Brain-Computer Interfaces, pp. 331–355. Springer, Heidelberg (2009). https://doi.org/10.1007/978-3-642-02091-9_18

Steyrl, D., Scherer, R., Faller, J., Müller-Putz, G.R.: Random forests in non-invasive sensorimotor rhythm brain-computer interfaces: a practical and convenient non-linear classifier. Biomed. Eng. Biomedizinische Technik 61(1), 77–86 (2016)

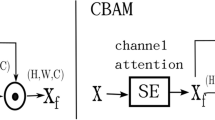

Woo, S., Park, J., Lee, J.Y., Kweon, I.S.: CBAM: convolutional block attention module. In: Proceedings of the European Conference on Computer Vision (ECCV), pp. 3–19 (2018)

Zheng, W.L., Lu, B.L.: Investigating critical frequency bands and channels for EEG-based emotion recognition with deep neural networks. IEEE Trans. Auton. Ment. Dev. 7(3), 162–175 (2015)

Acknowledgements

This work was partially supported by the Fundamental Research Funds for the Central Universities, Sun Yat-sen University (22qntd2901).

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Kuang, D., Michoski, C. (2022). KAM - A Kernel Attention Module for Emotion Classification with EEG Data. In: Reyes, M., Henriques Abreu, P., Cardoso, J. (eds) Interpretability of Machine Intelligence in Medical Image Computing. iMIMIC 2022. Lecture Notes in Computer Science, vol 13611. Springer, Cham. https://doi.org/10.1007/978-3-031-17976-1_9

DOI: https://doi.org/10.1007/978-3-031-17976-1_9

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-17975-4

Online ISBN: 978-3-031-17976-1

eBook Packages: Computer Science (R0)