Abstract

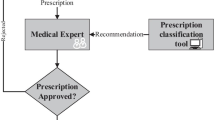

Over the last decades, artificial intelligence (AI) systems have been increasingly adopted in assistive (and possibly collaborative) decision-making tools. In particular, AI-based persuasive technologies are designed to steer or influence users’ behaviour, habits, and choices to help them achieve their own predetermined goals. Today, the inputs received by assistive systems rely heavily on data-driven AI approaches. It is therefore imperative that both the recommendations and the process leading to them be transparent and understandable to the user. The Explainable AI (XAI) community has progressively contributed to “opening the black box”, ensuring the interaction’s effectiveness, and pursuing the safety of the individuals involved. However, principles and methods that ensure the efficacy of explanations and the retention of information by the human recipient have not yet been introduced. The risk is to underestimate the context dependency and subjectivity of how explanations are understood, interpreted, and judged relevant. Moreover, even a plausible (and possibly expected) explanation can lead to an imprecise or incorrect outcome, or to an incorrect understanding of it. This can produce unbalanced and unfair circumstances, such as a financial advantage for the system owner or provider to the detriment of the user.

This paper highlights that explanations alone, especially in the context of persuasive technologies, are not sufficient to protect users’ psychological and physical integrity. On the contrary, explanations can be misused and themselves become a tool of manipulation. Therefore, we suggest characteristics that safeguard explanations from being manipulative, together with legal principles to be used as criteria for evaluating the operation of XAI systems from both an ex-ante and an ex-post perspective.

Acknowledgments

This work has received funding from the Joint Doctorate grant agreement No 814177 LAST-JD-Rights of Internet of Everything.

This work is partially supported by the Chist-Era grant CHIST-ERA19-XAI-005, and by (i) the Swiss National Science Foundation (G.A. 20CH21_195530), (ii) the Italian Ministry for Universities and Research, (iii) the Luxembourg National Research Fund (G.A. INTER/CHIST/19/14589586), (iv) the Scientific and Research Council of Turkey (TÜBİTAK, G.A. 120N680).

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Carli, R., Najjar, A., Calvaresi, D. (2022). Risk and Exposure of XAI in Persuasion and Argumentation: The case of Manipulation. In: Calvaresi, D., Najjar, A., Winikoff, M., Främling, K. (eds) Explainable and Transparent AI and Multi-Agent Systems. EXTRAAMAS 2022. Lecture Notes in Computer Science, vol 13283. Springer, Cham. https://doi.org/10.1007/978-3-031-15565-9_13

DOI: https://doi.org/10.1007/978-3-031-15565-9_13

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-15564-2

Online ISBN: 978-3-031-15565-9

eBook Packages: Computer Science (R0)