Abstract

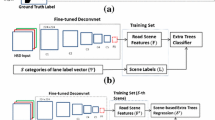

Autonomous driving relies increasingly on perception to analyze the environment before making decisions that affect driving. However, recent machine learning techniques do not provide the interpretability needed to guarantee sufficient driving safety. Combining multiple sources, deterministic or not, allows results to be cross-referenced and therefore made more reliable. In this paper, we propose a novel methodology that aligns an infrastructure mapping system with point cloud analysis for the perception of railway tracks and catenaries, in order to ensure the safety of autonomous trains. By using a deep learning model to recognize and classify rails with implicit knowledge of the railway infrastructure, we outperform all previous infrastructure systems: 60.9% mIoU for track segmentation and 9.27 points mMink for point alignment with the ground truth, at a runtime of 20 Hz. Moreover, we propose an embedded solution for automatic monitoring that avoids hours of maintenance traffic on the railway tracks. This solution serves as the acquisition system that feeds the map and the perception module with real-world data for autonomous trains.
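For readers unfamiliar with the segmentation metric quoted above, the following is a minimal sketch of how a mean intersection-over-union (mIoU) score is commonly computed from per-point labels. It is not the authors' evaluation code: the function name `mean_iou`, the class ids, and the choice to skip classes absent from both prediction and ground truth are assumptions made for illustration only.

```python
from typing import Sequence


def mean_iou(pred: Sequence[int], truth: Sequence[int], num_classes: int) -> float:
    """Average the per-class intersection-over-union of two label sequences."""
    if len(pred) != len(truth):
        raise ValueError("pred and truth must have the same length")
    ious = []
    for c in range(num_classes):
        # Points labelled c in both sequences.
        inter = sum(1 for p, t in zip(pred, truth) if p == c and t == c)
        # Points labelled c in at least one sequence.
        union = sum(1 for p, t in zip(pred, truth) if p == c or t == c)
        if union > 0:  # assumption: classes absent from both are ignored
            ious.append(inter / union)
    return sum(ious) / len(ious) if ious else 0.0


# Hypothetical class ids: 0 = background, 1 = rail, 2 = catenary.
truth = [0, 0, 1, 1, 2, 2]
pred = [0, 1, 1, 1, 2, 0]
print(mean_iou(pred, truth, num_classes=3))
```

Benchmarks differ in how they treat absent or ignored classes, so a score computed this way is only comparable to a published figure if the same convention is used.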

This research work is funded by the French program “Investissements d’Avenir” and is part of the French collaborative project TASV (Train Autonome Service Voyageurs), with SNCF, Alstom Crespin, Thales, Bosch, and Spirops.

Copyright information

© 2022 Springer Nature Switzerland AG

About this paper

Cite this paper

Mahtani, A., Chouchani, N., Herbreteau, M., Rafin, D. (2022). Enhancing Autonomous Train Safety Through A Priori-Map Based Perception. In: Collart-Dutilleul, S., Haxthausen, A.E., Lecomte, T. (eds) Reliability, Safety, and Security of Railway Systems. Modelling, Analysis, Verification, and Certification. RSSRail 2022. Lecture Notes in Computer Science, vol 13294. Springer, Cham. https://doi.org/10.1007/978-3-031-05814-1_8

DOI: https://doi.org/10.1007/978-3-031-05814-1_8

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-05813-4

Online ISBN: 978-3-031-05814-1

eBook Packages: Computer Science, Computer Science (R0)