Abstract

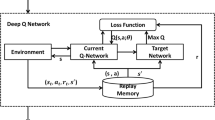

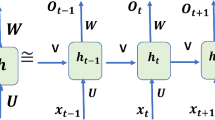

The stock market plays a vital role in the overall financial market, and financial trading has been widely researched over the years. However, obtaining an optimal strategy in an environment as complex and dynamic as the stock market remains challenging. This article addresses a stochastic control problem: optimizing the management of a trading system in order to obtain an optimal trading strategy that makes profitable decisions by interacting directly with the environment. To do this, we explore deep Reinforcement Learning, which differs from traditional Machine Learning by combining the tasks of predicting stock behavior and determining the optimal course of action in a single unit, thereby aligning the Machine Learning problem with the investor's objectives. As a method, we propose the Deep Q-Network (DQN) algorithm, which combines Q-Learning with Deep Learning. Experiments show that the proposed approach can learn a behavior that solves a stock trading problem, producing positive results in a complex, dynamic environment.
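The DQN idea named in the abstract can be illustrated with a small sketch. It is not the authors' implementation; it only shows the standard DQN ingredients (epsilon-greedy exploration, an experience-replay buffer, and a periodically synchronised target network used to compute the Q-learning target r + γ·max Q_target(s', a')) on a toy trading task. Everything specific here is a hypothetical assumption: the state (the last three returns), the two actions (stay flat or hold one share long), the reward (return earned while long, no transaction costs), the hyperparameters, and the names `make_state`, `LinearQ` and `train_dqn`. A linear Q-function stands in for the deep network so the example runs on the Python standard library alone.

```python
import random
from collections import deque

WINDOW = 3       # hypothetical: number of past returns that form the state
GAMMA = 0.95     # discount factor (assumed value)
LR = 0.01        # learning rate (assumed value)
N_ACTIONS = 2    # hypothetical action set: 0 = stay flat, 1 = hold one share long


def make_state(returns, t):
    """State at step t: the WINDOW returns observed before t (assumed feature set)."""
    return returns[t - WINDOW:t]


class LinearQ:
    """Q(s, a) = w_a . s + b_a; a linear stand-in for the deep network of a real DQN."""

    def __init__(self, n_features):
        self.w = [[0.0] * n_features for _ in range(N_ACTIONS)]
        self.b = [0.0] * N_ACTIONS

    def q_values(self, s):
        return [sum(wi * si for wi, si in zip(self.w[a], s)) + self.b[a]
                for a in range(N_ACTIONS)]

    def copy_from(self, other):
        self.w = [row[:] for row in other.w]
        self.b = other.b[:]


def train_dqn(prices, episodes=50, batch_size=16, sync_every=20, eps=0.1, seed=0):
    """Hypothetical DQN-style training loop on a single price series."""
    rng = random.Random(seed)
    returns = [(p1 - p0) / p0 for p0, p1 in zip(prices, prices[1:])]
    online, target = LinearQ(WINDOW), LinearQ(WINDOW)
    target.copy_from(online)
    replay = deque(maxlen=1000)  # experience replay buffer of (s, a, r, s_next)
    steps = 0
    for _ in range(episodes):
        for t in range(WINDOW, len(returns)):
            s = make_state(returns, t)
            # Epsilon-greedy action selection with the online network.
            if rng.random() < eps:
                a = rng.randrange(N_ACTIONS)
            else:
                q = online.q_values(s)
                a = max(range(N_ACTIONS), key=lambda i: q[i])
            r = a * returns[t]  # assumed reward: return earned while long, no costs
            done = t == len(returns) - 1
            replay.append((s, a, r, None if done else make_state(returns, t + 1)))

            if len(replay) >= batch_size:
                # Average the gradient of 0.5 * TD_error**2 over a random mini-batch
                # (semi-gradient: the target y is treated as a constant).
                grad_w = [[0.0] * WINDOW for _ in range(N_ACTIONS)]
                grad_b = [0.0] * N_ACTIONS
                for sb, ab, rb, sn in rng.sample(list(replay), batch_size):
                    # Q-learning target computed with the frozen target network.
                    y = rb if sn is None else rb + GAMMA * max(target.q_values(sn))
                    err = online.q_values(sb)[ab] - y
                    for i, si in enumerate(sb):
                        grad_w[ab][i] += err * si / batch_size
                    grad_b[ab] += err / batch_size
                for ai in range(N_ACTIONS):
                    for i in range(WINDOW):
                        online.w[ai][i] -= LR * grad_w[ai][i]
                    online.b[ai] -= LR * grad_b[ai]

            steps += 1
            if steps % sync_every == 0:
                target.copy_from(online)  # periodic target-network synchronisation
    return online


# Usage on a synthetic, steadily rising price series (made-up data).
prices = [100 * 1.01 ** i for i in range(40)]
model = train_dqn(prices)
print(model.q_values([0.01, 0.01, 0.01]))
```

On this rising synthetic series the learned Q-value for holding the long position ends up above the value for staying flat, and on a steadily falling series the ordering reverses. Replacing `LinearQ` with a neural network and the hand-written gradient step with an autodiff optimiser would give the usual deep variant; the replay buffer and target network are what distinguish DQN from plain online Q-learning.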

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Khemlichi, F., Chougrad, H., Idrissi Khamlichi, Y., El Boushaki, A., El Haj Ben Ali, S. (2022). A Stock Trading Strategy Based on Deep Reinforcement Learning. In: Kacprzyk, J., Balas, V.E., Ezziyyani, M. (eds) Advanced Intelligent Systems for Sustainable Development (AI2SD’2020). AI2SD 2020. Advances in Intelligent Systems and Computing, vol 1418. Springer, Cham. https://doi.org/10.1007/978-3-030-90639-9_74

DOI: https://doi.org/10.1007/978-3-030-90639-9_74

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-90638-2

Online ISBN: 978-3-030-90639-9

eBook Packages: Intelligent Technologies and Robotics; Intelligent Technologies and Robotics (R0)