Abstract

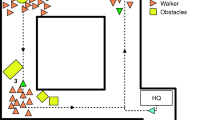

A crowdsourced experiment explored how the distance and type of the approaching vehicle, traffic density, and visual clutter affect pedestrians’ attention distribution. A total of 966 participants viewed 107 images of diverse traffic scenes for durations between 100 and 4000 ms. Eye-gaze data were collected using the TurkEyes method, in which a codechart is shown briefly after each image and participants are asked to type the code they saw last. The results indicate that automated vehicles were glanced at more often than manual vehicles. They also suggest that measuring eye gaze without an eye tracker is promising.
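
The codechart step can be illustrated with a minimal sketch. It assumes a regular grid of unique three-character codes and treats the position of the typed code as the gaze estimate. The grid spacing, the code format, and the names `make_codechart` and `gaze_from_response` are hypothetical choices made for illustration and are not taken from the TurkEyes implementation or from this study.

```python
import random
import string


def make_codechart(width, height, cell=100, seed=0):
    """Return {code: (x, y)} with one unique code per grid cell (assumed layout)."""
    rng = random.Random(seed)
    # Assumed code format: one letter followed by two digits, e.g. "K07".
    pool = [f"{letter}{n:02d}" for letter in string.ascii_uppercase for n in range(100)]
    cols, rows = width // cell, height // cell
    # Sample without replacement so every code appears at most once.
    codes = rng.sample(pool, cols * rows)
    chart = {}
    for i, code in enumerate(codes):
        row, col = divmod(i, cols)
        # Store the centre of the cell as the position of the code.
        chart[code] = (col * cell + cell // 2, row * cell + cell // 2)
    return chart


def gaze_from_response(chart, typed):
    """Map a typed code to an (x, y) gaze estimate, or None if it is not on the chart."""
    return chart.get(typed.strip().upper())


if __name__ == "__main__":
    chart = make_codechart(1280, 720)
    some_code = next(iter(chart))
    print(some_code, gaze_from_response(chart, some_code))
```

Returning `None` for a code that is not on the chart gives a simple way to discard invalid responses before aggregating gaze positions across participants.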

References

Shinar, D.: Traffic Safety and Human Behavior. Emerald Group Publishing, UK (2017)

Lappi, O., Rinkkala, P., Pekkanen, J.: Systematic observation of an expert driver’s gaze strategy—an on-road case study. Front. Psychol. 8, 620 (2017)

Deng, T., Yang, K., Li, Y., Yan, H.: Where does the driver look? top-down-based saliency detection in a traffic driving environment. IEEE Trans. Intell. Transp. Syst. 17, 2051–2062 (2016)

Katsuki, F., Constantinidis, C.: Bottom-up and top-down attention: different processes and overlapping neural systems. Neuroscientist 20, 509–521 (2014)

Connor, C.E., Egeth, H.E., Yantis, S.: Visual attention: bottom-up versus top-down. Curr. Biol. 14, R850–R852 (2004)

National Highway Traffic Safety Administration: Pedestrian Safety (2018)

DaSilva, M.P., Smith, J.D., Najm, W.G.: Analysis of Pedestrian Crashes. Technical report DOT-VNTSC-NHTSA-02–02. National Highway Traffic Safety Administration (2003)

Stelling, A., Hagenzieker, M.P. Afleiding in het Verkeer: Een Overzicht van de Literatuur. SWOV Institute for Road Safety Research (2012)

Emo, B.: Seeing the axial line: evidence from wayfinding experiments. Behav. Sci. 4, 167–180 (2014)

Foulsham, T., Walker, E., Kingstone, A.: The where, what and when of gaze allocation in the lab and the natural environment. Vis. Res. 51, 1920–1931 (2011)

De Lavalette, B.C., Tijus, C., Poitrenaud, S., Leproux, C., Bergeron, J., Thouez, J.P.: Pedestrian crossing decision-making: a situational and behavioral approach. Saf. Sci. 47, 1248–1253 (2009)

Lévêque, L., Ranchet, M., Deniel, J., Bornard, J.C., Bellet, T.: Where do pedestrians look when crossing? a state of the art of the eye-tracking studies. IEEE Access 8, 164833–164843 (2020)

Rasouli, A., Tsotsos, J.K.: Autonomous vehicles that interact with pedestrians: a survey of theory and practice. IEEE Trans. Intell. Transport. Sys. 21, 900–918 (2019)

Tapiro, H., Meir, A., Parmet, Y., Oron-Gilad, T.: Visual search strategies of child-pedestrians in road crossing tasks. In: De Waard, D., Brookhuis, K., Wiczorek, R., Di Nocera, F., Brouwer, R., Barham, P., Weikert, C., Kluge, A., Gerbino, W., Toffetti, A. (eds.) Proceedings of the Human Factors and Ergonomics Society Europe Chapter 2013 Annual Conference (2014)

Geruschat, D.R., Hassan, S.E., Turano, K.A.: Gaze behavior while crossing complex intersections. Optom. Vis. Sci. 80, 515–528 (2003)

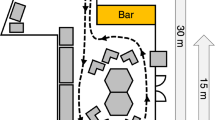

De Winter, J., Bazilinskyy, P., Wesdorp, D., De Vlam, V., Hopmans, B., Visscher, J., Dodou, D.: How do pedestrians distribute their visual attention when walking through a parking garage? an eye-tracking study. Ergon. 64, 793–805 (2021)

Fosco, C., Newman, A., Sukhum, P., Zhang, Y. B., Oliva, A., Bylinskii, Z.: How Many Glances? Modeling Multi-Duration Saliency. In: Workshop on Shared Visual Representations in Human and Machine Intelligence at NeurIPS (2019)

Waskom, M.: seaborn.kdeplot-seaborn 0.11.1 documentation (2020). https://seaborn.pydata.org/generated/seaborn.kdeplot.html

Tawari, A., Kang, B.: A computational framework for driver’s visual attention using a fully convolutional architecture. In: 2017 IEEE Intelligent Vehicles Symposium (IV), pp. 887–894. IEEE Press, New York (2017)

Bazilinskyy, P., Kyriakidis, M., Dodou, D., De. Winter, J.: When will most cars be able to drive fully automatically? projections of 18,970 survey respondents. Transp. Res. F: Traffic Psychol. Beh. 64, 184–195 (2019)

Bazilinskyy, P., De. Winter, J.: Crowdsourced measurement of reaction times to audiovisual stimuli with various degrees of asynchrony. Hum. Factors 60, 1192–1206 (2018)

Dong, W., et al.: Comparing pedestrians’ gaze behavior in desktop and in real environments. Cartogr. Geogr. Inform. Sci. 47, 432–451 (2020)

Acknowledgement

This research is supported by grant 016.Vidi.178.047 (“How should automated vehicles communicate with other road users?”), which is financed by the Netherlands Organisation for Scientific Research (NWO).

© 2021 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Bazilinskyy, P., Dodou, D., De Winter, J.C.F. (2021). Visual Attention of Pedestrians in Traffic Scenes: A Crowdsourcing Experiment. In: Stanton, N. (eds) Advances in Human Aspects of Transportation. AHFE 2021. Lecture Notes in Networks and Systems, vol 270. Springer, Cham. https://doi.org/10.1007/978-3-030-80012-3_18

DOI: https://doi.org/10.1007/978-3-030-80012-3_18

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-80011-6

Online ISBN: 978-3-030-80012-3

eBook Packages: Engineering, Engineering (R0)