Abstract

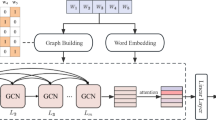

Text classification is one of the most important problems in natural language processing. Traditional text classification methods fail to capture many useful features, whereas deep learning models have proven able to extract features from data effectively. In this paper, we propose a deep graph convolutional network model that constructs a graph based on words and documents. We construct a new text graph based on the relevance between words and the relationship between words and documents in order to capture information from both effectively. To obtain sufficiently rich representations, we propose a deep graph residual learning (DGRL) method, which reduces the risk of vanishing gradients. Experimental results demonstrate the effectiveness of the proposed model on various text datasets.
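The idea of residual learning on a word-document graph can be illustrated with a minimal sketch. The abstract does not give the exact layer formulation, so the code below assumes the common symmetric-normalized propagation rule with an identity skip connection, ReLU(Â H W) + H. The toy graph, its edge weights, the hidden size, and all function names are hypothetical choices made for illustration, and plain Python lists are used to keep the example dependency-free.

```python
import math
import random


def matmul(a, b):
    """Multiply two dense matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def normalize_adjacency(adj):
    """Return D^{-1/2} (A + I) D^{-1/2} for a symmetric adjacency matrix."""
    n = len(adj)
    # Add self-loops so every node keeps part of its own representation.
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    inv_sqrt_deg = [1.0 / math.sqrt(sum(row)) for row in a_hat]
    return [[inv_sqrt_deg[i] * a_hat[i][j] * inv_sqrt_deg[j] for j in range(n)]
            for i in range(n)]


def relu(m):
    """Element-wise ReLU."""
    return [[max(0.0, v) for v in row] for row in m]


def residual_gcn_layer(a_norm, h, w):
    """One graph convolution with an identity skip: ReLU(A_norm H W) + H.

    The skip connection requires W to be square (hidden x hidden).
    """
    conv = relu(matmul(matmul(a_norm, h), w))
    return [[c + x for c, x in zip(conv_row, h_row)] for conv_row, h_row in zip(conv, h)]


# Toy heterogeneous graph: nodes 0-1 are documents, nodes 2-4 are words.
# Edge weights are made-up placeholders for word-document and word-word relevance.
adj = [
    [0.0, 0.0, 0.6, 0.3, 0.0],
    [0.0, 0.0, 0.0, 0.4, 0.7],
    [0.6, 0.0, 0.0, 0.2, 0.0],
    [0.3, 0.4, 0.2, 0.0, 0.1],
    [0.0, 0.7, 0.0, 0.1, 0.0],
]

rng = random.Random(0)
hidden = 4  # hypothetical hidden size
features = [[rng.uniform(-1, 1) for _ in range(hidden)] for _ in adj]
a_norm = normalize_adjacency(adj)

# Stack several residual layers; each layer adds a correction to its input
# instead of replacing it, which gives gradients a direct path to earlier layers.
h = features
for _ in range(8):
    w = [[rng.gauss(0, 0.1) for _ in range(hidden)] for _ in range(hidden)]
    h = residual_gcn_layer(a_norm, h, w)

print(len(h), len(h[0]))  # 5 4
```

With all-zero weights a residual layer returns its input unchanged, which is the property that lets a deep stack fall back to a shallower one. A trained model would learn the weight matrices and add a classification layer over the document nodes; both are omitted here.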

Acknowledgments

This work was supported by the Natural Science Foundation of China (No: 81701780 and 61672177); the Project of Guangxi Science and Technology (No: GuiKeAD17195062, GuiKeAD19110133 and GuiKeAD20159041); the Guangxi Collaborative Innovation Center of Multi-Source Information Integration and Intelligent Processing; the Research Fund of Guangxi Key Lab of Multi-source Information Mining and Security (No: 20-A-01-01); and the Innovation Project of Guangxi Graduate Education (No: JXXYYJSCXXM-012 and JXXYYJSCXXM-011).

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Chen, B., Lu, G., Peng, B., Zhang, W. (2020). DGRL: Text Classification with Deep Graph Residual Learning. In: Yang, X., Wang, CD., Islam, M.S., Zhang, Z. (eds) Advanced Data Mining and Applications. ADMA 2020. Lecture Notes in Computer Science, vol 12447. Springer, Cham. https://doi.org/10.1007/978-3-030-65390-3_7

DOI: https://doi.org/10.1007/978-3-030-65390-3_7

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-65389-7

Online ISBN: 978-3-030-65390-3

eBook Packages: Computer Science, Computer Science (R0)