Abstract

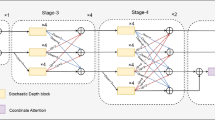

Human pose estimation (HPE) is a key building block for developing AI-based context-aware systems inside the operating room (OR). However, the 24/7 use of images from cameras mounted on the OR ceiling can raise privacy concerns, even when these are depth images captured by RGB-D sensors. Being able to rely solely on low-resolution, privacy-preserving images would address these concerns and help scale up the computer-assisted approaches that depend on such data to a larger number of ORs. In this paper, we introduce the problem of HPE on low-resolution depth images and propose an end-to-end solution that integrates a multi-scale super-resolution network with a 2D human pose estimation network. By exploiting intermediate feature maps generated at different super-resolution scales, our approach achieves body pose results on low-resolution images (of size 64 × 48) that are on par with those of an approach trained and tested on full-resolution images (of size 640 × 480).
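Going from 640 × 480 to 64 × 48 is a 10× reduction per side, or 100× fewer pixels. The sketch below is a hedged, pure-Python illustration of two building blocks under stated assumptions, not the authors' implementation. `downsample_mean` simulates a low-resolution depth input by averaging non-overlapping 10 × 10 blocks; the paper's actual downsampling procedure is not described here. `pixel_shuffle` implements only the periodic rearrangement step of the sub-pixel convolution of Shi et al. (2016), one plausible upsampling operator for a super-resolution network, not necessarily the one used in this work. Both function names are hypothetical. The learned convolutions, the multi-scale feature maps and the pose network are all omitted.

```python
from typing import List

Image = List[List[float]]  # row-major 2D depth map: H rows of W values


def downsample_mean(img: Image, factor: int) -> Image:
    """Hypothetical helper: average non-overlapping factor x factor blocks.

    With factor=10, a 480-row x 640-column depth map becomes 48 x 64.
    """
    h, w = len(img), len(img[0])
    if h % factor or w % factor:
        raise ValueError("image size must be divisible by factor")
    out: Image = []
    for i in range(0, h, factor):
        row = []
        for j in range(0, w, factor):
            block = [img[i + di][j + dj] for di in range(factor) for dj in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def pixel_shuffle(channels: List[Image], r: int) -> Image:
    """Hypothetical helper: rearrange r*r maps of size H x W into one (H*r) x (W*r) map.

    Output pixel (y, x) is read from channel (y % r) * r + (x % r) at
    low-resolution position (y // r, x // r), i.e. the periodic shuffling
    step of sub-pixel convolution (the preceding learned conv is omitted).
    """
    if len(channels) != r * r:
        raise ValueError("need exactly r*r channels")
    h, w = len(channels[0]), len(channels[0][0])
    return [
        [channels[(y % r) * r + (x % r)][y // r][x // r] for x in range(w * r)]
        for y in range(h * r)
    ]


if __name__ == "__main__":
    # Synthetic 480 x 640 "depth" ramp standing in for a full-resolution frame.
    depth = [[float(100 * y + x) for x in range(640)] for y in range(480)]
    low = downsample_mean(depth, 10)
    print(len(low), len(low[0]))  # 48 64
```

Block averaging is used here only because it is simple and makes the 10× factor explicit. In a real network the r*r input maps of `pixel_shuffle` would be produced by a learned convolution on low-resolution features.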

Acknowledgements

This work was supported by French state funds managed by the ANR within the Investissements d’Avenir program under references ANR-16-CE33-0009 (DeepSurg), ANR-11-LABX-0004 (Labex CAMI) and ANR-10-IDEX-0002-02 (IdEx Unistra). The authors would also like to thank the members of the Interventional Radiology Department at University Hospital of Strasbourg for their help in generating the dataset.

Copyright information

© 2019 Springer Nature Switzerland AG

About this paper

Cite this paper

Srivastav, V., Gangi, A., Padoy, N. (2019). Human Pose Estimation on Privacy-Preserving Low-Resolution Depth Images. In: Shen, D., et al. Medical Image Computing and Computer Assisted Intervention – MICCAI 2019. MICCAI 2019. Lecture Notes in Computer Science, vol 11768. Springer, Cham. https://doi.org/10.1007/978-3-030-32254-0_65

DOI: https://doi.org/10.1007/978-3-030-32254-0_65

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-32253-3

Online ISBN: 978-3-030-32254-0

eBook Packages: Computer Science, Computer Science (R0)