Abstract

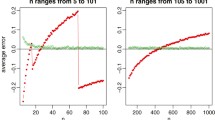

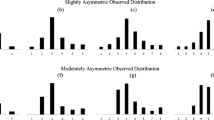

In truncated polynomial spline or B-spline models where the covariates are measured with error, a fully Bayesian approach to model fitting requires the covariates and model parameters to be sampled at every Markov chain Monte Carlo iteration. Sampling the unobserved covariates poses a major computational problem, and Gibbs sampling is usually not possible. This forces the practitioner to use a Metropolis–Hastings step, which might suffer from unacceptable performance due to poor mixing and might require careful tuning. In this article we show that, for truncated polynomial spline or B-spline models of degree one, the complete conditional distribution of the covariates measured with error is available explicitly as a mixture of double-truncated normals, thereby enabling a Gibbs sampling scheme. We demonstrate via a simulation study that our technique performs favorably in terms of computational efficiency and statistical performance. Our results indicate increases of up to 62% and 54% in mean integrated squared error efficiency over existing alternatives when using truncated polynomial splines and B-splines, respectively. Furthermore, there is evidence that the gain in efficiency increases with the measurement error variance, indicating that the proposed method is a particularly valuable tool for challenging applications with high measurement error. We conclude with a demonstration on a nutritional epidemiology data set from the NIH-AARP study and by pointing out some possible extensions of the current work.

References

Berry, S.M., Carroll, R.J., Ruppert, D.: Bayesian smoothing and regression splines for measurement error problems. J. Am. Stat. Assoc. 97, 160–169 (2002)

Besag, J.: On the statistical analysis of dirty pictures. J. R. Stat. Soc. Ser. B 48, 259–302 (1986)

Carroll, R.J., Küchenhoff, H., Lombard, F., Stefanski, L.A.: Asymptotics for the SIMEX estimator in nonlinear measurement error models. J. Am. Stat. Assoc. 91, 242–250 (1996)

Carroll, R.J., Maca, J.D., Ruppert, D.: Nonparametric regression in the presence of measurement error. Biometrika 86, 541–554 (1999)

Carroll, R.J., Ruppert, D., Crainiceanu, C.M., Tosteson, T.D., Karagas, M.R.: Nonlinear and nonparametric regression and instrumental variables. J. Am. Stat. Assoc. 99, 736–750 (2004)

Chopin, N.: Fast simulation of truncated Gaussian distributions. Stat. Comput. 21, 275–288 (2011)

Cook, J.R., Stefanski, L.A.: Simulation-extrapolation estimation in parametric measurement error models. J. Am. Stat. Assoc. 89, 1314–1328 (1994)

Crainiceanu, C.M., Ruppert, D., Wand, M.P.: Bayesian analysis for penalized spline regression using WinBUGS. J. Stat. Softw. 14, 1–24 (2005)

Eilers, P.H.C., Marx, B.D.: Flexible smoothing with B-splines and penalties. Stat. Sci. 11, 89–121 (1996)

Ganguli, B., Staudenmayer, J., Wand, M.: Additive models with predictors subject to measurement error. Aust. N. Z. J. Stat. 47, 193–202 (2005)

Gelfand, A.E., Smith, A.F.M.: Sampling-based approaches to calculating marginal densities. J. Am. Stat. Assoc. 85, 398–409 (1990)

Hastie, T.J., Tibshirani, R.J.: Generalized Additive Models. CRC Press, Boca Raton (1990)

Marley, J.K., Wand, M.P.: Non-standard semiparametric regression via BRugs. J. Stat. Softw. 37, 1–30 (2010)

Pham, T.H., Ormerod, J.T., Wand, M.P.: Mean field variational Bayesian inference for nonparametric regression with measurement error. Comput. Stat. Data Anal. 68, 375–387 (2013)

Robert, C.: Simulation of truncated normal variables. Stat. Comput. 5, 121–125 (1995)

Roberts, G.O., Gelman, A., Gilks, W.R.: Weak convergence and optimal scaling of random walk Metropolis algorithms. Ann. Appl. Probab. 7, 110–120 (1997)

Roberts, G.O., Rosenthal, J.S.: Optimal scaling for various Metropolis–Hastings algorithms. Stat. Sci. 16, 351–367 (2001)

Ruppert, D.: Selecting the number of knots for penalized splines. J. Comput. Graph. Stat. 11, 735–757 (2002)

Ruppert, D., Carroll, R.J.: Spatially-adaptive penalties for spline fitting. Aust. N. Z. J. Stat. 42, 205–223 (2000)

Schatzkin, A., Subar, A.F., Thompson, F.E., Harlan, L.C., Tangrea, J., Hollenbeck, A.R., Hurwitz, P.E., Coyle, L., Schussler, N., Michaud, D.S., Freedman, L.S., Brown, C.C., Midthune, D., Kipnis, V.: Design and serendipity in establishing a large cohort with wide dietary intake distributions: the National Institutes of Health–American Association of Retired Persons Diet and Health study. Am. J. Epidemiol. 154, 1119–1125 (2001)

Sinha, S., Mallick, B.K., Kipnis, V., Carroll, R.J.: Semiparametric Bayesian analysis of nutritional epidemiology data in the presence of measurement error. Biometrics 66, 444–454 (2010)

Stefanski, L.A., Cook, J.R.: Simulation-extrapolation: the measurement error jackknife. J. Am. Stat. Assoc. 90, 1247–1256 (1995)

Thompson, F.E., Subar, A.F.: Dietary assessment methodology. In: Coulston, A.M., Rock, C.L., Monsen, E.R. (eds.) Nutrition in the Prevention and Treatment of Disease. Academic Press, San Diego, CA (2001)

Thomson, C.A., Giuliano, A., Rock, C.L., Ritenbaugh, C.K., Flatt, S.W., Faerber, S., Newman, V., Caan, B., Graver, E., Hartz, V., Whitacre, R., Parker, F., Pierce, J.P., Marshall, J.R.: Measuring dietary change in a diet intervention trial: comparing food frequency questionnaire and dietary recalls. Am. J. Epidemiol. 157, 754–762 (2003)

Wang, B., Titterington, D.M.: Lack of consistency of mean field and variational Bayes approximations for state space models. Neural Process. Lett. 20, 151–170 (2004)

Acknowledgments

Carroll’s research was supported by Grant R37-CA057030 from the National Cancer Institute.

Appendix A: Proofs of Propositions 1 and 2

Recall that \(\Theta \) denotes the \((K +2)\) parameter vector \((\beta _0, \beta _1, \theta _1, \ldots , \theta _K)^T\). The log likelihood of the complete data is given by

where “constant” is a collection of all terms that are independent of data as well as model parameters. Collecting the terms containing X in \({{\mathcal {L}}}\), except for an irrelevant constant,

We also have

and

Thus in \({{\mathcal {L}}}_x\) the terms corresponding to \(X_i\) are

Recall that \(W_{i\bullet } = \sum _{j=1}^{m_i} W_{ij}\). In the next two subsections, we treat the special cases of polynomial splines of degree 1 and B-splines of degree 1 respectively.
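The displayed equations of this appendix did not survive extraction. As a hedged illustration only, suppose the standard classical measurement error setup: \(Y_i = f(X_i; \Theta ) + \epsilon _i\) with \(\epsilon _i \sim N(0, \sigma _\epsilon ^2)\), replicates \(W_{ij} = X_i + U_{ij}\) with \(U_{ij} \sim N(0, \sigma _u^2)\) for \(j = 1, \ldots , m_i\), and \(X_i \sim N(\mu _x, \sigma _x^2)\). The symbols \(f\), \(\sigma _\epsilon ^2\), \(\sigma _u^2\), \(\mu _x\) and \(\sigma _x^2\) are assumptions introduced here and need not match the article's notation. Under these assumptions, expanding the squares and dropping terms free of \(X_i\), the terms of the log likelihood involving \(X_i\) would take the form

\[
-\frac{\{Y_i - f(X_i; \Theta )\}^2}{2\sigma _\epsilon ^2}
\;-\; \frac{m_i X_i^2 - 2 X_i W_{i\bullet }}{2\sigma _u^2}
\;-\; \frac{X_i^2 - 2\mu _x X_i}{2\sigma _x^2}.
\]

The last two terms are quadratic in \(X_i\); the first is also quadratic in \(X_i\) on any interval where \(f\) is linear, which is what makes the degree-one case tractable.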

A.1 Proof of Proposition 1

In this case, the basis functions are given by Eq. (3). We now establish the distribution of each \(X_i\) given all the other quantities as a mixture of truncated normals. To see this, suppose \(\omega _{L} \le X_i < \omega _{L+1}\) where \(0 \le L \le K\) with the convention that \(\omega _0 = -\infty \), \(\omega _{K+1} = \infty \) and \((X_i - \omega _0)_{+} = X_i\). From Equation (17), when \(\omega _{L} \le X_i < \omega _{L+1}\), we have

and the relevant terms in the log likelihood for \(X_i\) are

Thus, the density function of \(X_i\) is

This shows that the density of \(X_i\) on the interval \([\omega _L, \omega _{L+1}]\) is proportional to a double-truncated normal with mean \(\zeta _{1iL}\zeta _{2iL}\) and variance \(\zeta _{1iL}\), truncated at the interval boundaries. The overall density of \(X_i\) is therefore a mixture of these truncated normals, with one component for each \(L = 0, \ldots , K\), that is, \((K+1)\) components in total. From Eq. (18), we see that the mixing probabilities satisfy \(p_{iL} \propto \left\{ \Phi (b_{iL}) - \Phi (a_{iL})\right\} \exp \left\{ (1/2)\log (\zeta _{1iL}) + \zeta _{3iL} + \zeta _{1iL}\zeta _{2iL}^2/2\right\} \). Since \(\sum _{L=0}^{K} p_{iL} =1\) must hold, all that remains is to find the appropriate normalization for the \(p_{iL}\). By algebra, we see that

Again by algebra, we see that the mixing probabilities then become \(p_{iL}\), as claimed.
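The draw described in this proof can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation. The names \(\zeta _{1iL}\), \(\zeta _{2iL}\), \(\zeta _{3iL}\) come from the text, but their formulas below are assumptions obtained by completing the square under an assumed model (normal errors with variance `sig2_eps`, replicate measurement errors with variance `sig2_u`, and a normal prior `N(mu_x, sig2_x)` on \(X_i\)); all function and argument names are hypothetical. The truncated normal is drawn by simple inverse-CDF sampling, which is adequate for a sketch but can lose accuracy far in the tails.

```python
import math
import random
from statistics import NormalDist

STD = NormalDist()  # standard normal, used for Phi and its inverse


def std_cdf(z):
    """Phi(z), with infinite arguments handled explicitly."""
    if z == math.inf:
        return 1.0
    if z == -math.inf:
        return 0.0
    return STD.cdf(z)


def segment_coeffs(beta0, beta1, theta, knots, L):
    """Intercept c and slope d of the degree-1 truncated power spline
    on [omega_L, omega_{L+1}), where exactly the first L knots are active."""
    c = beta0 - sum(t * w for t, w in zip(theta[:L], knots[:L]))
    d = beta1 + sum(theta[:L])
    return c, d


def sample_x(y, w, beta0, beta1, theta, knots,
             sig2_eps, sig2_u, mu_x, sig2_x, rng=random):
    """One draw of X_i from a mixture of K+1 double-truncated normals.
    ASSUMED model: y = f(x) + eps, w[j] = x + u_j, x ~ N(mu_x, sig2_x)."""
    edges = [-math.inf] + list(knots) + [math.inf]
    log_wts, comps = [], []
    for L in range(len(knots) + 1):
        c, d = segment_coeffs(beta0, beta1, theta, knots, L)
        # Assumed zeta formulas (completing the square in x on segment L).
        zeta1 = 1.0 / (d * d / sig2_eps + len(w) / sig2_u + 1.0 / sig2_x)
        zeta2 = d * (y - c) / sig2_eps + sum(w) / sig2_u + mu_x / sig2_x
        zeta3 = -(y - c) ** 2 / (2.0 * sig2_eps)
        m, s = zeta1 * zeta2, math.sqrt(zeta1)
        pa = std_cdf((edges[L] - m) / s)
        pb = std_cdf((edges[L + 1] - m) / s)
        mass = pb - pa
        # log of {Phi(b) - Phi(a)} exp{(1/2) log zeta1 + zeta3 + zeta1 zeta2^2 / 2}
        log_wts.append(-math.inf if mass <= 0.0 else
                       math.log(mass) + 0.5 * math.log(zeta1)
                       + zeta3 + 0.5 * zeta1 * zeta2 ** 2)
        comps.append((m, s, pa, pb))
    mx = max(log_wts)  # subtract the max before exponentiating, for stability
    probs = [math.exp(lw - mx) for lw in log_wts]
    L = rng.choices(range(len(probs)), weights=probs)[0]
    m, s, pa, pb = comps[L]
    u = pa + (pb - pa) * rng.random()      # uniform on (Phi(a), Phi(b))
    u = min(max(u, 1e-15), 1.0 - 1e-15)    # keep inv_cdf inside (0, 1)
    x = m + s * STD.inv_cdf(u)
    return min(max(x, edges[L]), edges[L + 1])
```

Within a Gibbs sweep, a call such as `sample_x(y_i, w_i, beta0, beta1, theta, knots, ...)` would be made once per observation, with the remaining parameters held at their current values. Working with log weights avoids overflow in the exponential factor; a production sampler would likely replace the inverse-CDF step with a dedicated truncated normal algorithm.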

A.2 Proof of Proposition 2

In this case, the \((K+1)\) basis functions are given by Eqs. (13–15) and \(\beta _0=\beta _1 =0\). We again establish the distribution of each \(X_i\) given all the other quantities as a mixture of truncated normals. The fitted function is

Clearly, if \(X_i< \omega _0\) or \(X_i \ge \omega _{K}\) then \(\sum _{k=1}^{K+1} \theta _k \tilde{B}_k(X_i) =0\). For \(L=0, \ldots , K-1\), suppose \(\omega _L \le X_i < \omega _{L+1}\). Then the relevant terms in the log likelihood for \(X_i\) are

If \(X_i< \omega _0\) or \(X_i \ge \omega _{K}\), then the relevant terms in the log likelihood are

Then, by calculations similar to Appendix A.1, the proof of Proposition 2 is completed.
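To make the B-spline case concrete, the sketch below computes the intercept and slope of the fitted function on each interval. It assumes, since Eqs. (13–15) are not reproduced here, that the degree-one B-spline basis consists of "hat" functions, with the \(k\)-th function peaking at \(\omega _{k-1}\), so that the fit linearly interpolates the coefficients between adjacent knots and is zero outside \([\omega _0, \omega _K)\). The function names are hypothetical, and `theta` is zero-indexed, so `theta[L]` plays the role of \(\theta _{L+1}\).

```python
import math


def hat_segment_coeffs(theta, knots, L):
    """Intercept c and slope d of the fit on [omega_L, omega_{L+1}),
    ASSUMING a hat-function basis: f(knots[k]) = theta[k]."""
    h = knots[L + 1] - knots[L]
    d = (theta[L + 1] - theta[L]) / h
    c = theta[L] - d * knots[L]
    return c, d


def all_segments(theta, knots):
    """List of (lower, upper, c, d) covering the real line:
    two outer intervals where the fit is zero, plus K inner intervals."""
    segs = [(-math.inf, knots[0], 0.0, 0.0)]
    for L in range(len(knots) - 1):
        c, d = hat_segment_coeffs(theta, knots, L)
        segs.append((knots[L], knots[L + 1], c, d))
    segs.append((knots[-1], math.inf, 0.0, 0.0))
    return segs
```

Each `(c, d)` pair describes a linear piece, so completing the square on each interval proceeds as in the truncated polynomial case. With \(K+1\) knots this yields \(K+2\) intervals, and hence \(K+2\) mixture components under the assumption above.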

Cite this article

Bhadra, A., Carroll, R.J. Exact sampling of the unobserved covariates in Bayesian spline models for measurement error problems. Stat Comput 26, 827–840 (2016). https://doi.org/10.1007/s11222-015-9572-7
