Abstract

Recently, performance-driven facial animation has become popular in various entertainment areas, such as games, animated films, and advertising. Because motion capture data from a performer's face is easy to use, the resulting animated faces are far more natural and lifelike. However, when the characteristic features of the live performer and the animated character differ considerably, expression mapping becomes a difficult problem. Much previous research has focused on either facial motion capture or facial animation alone; little attention has been paid to mapping motion capture data onto a 3D face model.

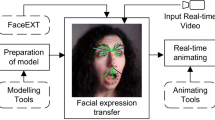

Therefore, we present a new expression mapping approach for performance-driven facial animation. In particular, we consider the online aspect of expression mapping for real-time applications. Our basic idea is to capture the facial motion of a real performer and adapt it to a virtual character in real time. For this purpose, we address three issues: facial expression capture, expression mapping, and facial animation. We first propose a comprehensive solution for real-time facial expression capture that requires no devices such as head-mounted cameras or face-attached markers. Based on an analysis of the captured facial expression, the facial motion can be effectively mapped onto another 3D face model. We present a novel example-based approach for creating facial expressions on the model that mimic those of the face performer. Finally, real-time facial animation is provided with multiple face models, called "facial examples". Each of these examples reflects both a distinct type of facial expression and the designer's insight, serving as a good guideline for animation. The resulting animation preserves the facial expressions of the performer as well as the characteristic features of the target examples.
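The abstract does not say how the facial examples are combined at run time. Purely as an illustration, the sketch below shows one common way to drive a set of designer-made examples from performer measurements: scattered-data interpolation with radial basis functions (RBFs), followed by blending of per-vertex displacements. It assumes that each example pairs a hypothetical source parameter vector measured on the performer (for instance, mouth opening and brow raise) with a target mesh. The Gaussian kernel, the `width` parameter, the function names, and the toy data are all hypothetical, and this is not necessarily the method used in the paper.

```python
import math
from typing import List, Sequence

Vector = List[float]


def gaussian(r: float, width: float) -> float:
    """Gaussian radial basis function phi(r) = exp(-(r / width)^2)."""
    return math.exp(-((r / width) ** 2))


def distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean distance between two parameter vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def solve(matrix: List[Vector], rhs: Vector) -> Vector:
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    # Work on an augmented copy so the caller's data is not modified.
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("singular kernel matrix; try a different width")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - s) / aug[r][r]
    return x


def example_weights(sources: List[Vector], query: Vector, width: float) -> Vector:
    """Cardinal RBF weights: weight i equals 1 at sources[i] and 0 at the others."""
    kernel = [[gaussian(distance(a, b), width) for b in sources] for a in sources]
    phi = [gaussian(distance(query, s), width) for s in sources]
    # The kernel matrix is symmetric, so solving K w = phi(query) yields
    # weights that reproduce each example exactly at its own source point.
    return solve(kernel, phi)


def blend(neutral: List[Vector], examples: List[List[Vector]], weights: Vector) -> List[Vector]:
    """Add the weighted per-vertex displacement of each example to the neutral mesh."""
    result = [v[:] for v in neutral]
    for w, mesh in zip(weights, examples):
        for i, (v, n0) in enumerate(zip(mesh, neutral)):
            for k in range(len(v)):
                result[i][k] += w * (v[k] - n0[k])
    return result


if __name__ == "__main__":
    # Toy data: 2-D performer parameters (mouth opening, brow raise).
    sources = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
    neutral = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]]
    examples = [
        neutral,                              # neutral example
        [[0.0, -1.0, 0.0], [1.0, 0.0, 0.0]],  # "jaw" vertex lowered
        [[0.0, 0.0, 0.0], [1.0, 0.5, 0.0]],   # "brow" vertex raised
    ]
    w = example_weights(sources, [0.5, 0.0], width=1.0)
    print(w, blend(neutral, examples, w))
```

Because the weights are cardinal, a performer who exactly reproduces an example's parameters yields the designer's example mesh unchanged, and intermediate parameters give smoothly varying mixtures. Gaussian RBF weights are not constrained to sum to one or to stay within [0, 1], so a practical system might add a linear term or clamp the weights; the sketch leaves that choice open.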




Copyright information

© 2007 IFIP International Federation for Information Processing

About this paper

Cite this paper

Byun, H.W. (2007). Online Expression Mapping for Performance-Driven Facial Animation. In: Ma, L., Rauterberg, M., Nakatsu, R. (eds) Entertainment Computing – ICEC 2007. ICEC 2007. Lecture Notes in Computer Science, vol 4740. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-74873-1_39


DOI: https://doi.org/10.1007/978-3-540-74873-1_39

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-74872-4

Online ISBN: 978-3-540-74873-1

eBook Packages: Computer Science, Computer Science (R0)