Abstract

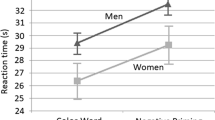

Voices provide a rich source of information that is important for identifying individuals and for social interaction. During search for a face in a crowd, voices often accompany visual information, and they facilitate localization of the sought-after individual. However, it is unclear whether this facilitation occurs primarily because the voice cues the location of the face or because it also increases the salience of the associated face. Here we demonstrate that a voice that provides no location information nonetheless facilitates visual search for an associated face. We trained novel face–voice associations and verified learning using a two-alternative forced-choice task in which participants had to correctly match a presented voice to the associated face. Following training, participants searched for a previously learned target face among other faces while hearing one of the following sounds (localized at the center of the display): a congruent learned voice, an incongruent but familiar voice, an unlearned and unfamiliar voice, or a time-reversed voice. Only the congruent learned voice speeded visual search for the associated face. This result suggests that voices facilitate the visual detection of associated faces, potentially by increasing their visual salience, and that the underlying crossmodal associations can be established through brief training.

Cite this article

Zweig, L.J., Suzuki, S. & Grabowecky, M. Learned face–voice pairings facilitate visual search. Psychon Bull Rev 22, 429–436 (2015). https://doi.org/10.3758/s13423-014-0685-3

DOI: https://doi.org/10.3758/s13423-014-0685-3