Abstract

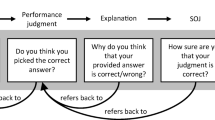

According to the unskilled and unaware effect (Kruger and Dunning 1999), low-performing students tend to overestimate their performance. When metacognitive judgments are differentiated into performance judgments (PJs) and second-order judgments (SOJs), the PJs of low-performing students tend to be inflated, whereas their SOJs are usually lower than those of high-performing students (Händel and Fritzsche 2016; Miller and Geraci 2011). This suggests some level of awareness. The present study investigated whether low performers’ lower SOJs actually indicate metacognitive awareness. We studied SOJs after adequate and inadequate PJs and investigated whether low performers give lower SOJs by default or whether their SOJs differ between adequate and inadequate PJs to a similar extent as those of high performers (which would indicate metacognitive awareness). We addressed this issue by disentangling student and item effects with generalized linear mixed models (GLMMs). Reanalyzing the data of Händel and Fritzsche (2016) from N = 116 students, we found that SOJs depended both on the students who provided them and on the items they referred to. Overall, SOJs depended on the PJs and on the interaction of performance and PJs, but not on performance itself. Separate analyses for students at different performance levels revealed that low-performing students showed less awareness, as indicated by a non-significant interaction of performance and PJs. Thus, it takes mixed models to tell the whole story of low-performing students’ lower SOJs.

Notes

Studies in education usually use multilevel analyses to address the hierarchical structure of students nested in classes, which are in turn nested in schools. In contrast to this purely hierarchical structure, multilevel analyses in the context of SOJs allow for the fact that each single response is simultaneously nested in a student (independently of the accuracy of their PJs, students might differ in how confident they are about their PJs) and in an item (items might differ in how difficult they are to judge).
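The cross-classified structure described in this note can be illustrated as a data-generating process. The following minimal Python sketch is not the authors' analysis; it assumes a logistic model for a dichotomous SOJ (1 = high confidence in the PJ), random intercepts for students and for items, effect-coded (-1/+1) performance and PJ, and a hypothetical item count and hypothetical coefficient values chosen only for illustration.

```python
import math
import random

def inv_logit(x: float) -> float:
    """Convert a value on the logit scale to a probability."""
    return 1.0 / (1.0 + math.exp(-x))

def simulate_sojs(n_students=116, n_items=20, sd_student=1.0, sd_item=0.5,
                  b0=0.0, b_pj=0.5, b_perf=0.0, b_inter=0.8, seed=1):
    """Simulate binary SOJs for a fully crossed student x item design.

    All parameter values (including n_items) are hypothetical.
    Returns rows of (student, item, perf, pj, soj).
    """
    rng = random.Random(seed)
    # One random intercept per student and one per item; every response
    # receives both, so the two random effects are crossed, not nested.
    u_student = [rng.gauss(0.0, sd_student) for _ in range(n_students)]
    v_item = [rng.gauss(0.0, sd_item) for _ in range(n_items)]
    rows = []
    for s in range(n_students):
        for i in range(n_items):
            perf = rng.choice((-1, 1))  # effect-coded: +1 = item solved
            pj = rng.choice((-1, 1))    # effect-coded: +1 = judged as solved
            # With effect coding, perf * pj is +1 for an adequate PJ
            # and -1 for an inadequate PJ.
            eta = (b0 + b_pj * pj + b_perf * perf + b_inter * perf * pj
                   + u_student[s] + v_item[i])
            soj = 1 if rng.random() < inv_logit(eta) else 0
            rows.append((s, i, perf, pj, soj))
    return rows

rows = simulate_sojs()
print(len(rows))  # 2320 responses: 116 students x 20 items
```

Because every simulated student responds to every item, each response belongs to one student and one item at the same time, which is why the two random effects are crossed rather than nested. In R, a model of this form could be fitted with lme4 (Bates et al. 2015) using a formula such as `soj ~ perf * pj + (1 | student) + (1 | item)` with `family = binomial`; this illustrates the model class and does not reproduce the reported analysis.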

To check whether students really differentiate between PJs and SOJs rather than merely confirming their PJ with the SOJ, we conducted a validation study beforehand. Data from this study showed that students can indeed differentiate between PJs and SOJs (Händel and Fritzsche 2016).

In an earlier study, the smiley scale yielded the most appropriate results compared to verbally or numerically labeled scales (Händel and Fritzsche 2015). Nevertheless, because responses on smiley scales may be influenced by emotions (Jäger and Bortz 2004), future studies with higher education students might consider using verbally labeled scales.

Furthermore, traditional SDT measures are usually applied to measure discrimination on a fixed dataset that is the same for all participants (Higham and Gerrard 2005), not to investigate how students judge their own performance, where the number of items in each SDT category differs across students.
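To illustrate why the number of items per SDT category varies across students when SOJs are classified by the adequacy of the preceding PJ, the following Python sketch tabulates such outcomes for single students. The labeling scheme (a high SOJ after an adequate PJ counted as a hit, a high SOJ after an inadequate PJ as a false alarm) and the example responses are hypothetical illustrations, not data or definitions from the study.

```python
from collections import Counter

def type2_counts(responses):
    """Tabulate SDT outcomes for one student.

    responses: iterable of (pj_adequate, soj_high) boolean pairs, one per item.
    Hypothetical scheme: high SOJ after an adequate PJ = hit,
    high SOJ after an inadequate PJ = false alarm.
    """
    counts = Counter()
    for adequate, high in responses:
        if adequate:
            counts["hit" if high else "miss"] += 1
        else:
            counts["false_alarm" if high else "correct_rejection"] += 1
    return counts

def type2_rates(counts):
    """Return cell sizes and hit/false-alarm rates; a rate is None when its
    denominator is empty (the student made no PJs of that kind)."""
    n_adequate = counts["hit"] + counts["miss"]
    n_inadequate = counts["false_alarm"] + counts["correct_rejection"]
    hit_rate = counts["hit"] / n_adequate if n_adequate else None
    fa_rate = counts["false_alarm"] / n_inadequate if n_inadequate else None
    return n_adequate, n_inadequate, hit_rate, fa_rate

# Two hypothetical students answering the same 10 items.
student_a = [(True, True)] * 8 + [(True, False)] + [(False, True)]
student_b = ([(True, True)] * 3 + [(True, False)] * 2
             + [(False, True)] + [(False, False)] * 4)
print(type2_rates(type2_counts(student_a)))
print(type2_rates(type2_counts(student_b)))
```

Although both hypothetical students answer the same items, student A has nine adequate and only one inadequate PJ, so that student's false-alarm rate rests on a single response, whereas student B's rates rest on five responses each. A student without any inadequate PJ would have no false-alarm rate at all.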

At first glance, the sample sizes of the two quartiles may not seem very large. However, because the analyses are conducted at the response level, the number of responses in the bottom and in the top quartile can be considered an acceptable sample size for GLMMs (Maas and Hox 2005).

References

Al-Harthy, I. S., Was, C. A., & Hassan, A. S. (2015). Poor performers are poor predictors of performance and they know it: Can they improve their prediction accuracy? Journal of Global Research in Education and Social Science, 4, 93–100.

Barrett, A. B., Dienes, Z., & Seth, A. K. (2013). Measures of metacognition on signal-detection theoretic models. Psychological Methods, 18(4), 535–552. https://doi.org/10.1037/a0033268.

Bates, D., Mächler, M., Bolker, B. M., & Walker, S. C. (2015). Fitting linear mixed-effects models using lme4. Journal of Statistical Software, 67(1), 1–48. https://doi.org/10.18637/jss.v067.i01.

Boekaerts, M. (1997). Self-regulated learning: A new concept embraced by researchers, policy makers, educators, teachers, and students. Learning and Instruction, 7, 161–186.

Budescu, D. V., & Johnson, T. R. (2011). A model-based approach for the analysis of the calibration of probability judgments. Judgment and Decision Making, 6(8), 857–869.

Buratti, S., & Allwood, C. M. (2012). The accuracy of meta-metacognitive judgments: Regulating the realism of confidence. Cognitive Processing, 13, 243–253. https://doi.org/10.1007/s10339-012-0440-5.

Buratti, S., & Allwood, C. M. (2015). Regulating metacognitive processes - Support for a meta-metacognitive ability. In A. Pena-Ayala (Ed.), Metacognition: Fundaments, applications, and trends. A profile of the current state-of-the-art (1 ed., Vol. 76, pp. 17–38). Switzerland: Springer International Publishing.

Buratti, S., Allwood, C. M., & Kleitman, S. (2013). First- and second-order metacognitive judgments of semantic memory reports: The influence of personality traits and cognitive styles. Metacognition and Learning, 8(1), 79–102. https://doi.org/10.1007/s11409-013-9096-5.

Burson, K. A., Larrick, R. P., & Klayman, J. (2006). Skilled or unskilled, but still unaware of it: How perceptions of difficulty drive miscalibration in relative comparisons. Journal of Personality and Social Psychology, 90(1), 60–77. https://doi.org/10.1037/0022-3514.90.1.60.

R Development Core Team. (2012). R: A language and environment for statistical computing. Vienna: R Foundation for Statistical Computing. Retrieved from http://www.R-project.org/.

Dinsmore, D. L., & Parkinson, M. M. (2013). What are confidence judgments made of? Students' explanations for their confidence ratings and what that means for calibration. Learning and Instruction, 24, 4–14. https://doi.org/10.1016/j.learninstruc.2012.06.001.

Dunlosky, J., Serra, M. J., Matvey, G., & Rawson, K. A. (2005). Second-order judgments about judgments of learning. The Journal of General Psychology, 132, 335–346.

Ehrlinger, J., Johnson, K., Banner, M., Dunning, D., & Kruger, J. (2008). Why the unskilled are unaware: Further explorations of (absent) self-insight among the incompetent. Organizational Behavior and Human Decision Processes, 105(1), 98–121. https://doi.org/10.1016/j.obhdp.2007.05.002.

Freund, P. A., & Kasten, N. (2012). How smart do you think you are? A meta-analysis on the validity of self-estimates of cognitive ability. Psychological Bulletin, 138(2), 296–321. https://doi.org/10.1037/a0026556.

Fritzsche, E. S., Kröner, S., Dresel, M., Kopp, B., & Martschinke, S. (2012). Confidence scores as measures of metacognitive monitoring in primary students? (limited) validity in predicting academic achievement and the mediating role of self-concept. [Antwortsicherheiten als Maß für die metakognitive Überwachung bei Grundschulkindern? (Eingeschränkte) Validität bei der Vorhersage schulischer Leistungen und die mediierende Rolle des Selbstkonzepts]. Journal for Educational Research Online, 4(2), 120–142.

Gigerenzer, G., Hoffrage, U., & Kleinbölting, H. (1991). Probabilistic mental models: A Brunswikian theory of confidence. Psychological Review, 98, 506–528. https://doi.org/10.1037/0033-295X.98.4.506.

Hacker, D. J., Bol, L., & Bahbahani, K. (2008). Explaining calibration accuracy in classroom contexts: The effects of incentives, reflection, and explanatory style. Metacognition and Learning, 3, 101–121. https://doi.org/10.1007/s11409-008-9021-5.

Hacker, D. J., Bol, L., Horgan, D. D., & Rakow, E. A. (2000). Test prediction and performance in a classroom context. Journal of Educational Psychology, 92, 160–170. https://doi.org/10.1037//0022-0663.92.1.160.

Händel, M., & Fritzsche, E. S. (2015). Students’ confidence in their performance judgements: A comparison of different response scales. Educational Psychology, 35(3), 377–395. https://doi.org/10.1080/01443410.2014.895295.

Händel, M., & Fritzsche, E. S. (2016). Unskilled but subjectively aware: Metacognitive monitoring ability and respective awareness in low-performing students. Memory and Cognition, 44, 229–241. https://doi.org/10.3758/s13421-015-0552-0.

Higham, P. A., & Gerrard, C. (2005). Not all errors are created equal: Metacognition and changing answers on multiple-choice tests. Canadian Journal of Experimental Psychology, 59(1), 28–34.

Jäger, R., & Bortz, J. (2004). Rating scales with smileys as symbolic labels: Determined and checked by methods of psychophysics. Retrieved from http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.11.5405&rep=rep1&type=pdf

Juslin, P. (1994). The overconfidence phenomenon as a consequence of informal experimenter-guided selection of almanac items. Organizational Behavior and Human Decision Processes, 57, 226–246. https://doi.org/10.1006/obhd.1994.1013.

Juslin, P., & Olsson, H. (1997). Thurstonian and Brunswikian origins of uncertainty in judgment: A sampling model of confidence in sensory discrimination. Psychological Review, 104, 344–366. https://doi.org/10.1037/0033-295X.104.2.344.

Kleitman, S., & Stankov, L. (2007). Self-confidence and metacognitive processes. Learning and Individual Differences, 17(2), 161–173. https://doi.org/10.1016/j.lindif.2007.03.004.

Koriat, A. (1997). Monitoring one's own knowledge during study: A cue-utilization approach to judgments of learning. Journal of Experimental Psychology: General, 126, 349–370.

Kröner, S., & Biermann, A. (2007). The relationship between confidence and self-concept - towards a model of response confidence. Intelligence, 35, 580–590. https://doi.org/10.1016/j.intell.2006.09.009.

Kröner, S., & Robitzsch, A. (2010). Towards a model of response confidence - person and task effects on item-level metacognitive self-evaluation. Paper presented at the 4th biennial meeting of the EARLI Special Interest Group 16 Metacognition, Münster, Germany. http://www.metacognition2010.de/pdf/Programmheft-SIG2010.pdf

Krueger, J., & Mueller, R. A. (2002). Unskilled, unaware, or both? The better-than-average heuristic and statistical regression predict errors in estimates of own performance. Journal of Personality and Social Psychology, 82(2), 180–188. https://doi.org/10.1037//0022-3514.82.2.180.

Kruger, J., & Dunning, D. (1999). Unskilled and unaware of it: How difficulties in recognizing one's own incompetence lead to inflated self-assessments. Journal of Personality and Social Psychology, 77(6), 1121–1134.

Kruger, J., & Dunning, D. (2002). Unskilled and unaware - but why? A reply to Krueger and Mueller (2002). Journal of Personality and Social Psychology, 82(2), 189–192. https://doi.org/10.1037//0022-3514.82.2.189.

Kugler, K. C., Trail, J. B., Dziak, J. J., & Collins, L. M. (2012). Effect coding versus dummy coding in analysis of data from factorial experiments. Technical report series. Retrieved from http://methodology.psu.edu/media/techreports/12-120.pdf

Lew, M. D. N., Alwis, W. A. M., & Schmidt, H. G. (2010). Accuracy of students' self-assessment and their beliefs about its utility. Assessment and Evaluation in Higher Education, 35(2), 135–156. https://doi.org/10.1080/02602930802687737.

Lichtenstein, S., & Fischhoff, B. (1977). Do those who know more also know more about how much they know? Organizational Behavior and Human Performance, 20, 159–183.

Maas, C. J. M., & Hox, J. J. (2005). Sufficient sample sizes for multilevel modeling. Methodology, 1, 86–92. https://doi.org/10.1027/1614-1881.1.3.86.

Merkle, E. C. (2009). The disutility of the hard-easy effect in choice confidence. Psychonomic Bulletin and Review, 16(1), 204–213. https://doi.org/10.3758/PBR.16.1.204.

Merkle, E. C. (2010). Calibrating subjective probabilities using hierarchical Bayesian models. In S.-K. Chai, J. J. Salerno, & P. L. Mabry (Eds.), SBP 2010, 6007 LNCS (pp. 13–22). Berlin: Springer-Verlag.

Meyers, J. L., & Beretvas, S. N. (2006). The impact of inappropriate modeling of cross-classified data structures. Multivariate Behavioral Research, 41(4), 473–497. https://doi.org/10.1207/s15327906mbr4104_3.

Miller, T. M., & Geraci, L. (2011). Unskilled but aware: Reinterpreting overconfidence in low-performing students. Journal of Experimental Psychology: Learning, Memory, and Cognition, 37(2), 502–506. https://doi.org/10.1037/a0021802.

Murayama, K., Sakaki, M., Yan, V. X., & Smith, G. M. (2014). Type I error inflation in the traditional by-participant analysis to metamemory accuracy: A generalized mixed-effects model perspective. Journal of Experimental Psychology: Learning, Memory, and Cognition, 40(5), 1287–1306. https://doi.org/10.1037/a0036914.

Noortgate, W. V. d., Boeck, P. D., & Meulders, M. (2003). Cross-classification multilevel logistic models in psychometrics. Journal of Educational and Behavioral Statistics, 28, 369–386.

Poinstingl, H. (2009). The linear logistic test model (LLTM) as the methodological foundation of item generating rules for a new verbal reasoning test. Psychology Science Quarterly, 51, 123–134.

Raudenbush, S. W., Bryk, A. S., Cheong, Y. F., Congdon, R. T., & du Toit, M. (2011). HLM 7: Hierarchical linear and nonlinear modeling. Chicago: Scientific Software International.

Rouder, J. N., & Lu, J. (2005). An introduction to Bayesian hierarchical models with an application in the theory of signal detection. Psychonomic Bulletin and Review, 12(4), 573–604.

Rouder, J. N., Lu, J., Sun, D., Speckman, P., Morey, R., & Naveh-Benjamin, M. (2007). Signal detection models with random participant and item effects. Psychometrika, 72(4), 621–642. https://doi.org/10.1007/s11336-005-1350-6.

Saenz, G. D., Geraci, L., Miller, T. M., & Tirso, R. (2017). Metacognition in the classroom: The association between students' exam predictions and their desired grades. Consciousness and Cognition, 51, 125–139. https://doi.org/10.1016/j.concog.2017.03.002.

Schraw, G., Kuch, F., & Gutierrez, A. P. (2013). Measure for measure: Calibrating ten commonly used calibration scores. Learning and Instruction, 24(1), 48–57. https://doi.org/10.1016/j.learninstruc.2012.08.007.

Serra, M. J., & DeMarree, K. G. (2016). Unskilled and unaware in the classroom: College students' desired grades predict their biased grade predictions. Memory and Cognition, 44(7), 1127–1137. https://doi.org/10.3758/s13421-016-0624-9.

Stankov, L., & Crawford, J. D. (1997). Self-confidence and performance on tests of cognitive abilities. Intelligence, 25, 93–109. https://doi.org/10.1016/S0160-2896(97)90047-7.

Stankov, L., & Lee, J. (2008). Confidence and cognitive test performance. Journal of Educational Psychology, 100, 961–976. https://doi.org/10.1037/a0012546.

Stankov, L., Lee, J., Luo, W., & Hogan, D. J. (2012). Confidence: A better predictor of academic achievement than self-efficacy, self-concept and anxiety? Learning and Individual Differences, 22(6), 747–758. https://doi.org/10.1016/j.lindif.2012.05.013.

Yates, J. F. (1994). Subjective probability accuracy analysis. In G. Wright & P. Ayton (Eds.), Subjective probability (pp. 381–410). Oxford: John Wiley & Sons.

Zimmerman, B. J. (2000). Attaining self-regulation: A social cognitive perspective. In M. Boekaerts, P. R. Pintrich, & M. Zeidner (Eds.), Handbook of self-regulation (pp. 13–39). San Diego: Academic Press.

Acknowledgements

This research was supported by a grant from the “Sonderfonds für wissenschaftliches Arbeiten an der Universität Erlangen-Nürnberg” (special fund for scientific work at the University of Erlangen-Nuremberg).

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

About this article

Cite this article

Fritzsche, E.S., Händel, M. & Kröner, S. What do second-order judgments tell us about low-performing students’ metacognitive awareness? Metacognition Learning 13, 159–177 (2018). https://doi.org/10.1007/s11409-018-9182-9

DOI: https://doi.org/10.1007/s11409-018-9182-9