Nothing is more dangerous for a new truth than an old misconception.

—Johann Wolfgang von Goethe, Wilhelm Meister’s Journeyman Years, 1829

Abstract

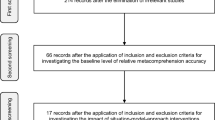

Research shows that misconceptions are usually detrimental to text comprehension. However, whether misconceptions also impair metacomprehension accuracy, that is, the accuracy with which one self-assesses one’s text comprehension, has received far less attention. We conducted a study in which we examined students’ (N = 47) comprehension and metacomprehension accuracy (prediction accuracy and postdiction accuracy) of a statistics text as a function of their statistical misconceptions. Text comprehension and metacomprehension accuracy referred to both conceptual and procedural aspects of statistics. The results showed that students who had more misconceptions achieved poorer conceptual text comprehension and, at the same time, provided more overconfident predictions of their conceptual and procedural text comprehension than students who had fewer misconceptions. In contrast, postdiction accuracy of conceptual and procedural text comprehension was not affected by misconceptions.

References

Alvermann, D. E., Smith, L. C., & Readence, J. E. (1985). Prior knowledge activation and the comprehension of compatible and incompatible text. Reading Research Quarterly, 20(4), 420–436. https://doi.org/10.2307/747852.

Baars, M., Van Gog, T., De Bruin, A. B. H., & Paas, F. (2014a). Effects of problem solving after worked example study on primary school children’s monitoring accuracy. Applied Cognitive Psychology, 28(3), 382–391. https://doi.org/10.1002/acp.3008.

Baars, M., Vink, S., Van Gog, T., De Bruin, A. B. H., & Paas, F. (2014b). Effects of training self-assessment and using assessment standards on retrospective and prospective monitoring of problem solving. Learning and Instruction, 33, 92–107. https://doi.org/10.1016/j.learninstruc.2014.04.004.

Baars, M., Visser, S., Van Gog, T., De Bruin, A. B. H., & Paas, F. (2013). Completion of partially worked-out examples as a generation strategy for improving monitoring accuracy. Contemporary Educational Psychology, 38(4), 395–406. https://doi.org/10.1016/j.cedpsych.2013.09.001.

Batanero, C., Estepa, A., & Godino, J. D. (1997). Evolution of students’ understanding of statistical association in a computer-based teaching environment. In J. Garfield & G. Burrill (Eds.), Research on the role of technology in teaching and learning statistics: Proceedings of the 1996 IASE Round Table Conference (pp. 191–205). Voorburg: International Statistical Institute. Retrieved from http://iase-web.org/documents/papers/rt1996/15.Batanero.pdf.

Batanero, C., Estepa, A., Godino, J. D., & Green, D. R. (1996). Intuitive strategies and preconceptions about association in contingency tables. Journal for Research in Mathematics Education, 27(2), 151–169. https://doi.org/10.2307/749598.

Batanero, C., Godino, J. D., & Estepa, A. (1998). Building the meaning of statistical association through data analysis activities. In A. Olivier & K. Newstead (Eds.), Proceedings of the 22nd Conference of the International Group for the Psychology of Mathematics Education (Vol. 1, pp. 221–236). Stellenbosch: University of Stellenbosch. Retrieved from http://files.eric.ed.gov/fulltext/ED427969.pdf#page=249.

Ben-Zvi, D., & Garfield, J. (2004). Statistical literacy, reasoning, and thinking: Goals, definitions, and challenges. In D. Ben-Zvi & J. Garfield (Eds.), The challenge of developing statistical literacy, reasoning and thinking (pp. 3–16). Dordrecht: Kluwer Academic.

Bol, L., Hacker, D. J., O’Shea, P., & Allen, D. (2005). The influence of overt practice, achievement level, and explanatory style on calibration accuracy and performance. The Journal of Experimental Education, 73(4), 269–290. https://doi.org/10.3200/JEXE.73.4.269-290.

Bortz, J., & Schuster, C. (2010). Statistik für Human- und Sozialwissenschaftler [Statistics for human and social scientists] (7th ed.). Berlin: Springer.

Cohen, J. (1960). A coefficient of agreement for nominal scales. Educational and Psychological Measurement, 20(1), 37–46. https://doi.org/10.1177/001316446002000104.

De Bruin, A. B. H., Rikers, R. M. J. P., & Schmidt, H. G. (2005). Monitoring accuracy and self-regulation when learning to play a chess endgame. Applied Cognitive Psychology, 19(2), 167–181. https://doi.org/10.1002/acp.1109.

De Bruin, A. B. H., Rikers, R. M. J. P., & Schmidt, H. G. (2007). Improving metacomprehension accuracy and self-regulation when learning to play a chess endgame: The effect of learner expertise. European Journal of Cognitive Psychology, 19(4–5), 671–688. https://doi.org/10.1080/09541440701326204.

Diakidoy, I.-A. N., & Kendeou, P. (2001). Facilitating conceptual change in astronomy: A comparison of the effectiveness of two instructional approaches. Learning and Instruction, 11(1), 1–20. https://doi.org/10.1016/S0959-4752(00)00011-6.

Dunlosky, J., & Rawson, K. A. (2012). Overconfidence produces underachievement: Inaccurate self-evaluations undermine students’ learning and retention. Learning and Instruction, 22(4), 271–280. https://doi.org/10.1016/j.learninstruc.2011.08.003.

Dunlosky, J., Rawson, K. A., & Middleton, E. L. (2005). What constrains the accuracy of metacomprehension judgments? Testing the transfer-appropriate-monitoring and accessibility hypotheses. Journal of Memory and Language, 52(4), 551–565. https://doi.org/10.1016/j.jml.2005.01.011.

Dunning, D., Johnson, K., Ehrlinger, J., & Kruger, J. (2003). Why people fail to recognize their own incompetence. Current Directions in Psychological Science, 12(3), 83–87. https://doi.org/10.1111/1467-8721.01235.

Estepa, A., & Batanero, C. (1996). Judgments of correlation in scatter plots: Students’ intuitive strategies and preconceptions. Hiroshima Journal of Mathematics Education, 4, 25–41. Retrieved from http://www.ugr.es/~batanero/pages/ARTICULOS/Hiroshima1996.PDF.

Estepa, A., Batanero, C., & Sánchez, F. T. (1999). Students’ intuitive strategies in judging association when comparing two samples. Hiroshima Journal of Mathematics Education, 7, 17–30. Retrieved from http://www.ugr.es/~batanero/pages/ARTICULOS/Hiroshima1999.PDF.

Estepa, A., & Sánchez, F. T. (2001). Empirical research on the understanding of association and implications for the training of researchers. In C. Batanero (Ed.), Training researchers in the use of statistics: Proceedings of the 2000 IASE Round Table Conference (pp. 37–51). Voorburg: International Statistical Institute. Retrieved from http://www.ugr.es/~batanero/documentos/training.pdf#page=42.

Flesch, R. (1948). A new readability yardstick. Journal of Applied Psychology, 32(3), 221–233. https://doi.org/10.1037/h0057532.

Gal, I. (2002). Adults’ statistical literacy: Meanings, components, responsibilities. International Statistical Review, 70(1), 1–25. https://doi.org/10.1111/j.1751-5823.2002.tb00336.x.

Garfield, J. (2003). Assessing statistical reasoning. Statistics Education Research Journal, 2(1), 22–38. Retrieved from https://www.researchgate.net/profile/Iddo_Gal/publication/239981959_Expanding_conceptions_of_statistical_literacy_An_analysis_of_products_from_statistics_agencies/links/544e27730cf2bca5ce8eef3b.pdf#page=24.

Garfield, J., & Ahlgren, A. (1988). Difficulties in learning basic concepts in probability and statistics: Implications for research. Journal for Research in Mathematics Education, 19(1), 44–63. https://doi.org/10.2307/749110.

Garfield, J., & Ben-Zvi, D. (2007). How students learn statistics revisited: A current review of research on teaching and learning statistics. International Statistical Review, 75(3), 372–396. https://doi.org/10.1111/j.1751-5823.2007.00029.x.

Griffin, T. D., Jee, B. D., & Wiley, J. (2009). The effects of domain knowledge on metacomprehension accuracy. Memory & Cognition, 37(7), 1001–1013. https://doi.org/10.3758/MC.37.7.1001.

Guzzetti, B. J., Snyder, T. E., Glass, G. V., & Gamas, W. S. (1993). Promoting conceptual change in science: A comparative meta-analysis of instructional interventions from reading education and science education. Reading Research Quarterly, 28(2), 116–159. https://doi.org/10.2307/747886.

Huck, S. W. (2016). Statistical misconceptions. New York: Routledge.

Kendeou, P., & van den Broek, P. (2005). The effects of readers’ misconceptions on comprehension of scientific text. Journal of Educational Psychology, 97(2), 235–245. https://doi.org/10.1037/0022-0663.97.2.235.

Kendeou, P., & van den Broek, P. (2007). The effects of prior knowledge and text structure on comprehension processes during reading of scientific texts. Memory & Cognition, 35(7), 1567–1577. https://doi.org/10.3758/BF03193491.

Kenny, D. A. (2004). Correlation and causality (Rev. ed.). New York: Wiley. Retrieved from http://davidakenny.net/books.htm.

Kintsch, W. (1998). Comprehension: A paradigm for cognition. Cambridge: Cambridge University Press.

Koriat, A. (1997). Monitoring one’s own knowledge during study: A cue-utilization approach to judgments of learning. Journal of Experimental Psychology: General, 126(4), 349–370. https://doi.org/10.1037/0096-3445.126.4.349.

Koriat, A., Ma’ayan, H., & Nussinson, R. (2006). The intricate relationships between monitoring and control in metacognition: Lessons for the cause-and-effect relation between subjective experience and behavior. Journal of Experimental Psychology: General, 135(1), 36–69. https://doi.org/10.1037/0096-3445.135.1.36.

Kruger, J., & Dunning, D. (1999). Unskilled and unaware of it: How difficulties in recognizing one’s own incompetence lead to inflated self-assessments. Journal of Personality and Social Psychology, 77(6), 1121–1134. https://doi.org/10.1037/0022-3514.77.6.1121.

Kwon, H., & Linderholm, T. (2014). Effects of self-perception of reading skill on absolute accuracy of metacomprehension judgements. Current Psychology, 33(1), 73–88. https://doi.org/10.1007/s12144-013-9198-x.

Liu, T.-C. (2010). Developing simulation-based computer assisted learning to correct students’ statistical misconceptions based on cognitive conflict theory, using “correlation” as an example. Journal of Educational Technology & Society, 13(2), 180–192. Retrieved from http://www.ifets.info/journals/13_2/15.pdf.

Liu, H.-J. C., & Garfield, J. B. (2002). Sex differences in statistical reasoning. Bulletin of Educational Psychology, 34(1), 123–138. Retrieved from http://ojs.lib.ntnu.edu.tw/index.php/bep/article/viewFile/312/210.

Liu, T.-C., & Lin, Y.-C. (2008, January). Development and application of a two-tier diagnostic test for identifying students’ correlation misconceptions. Paper presented at the 6th Annual Hawaii International Conference on Education, Honolulu. Retrieved from http://hiceducation.org/wp-content/uploads/proceedings-library/EDU2008.pdf.

Liu, T.-C., Lin, Y.-C., & Kinshuk. (2010). The application of Simulation-Assisted Learning Statistics (SALS) for correcting misconceptions and improving understanding of correlation. Journal of Computer Assisted Learning, 26(2), 143–158. https://doi.org/10.1111/j.1365-2729.2009.00330.x.

Liu, T.-C., Lin, Y.-C., & Tsai, C.-C. (2009). Identifying misconceptions about statistical correlation and their possible causes among high school students: An exploratory study using concept mapping with interviews. International Journal of Science and Mathematics Education, 7(4), 791–820. https://doi.org/10.1007/s10763-008-9142-y.

Maki, R. H., Shields, M., Wheeler, A. E., & Zacchilli, T. L. (2005). Individual differences in absolute and relative metacomprehension accuracy. Journal of Educational Psychology, 97(4), 723–731. https://doi.org/10.1037/0022-0663.97.4.723.

Mevarech, Z. R., & Kramarsky, B. (1997). From verbal descriptions to graphic representations: Stability and change in students’ alternative conceptions. Educational Studies in Mathematics, 32(3), 229–263. https://doi.org/10.1023/A:1002965907987.

Moritz, J. (2004). Reasoning about covariation. In D. Ben-Zvi & J. Garfield (Eds.), The challenge of developing statistical literacy, reasoning and thinking (pp. 227–255). Dordrecht: Kluwer Academic.

Morris, E. J. (1998). Link: The principled design of a computer-assisted learning program for correlation. In Proceedings of the 5th international conference on teaching statistics. Retrieved from http://citeseerx.ist.psu.edu/viewdoc/download;jsessionid=EC6CE7E46B65FEB1E3C31AAECAAAEF8B?doi=10.1.1.215.8383&rep=rep1&type=pdf.

Morris, E. J. (1999). The design and evaluation of link: A CAL system designed to address psychology students’ misconceptions about correlation (Doctoral Dissertation). Retrieved from http://oro.open.ac.uk/19856/1/pdf81.pdf.

Morris, E. J. (2001). The design and evaluation of Link: A computer-based learning system for correlation. British Journal of Educational Technology, 32(1), 39–52. https://doi.org/10.1111/1467-8535.00175.

Nelson, T. O. (1984). A comparison of current measures of the accuracy of feeling-of-knowing predictions. Psychological Bulletin, 95(1), 109–133. https://doi.org/10.1037/0033-2909.95.1.109.

Perepiczka, M., Chandler, M., & Becerra, M. (2011). Relationship between graduate students’ statistics self-efficacy, statistics anxiety, attitude toward statistics, and social support. The Professional Counselor, 1(2), 99–108. https://doi.org/10.15241/mpa.1.2.99.

Perfetti, C. A. (1985). Reading ability. New York: Oxford University Press.

Pierce, B. H., & Smith, S. M. (2001). The postdiction superiority effect in metacomprehension of text. Memory & Cognition, 29(1), 62–67. https://doi.org/10.3758/BF03195741.

Richter, T., & van Holt, N. (2005). ELVES: Ein computergestütztes Diagnostikum zur Erfassung der Effizienz von Teilprozessen des Leseverstehens [ELVES: Computer-based assessment of the efficiency of component processes involved in reading comprehension]. Diagnostica, 51(4), 169–182. https://doi.org/10.1026/0012-1924.51.4.169.

Rittle-Johnson, B., & Schneider, M. (2015). Developing conceptual and procedural knowledge of mathematics. In R. C. Kadosh & A. Dowker (Eds.), The Oxford handbook of numerical cognition (pp. 1118–1134). Oxford: Oxford University Press.

Schneider, W., Körkel, J., & Weinert, F. E. (1989). Domain-specific knowledge and memory performance: A comparison of high- and low-aptitude children. Journal of Educational Psychology, 81(3), 306–312. https://doi.org/10.1037/0022-0663.81.3.306.

Schneider, M., Rittle-Johnson, B., & Star, J. R. (2011). Relations among conceptual knowledge, procedural knowledge, and procedural flexibility in two samples differing in prior knowledge. Developmental Psychology, 47(6), 1525–1538. https://doi.org/10.1037/a0024997.

Schraw, G. (2009). A conceptual analysis of five measures of metacognitive monitoring. Metacognition and Learning, 4(1), 33–45. https://doi.org/10.1007/s11409-008-9031-3.

Sundre, D. L. (2003, April). Assessment of quantitative reasoning to enhance educational quality. Paper presented at the annual American Educational Research Association, Chicago. Retrieved from https://www.stat.auckland.ac.nz/~iase/cblumberg/sundreqrqpaper.pdf.

Thiede, K. W., Anderson, M. C. M., & Therriault, D. (2003). Accuracy of metacognitive monitoring affects learning of texts. Journal of Educational Psychology, 95(1), 66–73. https://doi.org/10.1037/0022-0663.95.1.66.

Thiede, K. W., Griffin, T. D., Wiley, J., & Redford, J. S. (2009). Metacognitive monitoring during and after reading. In A. C. Graesser, D. J. Hacker, & J. Dunlosky (Eds.), Handbook of metacognition in education (pp. 85–106). New York: Routledge.

Tippett, C. D. (2010). Refutation text in science education: A review of two decades of research. International Journal of Science and Mathematics Education, 8(6), 951–970. https://doi.org/10.1007/s10763-010-9203-x.

Van Loon, M. H., de Bruin, A. B. H., van Gog, T., & van Merriënboer, J. J. (2013). Activation of inaccurate prior knowledge affects primary-school students’ metacognitive judgments and calibration. Learning and Instruction, 24(1), 15–25. https://doi.org/10.1016/j.learninstruc.2012.08.005.

Van Loon, M. H., Dunlosky, J., van Gog, T., van Merriënboer, J. J., & de Bruin, A. B. H. (2015). Refutations in science texts lead to hypercorrection of misconceptions held with high confidence. Contemporary Educational Psychology, 42, 39–48. https://doi.org/10.1016/j.cedpsych.2015.04.003.

Acknowledgements

This study was funded by the German Federal Ministry of Education and Research (BMBF; 01JA1518A).

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Appendices

Bortz and Schuster (2010, pp. 153–156). Translated from German and adapted with permission.

Appendix 1: Text

Covariance

Covariance is a measure of association that is used to describe linear relationships. A relationship between two variables is linear if it can be represented by a fit line. If the pairs of observations on two variables are drawn as points in a scatter plot with the axes representing the x- and y-variables, the points would have to lie exactly on a line when there is a linear relationship. However, for nearly all variable relationships that are of interest in research, this requirement is not met. Nevertheless, the line is a useful model for describing the linear relationship between two variables. For example, the trend between body weight x and body height y for a sample of people can be described linearly even if not all points lie exactly on a line. The magnitude of the covariance reflects how strongly the points scatter around the hypothetical line. If the absolute covariance value is high, the points lie close to the line. The slope of the line, however, depends on the measurement units in which the x- and y-variables are represented in the scatter plot.

A high positive covariance results if an above-average value in variable x frequently corresponds to an above-average value in variable y and, accordingly, a below-average value in variable x frequently corresponds to a below-average value in variable y (Fig. A).

A high negative covariance results if an above-average value in variable x frequently corresponds to a below-average value in variable y and, accordingly, a below-average value in variable x frequently corresponds to an above-average value in variable y (Fig. B).

If there is a covariance of zero between two variables, for above-average values in variable x there will be above-average values in variable y as well as below-average values in variable y and vice versa. Therefore, a covariance of zero indicates that there is no linear relationship between two variables. In this case, however, the variables can still be related in a nonlinear manner. Conceivable relationships are, for example, exponential, logarithmic, S-shaped, or U-shaped relationships (Fig. C).

The covariance between two variables can be calculated with the following formula:

\[\mathrm{cov}(x, y) = \frac{\sum_{i = 1}^{N} (x_{i} - \bar{x}) \cdot (y_{i} - \bar{y})}{N}\]

Every studied object produces a pair of observations (x, y), whereby x and y can lie more or less far away from their respective sample means. If both values lie well above or well below their means, the product of deviations \((x_{i} - \bar{x}) \cdot (y_{i} - \bar{y})\) is high and positive. If the values do not deviate much, the product of deviations will be smaller. The sum of the products of deviations of all objects is therefore a measure of the degree of “covariation” of the series of pairs of observations. To account for the number of objects that are included in this sum, the sum is divided by the sample size N.
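As a minimal sketch of this calculation, the following Python function sums the products of deviations and divides by the sample size N, as described above. The function name `covariance` and the three small data sets are illustrative assumptions and are not data from the study.

```python
def covariance(xs, ys):
    """Sum of the products of deviations, divided by the sample size N."""
    if len(xs) != len(ys) or not xs:
        raise ValueError("xs and ys must be non-empty and of equal length")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    return sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / n


# Hypothetical observations (not taken from the study).
xs = [1, 2, 3, 4, 5]
rising = [2, 4, 6, 8, 10]    # above-average x goes with above-average y
falling = [10, 8, 6, 4, 2]   # above-average x goes with below-average y
u_shaped = [4, 1, 0, 1, 4]   # nonlinear (U-shaped) relationship

cov_rising = covariance(xs, rising)
cov_falling = covariance(xs, falling)
cov_u = covariance(xs, u_shaped)

print(cov_rising)   # 4.0
print(cov_falling)  # -4.0
print(cov_u)        # 0.0
```

In the U-shaped data set, y is fully determined by x, yet the positive and negative products of deviations cancel out, so the covariance is zero. This matches the point that a covariance of zero rules out only a linear relationship.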

Therefore, covariance indicates whether two variables vary together. It does not, however, provide statistical evidence on whether the two variables are causally related. Covariance can at most give a first hint of a potential cause-and-effect relationship.

Moreover, as a nonstandardized statistic, covariance depends on the measurement units of the underlying variables. Theoretically, covariance values can range from negative infinity to positive infinity. For example, if in two studies the variables body weight and/or body height are measured in different units (e.g., weight: kg, g; height: m, cm), the studies will yield covariances between these two variables that are not directly comparable.
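The dependence on measurement units can be made explicit with a short derivation. As a sketch, assume that each variable is converted to another unit by a constant factor (a for x, b for y; both symbols are introduced here for illustration only). The sum of the products of deviations divided by N then rescales as follows:

\[\mathrm{cov}(a x, b y) = \frac{1}{N} \sum_{i = 1}^{N} (a x_{i} - a \bar{x}) \cdot (b y_{i} - b \bar{y}) = a b \cdot \frac{1}{N} \sum_{i = 1}^{N} (x_{i} - \bar{x}) \cdot (y_{i} - \bar{y}) = a b \cdot \mathrm{cov}(x, y)\]

For instance, converting weight from kg to g (a = 1000) and height from m to cm (b = 100) multiplies the covariance by 100,000, although the relationship between the two variables is unchanged.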

Appendix 2: Misconceptions test

Parts added for clarity appear in italics. These parts were not provided to the participants in the study.

Appendix 3: Misconceptions about covariance and respective references

| Misconception | References |
|---|---|
| M1 Covariance enables definite predictions | Batanero et al. (1996, 1997, 1998), Estepa and Batanero (1996), Estepa et al. (1999), Liu (2010), Liu and Lin (2008), and Liu et al. (2010) |
| M2 Negative covariance indicates the absence of a relationship | Batanero et al. (1996, 1997, 1998), Liu and Lin (2008), Mevarech and Kramarsky (1997), and Morris (1998, 1999, 2001, 2004) |
| M3 Covariance is related to the slope of the fit line | |
| M4 A straight fit line without deviation invariably indicates perfect covariance | |
| M5 Covariance implies causality | Estepa and Sánchez (2001), Garfield (2003), Liu and Lin (2008), Liu et al. (2009), Morris (1998, 1999, 2001), and Sundre (2003) |
| M6 Positive covariance is always stronger than negative covariance | Estepa and Sánchez (2001), Liu (2010), Liu and Lin (2008), Liu et al. (2009, 2010), and Morris (1998, 1999, 2001) |
| M7 Covariance can be interpreted from isolated points in scatter plots | Batanero et al. (1996, 1997, 1998), Estepa and Batanero (1996), Estepa et al. (1999), Mevarech and Kramarsky (1997), and Moritz (2004) |
| M8 Zero covariance indicates the absence of any association | Estepa and Sánchez (2001), Liu (2010), Liu and Lin (2008), and Liu et al. (2009, 2010) |
| M9 Only consider covariance if attributable to a causal relationship | |
| M10 Only a straight fit line without deviation indicates covariance | |
| M11 Positive and zero covariance are always stronger than negative covariance | |
| M12 Covariance is a standardized statistic | Batanero et al. (1997, 1998), Estepa and Sánchez (2001), Liu (2010), Liu and Lin (2008), and Liu et al. (2010) |
| M13 Covariance changes if the variables are reversed | Estepa and Sánchez (2001), Liu (2010), Liu and Lin (2008), and Liu et al. (2010) |
| M14 More negative covariance indicates a weaker relationship | |
| M15 The fit line of perfect covariance must have a constant slope of 45° | |

Appendix 4: Conceptual comprehension questions

Parts added for clarity appear in italics. These parts were not provided to the participants in the study.

Appendix 5: Procedural comprehension questions

Appendix 6: Results on metacomprehension accuracy in terms of absolute accuracy

Misconceptions and prediction accuracy

As can be seen in Table 8, the linear regression analyses revealed that misconceptions significantly influenced neither the prediction absolute accuracy of conceptual comprehension nor the prediction absolute accuracy of procedural comprehension. Hence, in contrast to bias, the absolute accuracy with which participants predicted their conceptual and procedural text comprehension was not affected by their misconceptions. This is because bias took both negative and positive values: participants with fewer misconceptions tended to be underconfident, whereas participants with more misconceptions tended to be overconfident. Thus, misconceptions affected the direction of the deviations between predicted and actual comprehension (bias) but not the magnitude of these deviations (absolute accuracy).
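The difference between the two measures can be illustrated with a minimal sketch. It assumes that bias is the signed difference between predicted and actual comprehension (positive values meaning overconfidence) and that absolute accuracy is the absolute value of that difference. The function names and the four hypothetical participants are invented for illustration and are not data from the study.

```python
def bias(predicted, actual):
    """Signed deviation; positive means overconfident (assumed convention)."""
    return predicted - actual


def absolute_accuracy(predicted, actual):
    """Magnitude of the deviation, ignoring its direction."""
    return abs(predicted - actual)


# Hypothetical participants: (number of misconceptions, predicted, actual).
participants = [
    (1, 4, 6),  # few misconceptions, underconfident
    (2, 5, 7),
    (8, 7, 5),  # many misconceptions, overconfident
    (9, 8, 6),
]

biases = [bias(p, a) for _, p, a in participants]
magnitudes = [absolute_accuracy(p, a) for _, p, a in participants]

print(biases)      # [-2, -2, 2, 2]
print(magnitudes)  # [2, 2, 2, 2]
```

In this constructed example, the sign of the bias changes with the number of misconceptions, whereas the magnitude of the deviation is identical for all four participants. A regression on the magnitudes would therefore show no effect even though the direction of the deviations differs.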

Misconceptions and postdiction accuracy

As shown in Table 9, the linear regression analyses revealed that misconceptions significantly influenced neither the postdiction absolute accuracy of conceptual comprehension nor the postdiction absolute accuracy of procedural comprehension.

Cite this article

Prinz, A., Golke, S. & Wittwer, J. The double curse of misconceptions: misconceptions impair not only text comprehension but also metacomprehension in the domain of statistics. Instr Sci 46, 723–765 (2018). https://doi.org/10.1007/s11251-018-9452-6

DOI: https://doi.org/10.1007/s11251-018-9452-6