Abstract

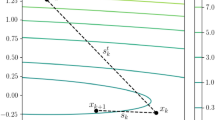

We introduce a new, efficient nonlinear conjugate gradient method for unconstrained optimization, based on minimizing a penalty function. The penalty function combines the good properties of the linear conjugate gradient method by means of penalty parameters. We show that the new method is a member of the Dai–Liao family and, more importantly, propose an efficient Dai–Liao parameter obtained by closely analyzing the penalty function. Numerical experiments show that the proposed parameter is promising.
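
For orientation, the Dai–Liao family referred to above is usually written with the search direction d_{k+1} = -g_{k+1} + β_k d_k, where β_k = g_{k+1}^T (y_k - t s_k) / (d_k^T y_k), s_k = x_{k+1} - x_k, y_k = g_{k+1} - g_k, and t ≥ 0 is the Dai–Liao parameter. The following is a minimal sketch of that generic update only. It does not implement the parameter proposed in the paper, which the abstract does not state, so `t` is left as an input. The function names `dot` and `dai_liao_direction` are hypothetical and chosen for illustration.

```python
def dot(u, v):
    """Euclidean inner product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def dai_liao_direction(g_new, g_old, d, s, t):
    """Return (d_new, beta) for the generic Dai-Liao update.

    g_new, g_old : gradients at the new and previous iterates
    d            : previous search direction
    s            : step x_new - x_old
    t            : Dai-Liao parameter (an input here, not the paper's choice)
    """
    # Gradient difference y_k = g_{k+1} - g_k
    y = [a - b for a, b in zip(g_new, g_old)]
    dty = dot(d, y)
    if dty == 0.0:
        raise ZeroDivisionError("d^T y is zero; beta is undefined")
    # beta = g_{k+1}^T (y_k - t s_k) / (d_k^T y_k)
    beta = (dot(g_new, y) - t * dot(g_new, s)) / dty
    # d_{k+1} = -g_{k+1} + beta * d_k
    d_new = [-gi + beta * di for gi, di in zip(g_new, d)]
    return d_new, beta
```

By construction the new direction satisfies the Dai–Liao conjugacy condition d_{k+1}^T y_k = -t g_{k+1}^T s_k, and setting t = 0 recovers the Hestenes–Stiefel formula.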

Acknowledgments

The author thanks the Research Council of K. N. Toosi University of Technology for supporting this work and sincerely appreciates the helpful comments and suggestions provided by the anonymous referees.

Cite this article

Fatemi, M. An Optimal Parameter for Dai–Liao Family of Conjugate Gradient Methods. J Optim Theory Appl 169, 587–605 (2016). https://doi.org/10.1007/s10957-015-0786-9

DOI: https://doi.org/10.1007/s10957-015-0786-9