Abstract

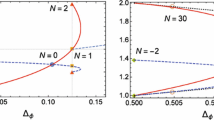

The fractal dimensions of the hull, the external perimeter and of the red bonds are measured through Monte Carlo simulations for dilute minimal models, and compared with predictions from conformal field theory and SLE methods. The dilute models used are those first introduced by Nienhuis. Their loop fugacity is \(\beta=-2 \cos(\pi/\bar{\kappa})\) where the parameter \(\bar{\kappa}\) is linked to their description through conformal loop ensembles. It is also linked to conformal field theories through their central charges \(c(\bar{\kappa})=13-6(\bar{\kappa}+\bar{\kappa}^{-1})\) and, for the minimal models of interest here, \(\bar{\kappa}=p/p'\) where p and p′ are two coprime integers. The geometric exponents of the hull and external perimeter are studied for the pairs (p,p′)=(1,1),(2,3),(3,4),(4,5),(5,6),(5,7), and that of the red bonds for (p,p′)=(3,4). Monte Carlo upgrades are proposed for these models as well as several techniques to improve their speeds. The measured fractal dimensions are obtained by extrapolation on the lattice size H,V→∞. The extrapolating curves have large slopes; despite these, the measured dimensions coincide with theoretical predictions up to three or four digits. In some cases, the theoretical values lie slightly outside the confidence intervals; explanations of these small discrepancies are proposed.

Similar content being viewed by others

References

Adams, D.A., Sander, L.M., Ziff, R.M.: Fractal dimensions of the Q-state Potts model for the complete and external hulls. J. Stat. Mech. P03004 (2010). arXiv:1001.0055v1 [cond-mat.stat-mech]

Aharony, A., Asikainen, J.: Fractal dimensions and corrections to scaling for critical Potts clusters. Fractals 11, 3–7 (2003). arXiv:cond-mat/0206367

Arguin, L.-P.: Homology of Fortuin-Kasteleyn clusters of Potts models on the torus. J. Stat. Phys. 109, 301–310 (2002). arXiv:hep-th/0111193

Asikainen, J., Aharony, A., Mandelbrot, B.B., Rauch, E.M., Hovi, J.P.: Fractal geometry of critical Potts clusters. Eur. Phys. J. B 34, 479–487 (2003). arXiv:cond-mat/0212216

Batchelor, M.T., Suzuki, J., Yung, C.M.: Exact results for Hamiltonian walks from the solution of the fully packed loop model on the honeycomb lattice. Phys. Rev. Lett. 73, 2646–2649 (1994). arXiv:cond-mat/9408083v1

Beffara, V.: The dimension of the SLE curves. Ann. Probab. 36(4), 1421–1452 (2008). arXiv:math/0211322v3 [math.PR]

Blöte, H.W.J., Nienhuis, B.: Critical behaviour and conformal anomaly of the \(\mathcal{O}(n)\) model on the square lattice. J. Phys. A, Math. Gen. 22, 1415–1438 (1989)

Blöte, H.W.J., Knops, Y.M.M., Nienhuis, B.: Geometrical aspects of critical Ising configurations in two dimensions. Phys. Rev. Lett. 68, 3440–3443 (1992)

Camia, F., Newman, C.M.: Two-dimensional critical percolation: the full scaling limit. Commun. Math. Phys. 268, 1–38 (2006). arXiv:math/0605035v1 [math.PR]

Chayes, L., Machta, J.: Graphical representations and cluster algorithms II. Physica A 254, 477–516 (1998)

Coniglio, A.: Fractal structure of Ising and Potts clusters: exact results. Phys. Rev. Lett. 62, 3054–3057 (1989)

Deng, Y., Blöte, H.W.J., Nienhuis, B.: Geometric properties of two-dimensional critical and tricritical Potts models. Phys. Rev. E 69, 026123 (2004)

Deng, Y., Garoni, T.M., Guo, W., Blöte, H.W.J., Sokal, A.D.: Cluster simulations of loop models on two-dimensional lattices. Phys. Rev. Lett. 98, 120601 (2007). arXiv:cond-mat/0608447v3 [cond-mat.stat-mech]

Ding, C., Deng, Y., Guo, W., Qian, X., Blöte, H.W.J.: Geometric properties of two-dimensional \(\mathcal{O}(n)\) loop configurations. J. Phys. A, Math. Theor. 40, 3305–3317 (2007). arXiv:cond-mat/0608547v1 [cond-mat.stat.mech]

Draper, N.R., Smith, H.: Applied Regression Analysis, 3rd edn. Wiley, New York (1998)

Dubail, J., Jacobsen, J.L., Saleur, H.: Conformal boundary conditions in the critical \(\mathcal{O}(n)\) model and dilute loop models. Nucl. Phys. B 827, 457–502 (2010). arXiv:0905.1382v1

Duminil-Copin, H., Smirnov, S.: Conformal invariance of lattice models. arXiv:1109.1549v2 [math.PR]

Duplantier, B.: Critical exponents of Manhattan Hamiltonian walks in two dimensions, from Potts and \(\mathcal{O}(n)\) models. J. Stat. Phys. 49, 411–431 (1987)

Duplantier, B.: Two-dimensional fractal geometry, critical phenomena and conformal invariance. Phys. Rep. 184(2–4), 229–257 (1989)

Duplantier, B.: Conformally invariant fractals and potential theory. Phys. Rev. Lett. 84(7), 1363–1367 (2000)

Fishman, G.S.: A First Course in Monte Carlo. Duxbury Press, Belmont (2006)

Grossman, T., Aharony, A.: Structure and perimeters of percolation clusters. J. Phys. A, Math. Gen. 19, L745–L751 (1986)

Ikhlef, Y., Cardy, J.: Discretely holomorphic parafermions and integrable loop models. J. Phys. A 102001 (2009). arXiv:0810.5037v2 [math-ph]

Kager, W., Nienhuis, B.: A guide to stochastic Loewner evolution and its applications. J. Stat. Phys. 115, 1149–1229 (2004). arXiv:math-ph/0312056v3

Kenyon, R.: Conformal invariance of domino tiling. Ann. Probab. 28, 759–795 (2000). arXiv:math-ph/9910002v1

Langlands, R., Lewis, M.-A., Saint-Aubin, Y.: Universality and conformal invariance for the Ising model in domains with boundary. J. Stat. Phys. 98, 131–244 (2000)

Liu, Q., Deng, Y., Garoni, T.M.: Worm Monte Carlo study of the honeycomb-lattice loop model. Nucl. Phys. B 846, 283–315 (2011). arXiv:1011.1980v2 [cond-mat.stat-mech]

Mandelbrot, B.B.: Negative fractal dimensions and multifractals. Physica A 163, 306–315 (1990)

Nienhuis, B.: Critical and multicritical \(\mathcal{O}(n)\) models. Physica A 163, 152–157 (1990)

Pearce, P.A., Rasmussen, J., Zuber, J.-B.: Logarithmic minimal models. J. Stat. Mech. P11017 (2006). arXiv:hep-th/0607232v3

Pinson, T.H.: Critical percolation on the torus. J. Stat. Phys. 75, 1167–1177 (1994)

Rohde, S., Schramm, O.: Basic properties of SLE. Ann. Math. 161, 883–924 (2005). arXiv:math/0106036v4 [math.PR]

Saint-Aubin, Y., Pearce, P.A., Rasmussen, J.: Geometric exponents, SLE and logarithmic minimal models. J. Stat. Mech. P02028 (2009). arXiv:0809.4806v2

Saleur, H., Duplantier, B.: Exact determination of the percolation hull exponent in two dimensions. Phys. Rev. Lett. 58, 2325–2328 (1987)

Sheffield, S.: Exploration trees and conformal loop ensembles. Duke Math. J. 147(1), 79–129 (2006). arXiv:math/0609167v2 [math.PR]

Smirnov, S.: Critical percolation in the plane: conformal invariance. Cardy’s formula, scaling limits. C. R. Acad. Sci. Paris Sér. I Math. 333, 239–244 (2001)

Smirnov, S.: Conformal invariance in random cluster models. I. Holomorphic fermions in the Ising model. Ann. Math. 172, 1435–1467 (2010). arXiv:0708.0039v1 [math-ph]

Stanley, H.E.: Cluster shapes at the percolation threshold: an effective cluster dimensionality and its connection with critical-point exponents. J. Phys. A, Math. Gen. 10, L211–L220 (1977)

Swendsen, R.H., Wang, J.-S.: Nonuniversal critical dynamics in Monte Carlo simulations. Phys. Rev. Lett. 58(2), 86–88 (1987)

Vanderzande, C.: Fractal dimensions of Potts clusters. J. Phys. A, Math. Gen. 25, L75–L80 (1992)

Werner, W.: The conformally invariant measure on self-avoiding loops. J. Am. Math. Soc. 21, 137–169 (2008). arXiv:math/0511605v3 [math.PR]

Acknowledgements

This work is supported by the Canadian Natural Sciences and Engineering Research Council (Y.S.-A.) and the Australian Research Council (P.A.P. and J.R.). J.R. is supported by the Australian Research Council under the Future Fellowship scheme, project number FT100100774. We acknowledge Amelia Brennan who carried out some preliminary numerical calculations for small lattices as part of her Summer Vacation Scholarship at Melbourne University.

Author information

Authors and Affiliations

Corresponding author

Appendices

Appendix A: Upgrade Algorithms

In this appendix, we first present the upgrade algorithm common to all the models. The subsequent subsections sketch the ideas relevant for particular models.

1.1 A.1 The Basic Algorithm

For the dense loop models \(\mathcal{LM}(p,p')\), reviewed in Appendix C, each box is in either one of two faces, corresponding to the two w-faces of \(\mathcal{DLM}(p,p')\). A straightforward Metropolis-Hastings upgrade can be chosen as simply changing one box at a time and checking whether the usual Monte Carlo condition is verified. (See [33].) For the dilute loop models \(\mathcal{DLM}(p,p')\), the edges of the nine possible states are not necessarily crossed by loop segments, as is shown in Fig. 1. Indeed, the empty state contains no loop segments, two edges of the u- and v-faces are crossed by a loop segment and all four edges of the w-faces are crossed. As the loop segment must remain continuous at box interfaces, we could refine the previous algorithm by requiring that the edges that are crossed before the change, and only those, remain crossed after the change. But then the only effective change would be the flipping of a w-face to its mirror face, thus preventing the algorithm from sampling over all configuration space. Because of this, we are forced to consider changing many contiguous boxes in a single upgrade step.

The upgrade step must therefore change an m×n block of boxes, with m,n≥2. First an m×n block is chosen at random in the H×V lattice on the cylinder. Because this block has to fit entirely in the lattice, this amounts to placing randomly the upper left box of the block in the first V−n+1 rows, all possibilities being weighted uniformly. Second, the content of the m×n block is changed for another admissible block. To be admissible, the 2(m+n) edges of the block must be crossed by a loop if they were in the original block, and be free of crossing if the original edge had none. For instance, if the chosen 3×3 block corresponds to Fig. 17(a), then an admissible replacement is shown in Fig. 17(b), while Fig. 17(c) shows a forbidden replacement block. The new block must be chosen uniformly among admissible ones. Finally, the Boltzmann weight \(u^{n_{u}}v^{n_{v}}w^{n_{w}}\beta^{N}\) of the configuration where the m×n block is replaced by the new choice is computed and compared with the original one (see Eq. (6)): the Metropolis-Hastings ratio decides whether the replacement is to be accepted or rejected. Note that, even though the change is limited to the block, the computation of the weight involves counting the number of closed loops (except when β=1) and might therefore require exploring the configuration at a large distance of the m×n block under consideration.

The requirement of uniformity of the block state among admissible ones raises a subtle problem. One might think that it is achieved by simply choosing the face of each of the m×n boxes one after the other, respecting at each step the conditions on the perimeter. This is not the case as the next example shows. Suppose that the m=n=3 block to be changed is that of Fig. 17(a) and that the new box states will be chosen from left to right, top to bottom. If the first box to be chosen is the upper left one, three choices are admissible: the u-face u 2 that has a single west-north crossing, and both w-faces w 1 and w 2. (As in Sect. 2.1.2, the index of the letters u, v and w refers to the order of Fig. 1, the bottom box being labeled by 1.) Each of these three faces will be given probability \(\frac{1}{3}\). It is easy to check that there are two possible replacements for the next box, and only one for the last box of the top row. Similarly, two faces are admissible for the leftmost box of the second row. So far, twelve different fillings are possible, each occurring with probability \(\frac{1}{12}\). The difficulty occurs for the box at the center. Two of the twenty-seven possible fillings are shown in Fig. 18. For the filling on the left, two choices are allowed: the u 4 or the empty face. But for the filling on the right, three are possible, namely the u 2, w 1 and w 2 faces. This means that, starting from the center box, the blocks obtained from the filling on the left will occur with probability \(\frac{1}{24}\), and the ones obtained from the right filling will get probability \(\frac{1}{36}\): this violates the requirement of uniformity. To assure uniformity, one has to determine first, for a given block, the number of allowed replacements. We found it more efficient to count beforehand these for all the possible edge configurations of the perimeter, or boundary state, for the m×n block, and actually construct a list of the admissible replacements. For m=n=3, there is a total of 113 361 possible replacements for the 2048 boundary states. Depending on the boundary state, there can be as little as 18 admissible replacements or as many as 690.

We decided to work with 3×3 blocks. The list of possible replacements for larger blocks would take up much more memory. Moreover, given some boundary state on the block being changed, the variations in probability brought by tentative replacements would likely be in a wider range and lead to a higher rejection rate. Such an argument does not hold for 2×2 blocks, the smallest that allow exploration of the whole configuration space, for which the list of replacements is short and the acceptance rate is high. However, some experimentation with this shorter list shows that the autocorrelation between upgrades, and therefore the number of upgrades between statistically independent measurements, is very high. We found the 3×3 blocks to be a good compromise.

1.2 A.2 Improvements

The Ising model is described by the dilute phase of \(\mathcal {DLM}(3,4)\). Since the loop fugacity of this model is β=1, there is no need to know the number of loops crossing the m×n block when computing the Boltzmann weight. This simplification greatly speeds up the algorithm because loop counting is by far its most time-consuming component.

It is more difficult to improve the algorithm for models with β∈(0,2]∖{1}. The rest of this paragraph is devoted to this problem, while the case β=0 will be discussed in Sect. A.3. One can distinguish between models according to whether 0<β<1 or 1<β≤2, because models of the first type will tend to be filled with less loops than those of the second type. Most configurations of both types of models have large loops, some being of length of the same order as that of the defect.

Our first attempt to compute Boltzmann weights was rather naive. We simply followed every connected path that crosses the m×n block until it returns to its starting point or, if the path is part of the defect, reaches the boundary of the lattice; that works, but it is slow, especially for large lattices. It is slow due to the presence of the defect and, potentially, of long loops. The problem is particularly acute for those models with large β, e.g. the dilute phase of \(\mathcal{DLM}(1,1)\), because the predicted hull fractal dimension of the defect is large and lots of loops are present.

A way of reducing the impact of the defect crossing the m×n block is to assign a time order to each edge it crosses, starting from its entry point down to its exiting point. Because every edge crossed by the defect is then identified in some way as belonging to it, it is possible to distinguish the defect from a loop during loop counting. Moreover, the time ordering allows to identify when the defect enters first the block and when it leaves it for good. Since these two edges cannot change when going from the original to the replacement block, it allows for quick identification of those situations where the defect generates a loop or absorbs one intersecting the block.

Large loops are common, some with size commensurate to that of the defect. When the m×n block selected for the upgrade is crossed by one of these, a slowing down of the algorithm similar to that encountered for the defect is observed. To get over this problem, we time-ordered the edges of each loop, starting from an arbitrary edge on its path, and also assigned a unique number to each loop. During the loop counting phase of the former block, the number of different loops crossing the block is now obtained very quickly: it is simply the total of different loop numbers. The time order is useful because it allows the algorithm to know how the different edges of the same loop or defect are connected to each other outside the m×n block. Like for the entry and exit points of the defect, those external connections will not change after replacement of the m×n block. So, with this information, during the loop counting step for the replacement block, the algorithm does not have to follow the path of the loops (or defect) which lies outside the block since the reentry point is now known.

1.3 A.3 The Case β=0

The percolation model, as described by the dilute phase of \(\mathcal {DLM}(2,3)\), needs a special algorithm to be simulated efficiently since its β=0 loop fugacity forbids the presence of loops. It is still possible to choose an m×n replacement block on the sole basis that it suits the boundary conditions of the block, but then if the chosen configuration creates a loop, this choice will have to be rejected. To get rid of this problem, we need to know what the external connections of the block are, as described in Sect. A.2; this information is easily retrieved if the defect is time-ordered. All that is left to do is to choose, with uniform probability, a replacement amongst the blocks respecting both the boundary conditions and external connections. In Fig. 19, an example of a possible replacement admitting no loop is shown for a 3×3 block on a lattice with cylindrical geometry.

To obtain a new list of replacement blocks appropriate for this model, the external connections of the m×n block have to be taken into account, so it is necessary to be able to enumerate them all. To do so, one may use a diagrammatic representation, an example of which can be seen in Fig. 20. This example of a diagram corresponds to the external connections of Figs. 19(a) and 19(b). Under this representation, the connected edges are linked pairwise by arches, and the entry and exit edges of the defect in the block, depicted by pale dots in the figures, are linked at infinity by vertical lines. The linking arches may not cross each other, but they may go “under” the vertical lines, that is without intersecting them, as the defect may wind around the cylinder without self-intersecting. For given block boundary conditions, consisting of p connected and 2m+2n−p unconnected edges, there are

different external connections, where \(C_{i}=\binom{i}{i/2}-\binom {i}{i/2-1}\). To obtain the new list of replacements, one must first fix the boundary conditions and external connections of the m×n block and then, using the list of Sect. A.1, check whether any of the suggested replacements yields loops; those that do not generate loops belong to the new list. This testing has to be repeated for all possible external connections matching these fixed boundary conditions. Finally, repeating this process for all 2048 boundary conditions completes the list of replacements. For a 3×3 block, we find, using Eq. (30), a total of

different external connections, leading to a list of over 3.8×106 replacements. This is to be compared with the 113 361 possible replacements of the standard β>0 list.

Diagrammatic representation of the external connections shown in Fig. 19. The points are aligned by starting from the top left edge and following the boundary clockwise

When the β=0 algorithm steps on an empty m×n block, nothing can be done since loops are not allowed, and so the algorithm skips this block and chooses a new one. According to Eq. (7), the β=0, or λ=π/8, model is the most diluted of all the β≥0 models. That is, the ratios of the u,v and w weights with that of the empty face are at their smallest. Empty blocks therefore occur frequently for β=0 and the present algorithm takes advantage of this. Even though the β=0 algorithm is involved and its list of replacements is heavy, it is the second fastest algorithm, second only to the one for β=1. By second fastest we mean that the algorithm for β=0 computes in average the second most Metropolis-Hastings iterations per second.

Appendix B: Statistics

In this section, we explain the procedures we used to obtain warm-up intervals, the statistical formulas we used to obtain the confidence intervals on measurements, and the extrapolation procedure.

2.1 B.1 Warm-up Interval

The warm-up interval, or burn-in period, is the average number of Monte Carlo cycles needed to attain thermalization. To find a reliable warm-up interval for each of the models \(\mathcal{DLM}(p,p')\), one may use the standard procedure (see Fishman [21] for instance). From any H×V lattice configuration, start the Monte Carlo algorithm for n independent Markov chains and take m measures of the length L of the defect in the bulk. Each measure is to be separated from the next one by Δ Metropolis-Hastings (MH) cycles. Finally, plot the measurements, averaged on these n chains, against m: the abscissa where the average values stabilize provides a warm-up interval.

The approximate point of thermalization for \(\mathcal{DLM}(3,4)\) on the cylinder of size H=512 and V=1024 is represented in Fig. 21 by a vertical line at m=400. After that point, the average value fluctuates around \(\overline{L}=6500\) which, according to Eq. (21) with \(R=\sqrt{523\,776}\simeq723.7\), corresponds to \(d_{h}^{H\times V}=1.33\). This is to be compared with the final measurement of Table 2, \(\widehat{d_{h}}^{H\times V}=1.3323\pm0.0004\). To illustrate the reliability of this thermalization point, we plotted two datasets in Fig. 21: the dark one corresponds to a set of measures started from a configuration having the smallest possible defect length in the bulk, that is L=L min=512, while the light one corresponds to a set started from the maximal defect length L=L max=R 2=523 776.

2.2 B.2 Statistical Analysis

We give the details here of the methods used for obtaining confidence intervals on the different measures, on how the linear regressions, or fits, of the data were done, and also what processes were used to discriminate between a good and a bad linear regression. For more details on these subjects, see Draper and Smith [15].

2.2.1 B.2.1 Confidence Interval

For an experiment consisting of n independent Markov chains, e.g. n computers or processes, and a total of m measurements Q i,j per chain, where i labels the chain and j the datum, the unbiased estimator of the expected value \(\mathrm{\mathbf{E}}[Q]=\overline{Q}\) of an observable Q is given by the grand sample average

where \(\overline{Q}_{i}=\frac{1}{m}\sum_{j=1}^{m}Q_{i,j}\) is the average value of the i-th Markov chain. To obtain a confidence interval on \(\widehat{Q}\), we first need to compute the unbiased sample variance

In this work, some of the observables \(d_{S}^{H\times V}\) were measured with a small number n of Markov chains (n∼20). In these cases, it is better to replace the approximate 95 % confidence interval \(2\widehat{\sigma}/\sqrt{n}\) by the correct

where τ n−1 is the inverse Student t-distribution with n−1 degrees of freedom. For n=20 observations, the factor τ 19 of Eq. (31) equals approximately 2.093.

2.2.2 B.2.2 Linear Regression

The estimated fractal dimension of the different observables in the continuum scaling limit, as R→∞ in Eq. (21), is obtained by extrapolating the linear regression of the measurement data acquired at finite R’s. For an experiment of n 1+n 2+⋯+n ℓ ≡n total measures (sample points) of the observable, with n i the number of measures for the i-th lattice size (e.g. H i =32,H i+1=64, etc.), the most general linear model with p parameters is:

where Y i , i=1,2,…,n, are the sample points, β k , k=0,1,…,p−1, are parameters associated with the p independent variables X ik , and \(\epsilon_{i}\sim N (0,\sigma_{i}^{2} )\) is the i-th random error associated with Y i . We shall be interested in polynomial fits, for which \(X_{ij}=X_{i}^{j}\), with X i =1/lnR(H i ,V i ). Moreover, Y i corresponds to the measured fractal dimension \(d_{S}^{H_{i}\times V_{i}}\) at R(H i ,V i ). Equation (32) can be rewritten in matrix notation as

where Y and ϵ are n×1 vectors, β is a p×1 vector, and X is a n×p matrix. Note that the first column of X, related to β 0, is filled with 1’s. The fitted equation,

is such that \(\widehat{\mathbf{Y}}=\mathrm{\mathbf{E}}[\mathbf{Y}]\) and \(\boldsymbol{\widehat{\beta}}=\mathrm{\mathbf{E}}[\boldsymbol{\beta}]\) are unbiased estimators of Y and β, respectively; these are the quantities we need to evaluate.

The difficulty with the present datasets is that the observables \(d_{S}^{H_{i}\times V_{i}}\) have not been measured with the same precision, that is, their variances depend on the size of the lattice. And when  , with σ

2

V

ij

=Cov[ϵ

i

,ϵ

j

] and σ a positive constant, one cannot rely on ordinary least squares (OLS) for obtaining an unbiased linear regression, but must count instead on weighted least squares (WLS). In other words, if the sample variances σ

2

V

ii

=Var[ϵ

i

]=Var[Y

i

] in Eq. (32) cannot be considered constant throughout the dataset, one cannot use OLS. Moreover, the WLS method may be used only if V is diagonal, i.e. if the different ϵ

i

are uncorrelated. Fortunately, this is the case here as our Markov chains are independent.

, with σ

2

V

ij

=Cov[ϵ

i

,ϵ

j

] and σ a positive constant, one cannot rely on ordinary least squares (OLS) for obtaining an unbiased linear regression, but must count instead on weighted least squares (WLS). In other words, if the sample variances σ

2

V

ii

=Var[ϵ

i

]=Var[Y

i

] in Eq. (32) cannot be considered constant throughout the dataset, one cannot use OLS. Moreover, the WLS method may be used only if V is diagonal, i.e. if the different ϵ

i

are uncorrelated. Fortunately, this is the case here as our Markov chains are independent.

The idea behind WLS is to multiply both sides of Eq. (33) by the constant n×n matrix P=V

−1/2, in such a way that the variance of the transformed error P

ϵ becomes constant amongst the dataset; indeed,  since P is symmetric. In practice, one may set σ

2=1 since this constant is the desired variance of the transformed variable P

Y, in which case \(V=\mathrm{diag} \{\widehat{\sigma_{1}}^{2},\widehat{\sigma_{2}}^{2},\ldots ,\widehat{\sigma_{n}}^{2} \}\). The expressions for \(\boldsymbol{\widehat {\beta}}\), \(\mathrm{\mathbf{Var}} [\boldsymbol{\widehat{\beta}} ]\) and \(\mathrm{\mathbf{Var}} [\widehat{\mathbf{Y}} ]\) in the WLS method are obtained by replacing X with PX and Y with P

Y in the usual OLS expressions. The WLS expressions are then

since P is symmetric. In practice, one may set σ

2=1 since this constant is the desired variance of the transformed variable P

Y, in which case \(V=\mathrm{diag} \{\widehat{\sigma_{1}}^{2},\widehat{\sigma_{2}}^{2},\ldots ,\widehat{\sigma_{n}}^{2} \}\). The expressions for \(\boldsymbol{\widehat {\beta}}\), \(\mathrm{\mathbf{Var}} [\boldsymbol{\widehat{\beta}} ]\) and \(\mathrm{\mathbf{Var}} [\widehat{\mathbf{Y}} ]\) in the WLS method are obtained by replacing X with PX and Y with P

Y in the usual OLS expressions. The WLS expressions are then

Since we are interested in polynomial models, the last expression of (35) may be rewritten to yield \(\mathrm{\mathbf{Var}} [\widehat{\mathbf{Y}}]\) as a function of x:

where \(\widehat{Y}_{x}=\mathbf{x}^{\intercal}\widehat{\boldsymbol{\beta}}\) is the linear regression. Using (36), the 95 % confidence limits of \(\widehat{Y}_{x}\) as a function of x is

with τ n−p (0.95) as in (31).

In Fig. 22, the results of fitting the model \(Y_{i}=\beta_{0}+\beta_{1}X_{i}+\beta_{3}X_{i}^{3}\) to the dataset of the observable d h (Eq. (16)) for \(\mathcal{DLM}(3,4)\) on the V/H=2 cylinder are shown. The average of d h for each run at each size H×V is shown by a small dot. The bottom and top curves are the confidence limits obtained by (37), and the central curve corresponds to the fit \(\widehat{Y}_{x}\). The 95 % confidence interval at 1/lnR=0 is [1.3744,1.3770], thus \(\widehat{d_{h}}=1.3757\pm0.0013\) (cf. Table 3).

2.2.3 B.2.3 Model Testing

Once a linear regression has been obtained, it is necessary to attest its validity and quality. To do this, the popular Pearson correlation coefficient \(\sum_{i=1}^{n} (\widehat{Y}_{i}-\overline{Y} )^{2} /\sum_{i=1}^{n} (Y_{i}-\overline{Y} )^{2}\) can be of help, but alone may lead to biased results, as discussed in Sect. 11.2 of Draper and Smith [15], especially if an extrapolation from the fit is intended. To complement the correlation coefficient, one may use the F-test that compares different models of linear regression for Y. To do so, we must first fix the full model that contains all the β parameters that are sensible to use. For instance, our linear regressions used a polynomial model of order 3. Higher order polynomials would have required data on more lattice sizes. The full model is thus

Different models are then compared with the full model. The F-test is used to verify linear hypotheses. For example, we may want to compare the quality of the fit obtained, say for a cubic model like Eq. (38) but with β 2=0, or an even model where β 1=β 3=0. We might also want to test a hypothesis of the form β 0+2β 1=4 and β 0+β 1+β 3=−1, where there are now two relations to be satisfied at the same time. More generally, a linear hypothesis can be written as

where R is an m×p matrix providing m linear relations amongst the β’s, where q of these restrictions are linearly independent. The m×1 vector r contains the constants of the m relations.

To verify such a hypothesis, both the estimator \(\widehat{\boldsymbol {\beta_{r}}}\) of the restricted model, Y=X β r+ϵ,

and the estimator \(\widehat{\boldsymbol{\beta}}\) of the full model are computed. Then the residual sum of squares \(\mathrm{SSE} (\widehat{\boldsymbol {\beta_{r}}} )\) and \(\mathrm{SSE} (\widehat{\boldsymbol{\beta}} )\) for both models are obtained. Their estimators are defined by

and similarly for \(\widehat{\boldsymbol{\beta_{r}}}\). Finally, the F-test for the hypothesis (39) consists in comparing the ratio

with the value z for which \(\int_{0}^{z}F(q,n-p,x)\,\mathrm {d}x=\lambda\), where λ∈[0,1] (we chose λ=0.95 in this work) and the F-distribution is

with B(x,y) the Beta function. Now, if \(f_{H_{0}}\leq z\) then, with probability λ, the dataset does not provide sufficient proof that H 0 has to be rejected. On the other hand, if \(f_{H_{0}}>z\), then the dataset shows that the hypothesis H 0 is implausible and should be rejected. Different scores coming from different hypotheses concerning the same full model may be compared with each other, the lowest score being the most plausible. We use this test to check whether one or more of the coefficients β i could be set to zero. When this is a possibility, we note that the estimated fractal dimension obtained from the restricted model is, most of the time, closer to the theoretical value than that of the full one, and its confidence interval is also smaller. For the dataset of the \(d_{h}^{H\times V}\) of \(\mathcal{DLM}(3,4)\), the full cubic model of (38) gives \(\widehat{d_{h}}=1.377\pm0.007\), while it is 1.3757±0.0013 for the restricted model having the lowest score, that is the one with β 2=0.

Appendix C: Logarithmic Minimal Models

Here the lattice models used by Pearce, Rasmussen, and Zuber [30] to define the logarithmic minimal models \(\mathcal{LM}(p,p')\) are recalled. They were used in [33] for simulations of the dense models. The underlying loop gas is based on two elementary faces, illustrated in Fig. 23. At the isotropic point, the two faces are given an equal weight ρ 1=ρ 2 which can be fixed to 1. The partition function of the loop gas is then

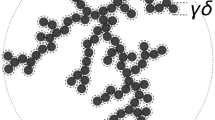

where \(\mathcal{L}\) is the set of all possible loop configurations, β the loop fugacity, and N the number of loops in a given configuration. These models do not correspond to a particular solution of the \(\mathcal{DLM}\) models ((6) and (7)) as no value of λ∈[−π/2,π/2] in any of the critical regimes found in Blöte and Nienhuis [7] yields u=v=0, w≠0 and no empty face. The simulations in [33] were done on a cylinder. The boundary conditions consisted in half-circles added at the extremities of the cylinder, as shown in Fig. 24. Note that, with these boundary conditions, there are \(|\mathcal{L}|=2^{H\times V}\) possible configurations.

It is these lattice models whose continuum scaling limit was given the name of logarithmic minimal models \(\mathcal{LM}(p,p')\). Their loop fugacity is given by \(\beta=-2\cos (\frac{\pi}{\bar{\kappa}} )\) with \(\bar{\kappa }=p'/p\) and they are believed to be described by logarithmic CFTs whose central charge and conformal weights are given by (9) and (10). For appropriate boundary conditions on a strip, the logarithmic minimal model \(\mathcal{LM}(2,3)\) with β=1 is equivalent to bond percolation on the square lattice.

Rights and permissions

About this article

Cite this article

Provencher, G., Saint-Aubin, Y., Pearce, P.A. et al. Geometric Exponents of Dilute Loop Models. J Stat Phys 147, 315–350 (2012). https://doi.org/10.1007/s10955-012-0464-3

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10955-012-0464-3