Abstract

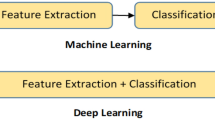

In the machine learning literature, deep learning methods have advanced by giving due importance to both data representation and classification. The recently developed multilayered arc-cosine kernel makes it possible to extend deep learning features to kernel machines. Although this kernel has been widely used with support vector machines (SVMs) on small datasets, it does not appear to be a feasible solution for modern real-world applications that involve very large datasets. The scalability of deep kernel machines on large datasets therefore still needs to be evaluated in many settings. The core vector machine (CVM) is an established mechanism for scaling up traditional SVMs. In a CVM, the quadratic programming problem of the SVM is reformulated as an equivalent minimum enclosing ball problem, which is then solved on a subset of the training samples (the core set) obtained by a fast \((1+\epsilon )\)-approximation algorithm. This paper explores the use of core vector machine principles as a scaling-up mechanism for deep support vector machines with the arc-cosine kernel. Experiments on different datasets show that the proposed system gives high classification accuracy with reasonable training time compared to traditional core vector machines, deep support vector machines with the arc-cosine kernel, and deep convolutional neural networks.
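To make the minimum enclosing ball idea concrete, the following is a minimal sketch of a \((1+\epsilon )\)-approximation based on a simple farthest-point update from the core-set literature. It is an illustration under stated assumptions, not the procedure used in this paper: it works on plain Euclidean points rather than in a kernel-induced feature space, and the names `approx_meb`, `points`, and `eps` are hypothetical.

```python
import math


def approx_meb(points, eps):
    """Approximate the minimum enclosing ball of Euclidean points.

    Assumption: simple farthest-point update (not the kernel-space CVM solver).
    Returns (center, radius, core_set_indices).
    """
    if not points:
        raise ValueError("points must be non-empty")
    if eps <= 0:
        raise ValueError("eps must be positive")

    center = list(points[0])          # start from an arbitrary point
    core_set = {0}                    # indices of points that shaped the center
    iterations = math.ceil(1.0 / eps ** 2)

    for i in range(1, iterations + 1):
        # Find the point farthest from the current center.
        far_idx = max(range(len(points)),
                      key=lambda j: math.dist(center, points[j]))
        core_set.add(far_idx)
        # Move the center a shrinking step toward that farthest point.
        step = 1.0 / (i + 1)
        center = [c + step * (p - c)
                  for c, p in zip(center, points[far_idx])]

    # The radius is chosen so that the ball covers every input point.
    radius = max(math.dist(center, p) for p in points)
    return center, radius, sorted(core_set)


if __name__ == "__main__":
    square = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
    center, radius, core = approx_meb(square, eps=0.1)
    print(center, radius, core)
```

The number of iterations depends only on `eps`, not on the number of points, which is the property that makes core-set methods attractive for large datasets.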

Notes

DSVM: deep support vector machine, i.e., a support vector machine with the arc-cosine kernel.
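For readers unfamiliar with the arc-cosine kernel, the sketch below evaluates it for degrees 0, 1, and 2 and composes it over several layers, following the closed forms given by Cho and Saul (2009). It is a hedged illustration rather than the implementation used in this paper; the function names `angular_term` and `arc_cosine_kernel` and the `degrees` parameter are assumptions introduced here, and inputs are assumed to be non-zero vectors.

```python
import math


def angular_term(theta, degree):
    """Angular dependence J_n(theta) of the arc-cosine kernel, n in {0, 1, 2}."""
    if degree == 0:
        return math.pi - theta
    if degree == 1:
        return math.sin(theta) + (math.pi - theta) * math.cos(theta)
    if degree == 2:
        return (3.0 * math.sin(theta) * math.cos(theta)
                + (math.pi - theta) * (1.0 + 2.0 * math.cos(theta) ** 2))
    raise ValueError("only degrees 0, 1 and 2 are sketched here")


def arc_cosine_kernel(x, y, degrees=(1,)):
    """Multilayer arc-cosine kernel; `degrees` lists one degree per layer."""
    # Layer 0 is the ordinary dot product.
    kxx = sum(a * a for a in x)
    kyy = sum(b * b for b in y)
    kxy = sum(a * b for a, b in zip(x, y))
    if kxx == 0.0 or kyy == 0.0:
        raise ValueError("zero vectors are not handled in this sketch")

    for n in degrees:
        norm = math.sqrt(kxx * kyy)
        # Clamp to [-1, 1] to guard against floating-point drift.
        cos_theta = max(-1.0, min(1.0, kxy / norm))
        theta = math.acos(cos_theta)
        # Cross term uses the angle between the two inputs at this layer.
        kxy = (norm ** n) * angular_term(theta, n) / math.pi
        # Self terms use theta = 0.
        kxx = (kxx ** n) * angular_term(0.0, n) / math.pi
        kyy = (kyy ** n) * angular_term(0.0, n) / math.pi
    return kxy


if __name__ == "__main__":
    print(arc_cosine_kernel((1.0, 0.0), (0.0, 1.0), degrees=(1,)))
    print(arc_cosine_kernel((1.0, 2.0), (2.0, 1.0), degrees=(1, 1, 1)))
```

A function like this could be used to precompute a kernel matrix for any SVM solver that accepts precomputed kernels; each entry of `degrees` adds one layer to the composition.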

Solving the quadratic problem and choosing the support vectors has complexity on the order of \(n^2\), where \(n\) is the number of training samples, while solving the quadratic problem directly involves inverting the kernel matrix, which has complexity on the order of \(n^3\).

\(\left\langle a,b \right\rangle\) represents the dot product between the vectors \(a\) and \(b\).

When a kernel depends only on the norm of the lag vector between two examples, and not on its direction, the kernel is said to be isotropic: \(k(x,z)=k_l(\left\| x-z \right\| )\).
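As a small illustration of this definition, the sketch below compares a Gaussian kernel, which depends only on \(\left\| x-z \right\|\), with a linear kernel, which does not. The Gaussian kernel is chosen here only as a familiar example of an isotropic kernel; the function names and the `sigma` parameter are assumptions made for this sketch.

```python
import math


def gaussian_kernel(x, z, sigma=1.0):
    """Isotropic: the value depends only on the distance between x and z."""
    r = math.dist(x, z)
    return math.exp(-(r * r) / (2.0 * sigma * sigma))


def linear_kernel(x, z):
    """Not isotropic: the value depends on where x and z lie, not only on x - z."""
    return sum(a * b for a, b in zip(x, z))


if __name__ == "__main__":
    x = (1.0, 2.0)
    z_right = (2.0, 2.0)   # lag vector (1, 0), norm 1
    z_up = (1.0, 3.0)      # lag vector (0, 1), norm 1

    # Same lag norm, different direction: the Gaussian values agree.
    print(gaussian_kernel(x, z_right), gaussian_kernel(x, z_up))
    # The linear kernel gives different values for the same two pairs.
    print(linear_kernel(x, z_right), linear_kernel(x, z_up))
```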

\(\delta _{ij}={\left\{ \begin{array}{ll}1 & \, {\rm if }\, i=j \\ 0 & \, { \rm if }\, i\ne j \end{array}\right. }\)

Optdigit was taken from the UCI machine learning repository, and all other datasets were taken from libSVM.

Available at: https://www.csie.ntu.edu.tw/cjlin/libsvm/oldfiles/.

Cite this article

Afzal, A.L., Asharaf, S. Deep kernel learning in core vector machines. Pattern Anal Applic 21, 721–729 (2018). https://doi.org/10.1007/s10044-017-0600-4

DOI: https://doi.org/10.1007/s10044-017-0600-4