Abstract

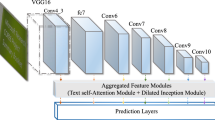

Saliency text consists of characters arranged to be visible and expressive, and it carries important clues for video analysis, indexing, and retrieval. A critical stage in localizing saliency text is therefore to collect key points from real text pixels. In this paper, we propose an evidence-based model of saliency feature extraction (SFE) to probe saliency text points (STPs), which exhibit a strong text signal structure in multiple observations simultaneously and always appear between text and its background. Through these multiple observations, a signal structure characterized by the rhythms of its signal segments is extracted at every location in the visual field. These structures serve as the sources of evidence for our model, in which the evidence is measured to estimate the degree of plausibility that a location is an STP. Evaluation results on benchmark datasets demonstrate that the proposed approach achieves state-of-the-art performance in finding real text pixels and significantly outperforms existing algorithms for detecting text candidates. STPs can thus serve as highly reliable text candidates for future text detectors.

About this article

Cite this article

Chen, YL., Yu, PT. An evidence-based model of saliency feature extraction for scene text analysis. IJDAR 19, 269–287 (2016). https://doi.org/10.1007/s10032-016-0270-6

DOI: https://doi.org/10.1007/s10032-016-0270-6