Abstract

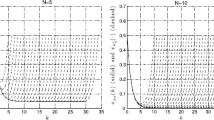

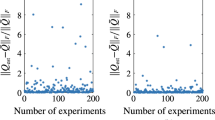

Receding horizon control is a widely used method in industrial control engineering as well as an extensively studied subject in control theory. In this work, we consider a lag L receding horizon strategy: on each receding horizon, it solves a quadratic program and applies only the first L optimal controls. We investigate a discrete-time, time-varying linear-quadratic optimal control problem that includes a nonzero reference trajectory and constraints on both state and control. We prove that, under boundedness and controllability conditions, the solution obtained by the receding horizon strategy converges to the solution of the full-horizon problem exponentially fast in the length of the receding horizon, for some lag L. The exponential rate of convergence provides a systematic way of choosing the receding horizon length for a desired accuracy level. We illustrate our theoretical findings using a small, synthetic production cost model with real demand data.
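The lag L strategy can be illustrated with a short Python sketch. This is a minimal illustration under simplifying assumptions, not the authors' implementation: it drops the state and control constraints, so each window subproblem is an unconstrained LQ tracking problem solved in closed form by condensing. The test system, the reference trajectory, and the function names (`solve_lq`, `lag_L_rhc`, `horizon_for_accuracy`) are hypothetical. The sketch compares the receding horizon controls with the full-horizon optimum for several window lengths M.

```python
import math

import numpy as np


def solve_lq(As, Bs, Q, R, x0, refs):
    """Unconstrained LQ tracking over len(As) steps, solved by condensing.

    Minimizes sum_{k=1}^{N} (x_k - r_k)^T Q (x_k - r_k) + sum_{k=0}^{N-1} u_k^T R u_k
    subject to x_{k+1} = A_k x_k + B_k u_k; refs[k] is r_k (refs[0] is unused).
    Returns the optimal controls as an (N, m) array.
    """
    N = len(As)
    n, m = Bs[0].shape
    Fx, Gu = np.eye(n), np.zeros((n, N * m))  # x_k = Fx @ x0 + Gu @ u
    H = np.kron(np.eye(N), R)                 # Hessian block from the u^T R u terms
    g = np.zeros(N * m)
    for k in range(N):
        Fx = As[k] @ Fx
        Gu = As[k] @ Gu
        Gu[:, k * m:(k + 1) * m] += Bs[k]
        H += Gu.T @ Q @ Gu                    # tracking term for x_{k+1}
        g += Gu.T @ Q @ (Fx @ x0 - refs[k + 1])
    return np.linalg.solve(H, -g).reshape(N, m)


def lag_L_rhc(As, Bs, Q, R, x0, refs, M, L):
    """Lag-L receding horizon: solve on [s, s+M], apply the first L controls, shift by L."""
    assert 1 <= L <= M
    N = len(As)
    x, U, s = x0.copy(), [], 0
    while s < N:
        e = min(s + M, N)                     # window is truncated at the final time N
        u_win = solve_lq(As[s:e], Bs[s:e], Q, R, x, refs[s:e + 1])
        for j in range(min(L, N - s)):
            U.append(u_win[j])
            x = As[s + j] @ x + Bs[s + j] @ u_win[j]
        s += L
    return np.array(U)


def horizon_for_accuracy(C, rho, eps):
    """Smallest M with C * rho**M <= eps, for an assumed bound of that form (0 < rho < 1)."""
    return max(0, math.ceil(math.log(eps / C) / math.log(rho)))


# Hypothetical time-varying test system: a double integrator with a varying input gain.
N, dt = 60, 0.5
As = [np.array([[1.0, dt], [0.0, 1.0]]) for _ in range(N)]
Bs = [np.array([[0.0], [dt * (1.0 + 0.5 * np.sin(0.3 * k))]]) for k in range(N)]
Q, R = np.eye(2), np.array([[0.1]])
refs = np.array([[np.sin(0.1 * k), 0.0] for k in range(N + 1)])
x0 = np.array([1.0, 0.0])

U_full = solve_lq(As, Bs, Q, R, x0, refs)
errors = {M: np.max(np.abs(lag_L_rhc(As, Bs, Q, R, x0, refs, M, L=2) - U_full))
          for M in (4, 8, 16, 24, N)}
for M, err in errors.items():
    print(f"M = {M:2d}: max |u_rhc - u_full| = {err:.2e}")
```

When the window covers the whole remaining horizon (M = N), the principle of optimality makes the receding horizon controls coincide with the full-horizon ones up to round-off. If an error bound of the form C ρ^M is available (C and ρ < 1 are placeholders here, not constants from the paper), `horizon_for_accuracy` turns a target accuracy into a window length; for example, C = 1, ρ = 0.5, and ε = 10⁻³ give M = 10.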

References

[1] Bellingham, J., Richards, A., How, J.P.: Receding horizon control of autonomous aerial vehicles. In: Proceedings of the 2002 American Control Conference, vol. 5, pp. 3741–3746. IEEE (2002)

[2] Bertsekas, D.P.: Dynamic Programming and Optimal Control, vol. 1. Athena Scientific, Belmont (1995)

[3] Bhattacharya, R., Balas, G.J., Kaya, A., Packard, A.: Nonlinear receding horizon control of F-16 aircraft. In: Proceedings of the 2001 American Control Conference, vol. 1, pp. 518–522. IEEE (2001)

[4] Biegler, L.T., Zavala, V.M.: Large-scale nonlinear programming using IPOPT: an integrating framework for enterprise-wide dynamic optimization. Comput. Chem. Eng. 33, 575–582 (2009)

[5] Boccia, A., Grüne, L., Worthmann, K.: Stability and feasibility of state constrained MPC without stabilizing terminal constraints. Syst. Control Lett. 72, 14–21 (2014)

[6] Bonnans, J.F., Shapiro, A.: Perturbation Analysis of Optimization Problems. Springer, New York (2013)

[7] Dunbar, W.B., Caveney, D.S.: Distributed receding horizon control of vehicle platoons: stability and string stability. IEEE Trans. Autom. Control 57, 620–633 (2012)

[8] Franz, R., Milam, M., Hauser, J.: Applied receding horizon control of the Caltech Ducted Fan. In: Proceedings of the 2002 American Control Conference, vol. 5, pp. 3735–3740. IEEE (2002)

[9] Grüne, L.: Analysis and design of unconstrained nonlinear MPC schemes for finite and infinite dimensional systems. SIAM J. Control Optim. 48, 1206–1228 (2009)

[10] Grüne, L., Pannek, J.: Nonlinear Model Predictive Control: Theory and Algorithms. Communications and Control Engineering. Springer, London (2011)

[11] Grüne, L., Pannek, J., Seehafer, M., Worthmann, K.: Analysis of unconstrained nonlinear MPC schemes with time varying control horizon. SIAM J. Control Optim. 48, 4938–4962 (2010)

[12] PJM Interconnection: Estimated hourly load data. http://www.pjm.com/markets-and-operations/energy/real-time/loadhryr.aspx. Accessed 2017-07-23

[13] Jadbabaie, A., Hauser, J.: On the stability of receding horizon control with a general terminal cost. IEEE Trans. Autom. Control 50, 674–678 (2005)

[14] Jadbabaie, A., Yu, J., Hauser, J.: Unconstrained receding-horizon control of nonlinear systems. IEEE Trans. Autom. Control 46, 776–783 (2001)

[15] Keerthi, S.S., Gilbert, E.G.: Optimal infinite-horizon feedback laws for a general class of constrained discrete-time systems: stability and moving-horizon approximations. J. Optim. Theory Appl. 57, 265–293 (1988)

[16] Kwon, W., Pearson, A.: A modified quadratic cost problem and feedback stabilization of a linear system. IEEE Trans. Autom. Control 22, 838–842 (1977)

[17] Kwon, W.H., Bruckstein, A.M., Kailath, T.: Stabilizing state-feedback design via the moving horizon method. Int. J. Control 37, 631–643 (1983)

[18] Kwon, W.H., Han, S.H.: Receding Horizon Control: Model Predictive Control for State Models. Springer, London (2006)

[19] Kwon, W.H., Lee, Y.S., Han, S.H.: General receding horizon control for linear time-delay systems. Automatica 40, 1603–1611 (2004)

[20] Lubin, M., Dunning, I.: Computing in operations research using Julia. INFORMS J. Comput. 27, 238–248 (2015)

[21] Mayne, D.Q., Michalska, H.: Receding horizon control of nonlinear systems. IEEE Trans. Autom. Control 35, 814–824 (1990)

[22] Mayne, D.Q., Rawlings, J.B., Rao, C.V., Scokaert, P.O.M.: Constrained model predictive control: stability and optimality. Automatica 36, 789–814 (2000)

[23] Nocedal, J., Wright, S.: Numerical Optimization. Springer-Verlag, New York (2006)

[24] Primbs, J.A., Nevistić, V.: Feasibility and stability of constrained finite receding horizon control. Automatica 36, 965–971 (2000)

[25] Rawlings, J.B., Muske, K.R.: The stability of constrained receding horizon control. IEEE Trans. Autom. Control 38, 1512–1516 (1993)

[26] Reble, M., Allgöwer, F.: Unconstrained model predictive control and suboptimality estimates for nonlinear continuous-time systems. Automatica 48, 1812–1817 (2012)

[27] Richalet, J., Rault, A., Testud, J.L., Papon, J.: Model predictive heuristic control: applications to industrial processes. Automatica 14, 413–428 (1978)

[28] Sethi, S., Sorger, G.: A theory of rolling horizon decision making. Ann. Oper. Res. 29, 387–415 (1991)

[29] Sideris, A., Rodriguez, L.A.: A Riccati approach to equality constrained linear quadratic optimal control. In: Proceedings of the 2010 American Control Conference, pp. 5167–5172 (2010)

[30] Xu, W., Anitescu, M.: Exponentially accurate temporal decomposition for long-horizon linear-quadratic dynamic optimization. SIAM J. Optim. 28, 2541–2573 (2018)

[31] Zhang, W., Hu, J., Abate, A.: On the value functions of the discrete-time switched LQR problem. IEEE Trans. Autom. Control 54, 2669–2674 (2009)

Acknowledgements

We thank Prof. V. Zavala for pointing us to references about stability issues in rolling horizon control. We thank the anonymous referee of [30], who suggested that we look into receding horizon control, as well as the referee of this paper for important comments about our assumptions and scope relative to [11]. This material is based upon work supported by the U.S. Department of Energy, Office of Science, under Contract DE-AC02-06CH11357. M. A. acknowledges partial NSF funding through awards FP061151-01-PR and CNS-1545046.

Additional information

Also available as preprint ANL/MCS-P9015-1017.

Appendix A: Proofs of Results in Sections 2 and 3

A.1 Proof of Proposition 2

For any \(x_{q}\in \mathbb {R}^{n}\), consider the standard linear-quadratic problem:

For k ≥ q, successively applying (44b) gives, for j ≥ 0,

and for j = t, (45) reduces to

Since the index set is UCC(\(\lambda_C\)), \(C_{q,t}\) is uniformly completely controllable; in particular, \(C_{q,t}\) has full row rank. Therefore, there exists \(\hat {u}=(\hat {u}_{q}^{T},\dots ,\hat {u}_{q+t-1}^{T})^{T}\) such that

Several \(\hat {u}\) satisfy this relationship; we consider the one defined by

Denote the corresponding states generated with \(\hat {u}_{q:q+t-1}\) as \(\hat {x}_{q:q+t}\). Then \(\hat {x}_{q+t}=\mathbf {0}\) by (46).

Lemma 1 implies that

Then from Definition 6 and Lemma 1, we have

From (45), we have, for 1 ≤ j ≤ t − 1,

Now let \(\hat {u}_{k}=\mathbf {0}\) for \(k\geq q+t\). Then \(\hat {x}_{k}=\mathbf {0}\) for \(k\geq q+t\). Moreover, since the problem considered in this proof is a standard linear-quadratic regulator problem, its optimal value is \({x_{q}^{T}}K_{q}x_{q}\) [2]. As a result, we have the following.

Letting

completes the proof. Note that β depends only on the quantities in Assumption 1, Definitions 1 and 6, and Lemma 1, which are independent of \(n_1\), \(n_2\), and the particular choice of \(\mathcal {I}\), provided that \(\mathcal {I}\) is UDB(\(\lambda_H\)) and UCC(\(\lambda_C\)).
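The argument above bounds the optimal LQR value \(x_q^TK_qx_q\) by the cost of one explicit feasible control: a control that drives the state to zero in t steps and is zero afterwards. The following numerical sketch mirrors that argument under stated assumptions. It assumes the chosen \(\hat u\) is the minimum-norm solution of \(C_{q,t}\hat u=-\Phi x_q\), with \(\Phi\) the state transition matrix from q to q+t; it uses a finite horizon with terminal weight Q and constant Q and R; and the helper names and test system are hypothetical. It is an illustration, not the paper's construction.

```python
import numpy as np


def riccati_value(As, Bs, Q, R):
    """K_0 for the finite-horizon LQR with stage cost x^T Q x + u^T R u and terminal x_N^T Q x_N."""
    K = Q.copy()
    for A, B in zip(reversed(As), reversed(Bs)):
        W = R + B.T @ K @ B
        K = Q + A.T @ K @ A - A.T @ K @ B @ np.linalg.solve(W, B.T @ K @ A)
    return K


def deadbeat_min_norm(As, Bs, xq, t):
    """Minimum-norm u_0..u_{t-1} driving xq to 0 in t steps (assumes C has full row rank)."""
    n, m = Bs[0].shape
    Phi, C = np.eye(n), np.zeros((n, t * m))
    for k in range(t):
        Phi = As[k] @ Phi                   # Phi = A_k ... A_0
        C = As[k] @ C                       # propagate the columns of earlier controls
        C[:, k * m:(k + 1) * m] = Bs[k]     # the newest control enters through B_k
    u = -C.T @ np.linalg.solve(C @ C.T, Phi @ xq)   # so that C u = -Phi xq
    return u.reshape(t, m)


def lq_cost(As, Bs, Q, R, x0, U):
    """Cost of the control sequence U (one row per step) starting at x0; also returns x_N."""
    x, J = x0.copy(), 0.0
    for A, B, u in zip(As, Bs, U):
        J += x @ Q @ x + u @ R @ u
        x = A @ x + B @ u
    return J + x @ Q @ x, x


# Hypothetical time-varying system: a perturbed double integrator.
rng = np.random.default_rng(0)
N, t, n, m = 20, 3, 2, 1
As = [np.array([[1.0, 0.5], [0.0, 1.0]]) + 0.1 * rng.standard_normal((n, n)) for _ in range(N)]
Bs = [np.array([[0.0], [1.0]]) + 0.1 * rng.standard_normal((n, m)) for _ in range(N)]
Q, R = np.eye(n), np.array([[0.5]])
xq = np.array([1.0, -1.0])

U_hat = np.zeros((N, m))
U_hat[:t] = deadbeat_min_norm(As, Bs, xq, t)   # u_hat_k = 0 for k >= t
J_hat, x_end = lq_cost(As, Bs, Q, R, xq, U_hat)
K0 = riccati_value(As, Bs, Q, R)
print(np.linalg.norm(x_end), xq @ K0 @ xq <= J_hat)
```

Because the optimal value can never exceed the cost of a feasible control, any bound on \(J(\hat u)\) in terms of \(\|x_q\|^2\) carries over to \(K_q\).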

A.2 Proof of Proposition 3

Define \(L_{k}=-W_{k}^{-1}\hat {B}_{k}^{T}K_{k+1}\hat {A}_{k}\). Then from Lemma 5 and (5d) we have \(D_{k}=\hat {A}_{k}+\hat {B}_{k}L_{k}\). In [2] the recursion (15b) is shown to be equivalent to

For \(q\leq j\leq n_2-1\), define \(x_{j+1}=D_jx_j\). Then (49) and Proposition 2 imply that

Here we used the bounds from Lemma 1 and the fact that \({x_{j}^{T}}K_{j}x_{j} \geq x_{j+1}^{T}K_{j+1}x_{j+1}\), which follows from (49) and the positive definiteness of \(\hat {Q}_{k}\) and \(R_k\). We also have

As a result, for \(n_2-1\geq j\geq q\), we have the following:

where the third inequality is obtained by repeatedly applying (50).
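The proof above rests on two facts: the Riccati recursion can be written in closed-loop form, and \(x_j^TK_jx_j\) can only decrease along \(x_{j+1}=D_jx_j\). A small numerical check follows. It assumes the usual LQR definition \(W_k=R_k+\hat B_k^TK_{k+1}\hat B_k\) and the standard closed-loop identity \(K_k=\hat Q_k+L_k^TR_kL_k+D_k^TK_{k+1}D_k\); whether these match Lemma 5 and (49) exactly is our assumption. The random system and all names are hypothetical.

```python
import numpy as np


def riccati_closed_loop(As, Bs, Qs, Rs, K_end):
    """Backward Riccati recursion returning K_k, gains L_k, and D_k = A_k + B_k L_k.

    Assumes W_k = R_k + B_k^T K_{k+1} B_k and L_k = -W_k^{-1} B_k^T K_{k+1} A_k.
    """
    N = len(As)
    K = [None] * N + [K_end]
    Lg, D = [None] * N, [None] * N
    for k in range(N - 1, -1, -1):
        A, B, Kn = As[k], Bs[k], K[k + 1]
        W = Rs[k] + B.T @ Kn @ B
        Lg[k] = -np.linalg.solve(W, B.T @ Kn @ A)
        D[k] = A + B @ Lg[k]
        K[k] = Qs[k] + A.T @ Kn @ A + A.T @ Kn @ B @ Lg[k]  # usual Riccati update
    return K, Lg, D


rng = np.random.default_rng(1)
N, n, m = 40, 3, 2
As = [np.eye(n) + 0.3 * rng.standard_normal((n, n)) for _ in range(N)]
Bs = [rng.standard_normal((n, m)) for _ in range(N)]
Qs = [np.eye(n)] * N
Rs = [0.5 * np.eye(m)] * N
K, Lg, D = riccati_closed_loop(As, Bs, Qs, Rs, np.eye(n))

# Same recursion in closed-loop form: K_k = Q_k + L_k^T R_k L_k + D_k^T K_{k+1} D_k.
gap = max(np.max(np.abs(K[k] - (Qs[k] + Lg[k].T @ Rs[k] @ Lg[k] + D[k].T @ K[k + 1] @ D[k])))
          for k in range(N))

# Along x_{k+1} = D_k x_k, the value x_k^T K_k x_k decreases by x_k^T (Q_k + L_k^T R_k L_k) x_k.
x = rng.standard_normal(n)
values = []
for k in range(N):
    values.append(x @ K[k] @ x)
    x = D[k] @ x
print(f"max gap = {gap:.1e}")
```

The decrease per step is at least \(x_j^T\hat Q_jx_j\), which is what turns the upper bound on \(K_j\) from Proposition 2 into a geometric contraction of the closed-loop state.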

A.3 Proof of Lemma 9

Let

be the Lagrangian of problem (31). Then we have

Since G and F are positive definite and LICQ holds at \(y_0\), it follows from [6, Theorem 5.53] and [6, Remark 5.55] that

where S is the solution of the following linearized problem,

and S is given by

Thus the directional derivative \(D_{p}y(\theta_0)\) of \(y(\theta)\) along direction p at \(\theta_0\) is the solution of the problem

Let \(I_1\) be the set of active inequality constraints of problem (52). Then \(I_{1}\subset I_{0}(y_{0},\theta _{0},\bar {\lambda })\); let \(I^{\prime }(\theta _{0})=I_{1}\cup I_{+}(y_{0},\theta _{0},\bar {\lambda })\). The KKT conditions of problem (52) are therefore

for some Lagrange multipliers \(\phi _{1}^{\ast }\) and \(\phi _{2}^{\ast }\). Since LICQ holds at \(y_0\), the rows of \(A_{I^{\prime }(\theta _{0})}\) and B are linearly independent. Together with the positive definiteness of G, this implies that \(\widetilde {G}\) is invertible. Denote the first block row of \(\widetilde {G}^{-1}\) by \(\left [\begin {array}{lll} p_{11} & p_{12} & p_{13} \end {array}\right ]\). Then we have

On the other hand, for problem (32) with \(I^{\prime }(\theta _{0})\) constructed above, the KKT condition is

for some Lagrange multipliers \(\psi _{1}^{\ast }\) and \(\psi _{2}^{\ast }\). Since \(\widetilde {G}\) is invertible, we have \(y_{I^{\prime }(\theta _{0})}^{\ast }(\theta )=-p_{11}c(\theta )+p_{12}r^{\prime }+p_{13}d(\theta )\). It follows that

As a result, we have

which proves the claim.
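The final step of the proof expresses the solution through the first block row of \(\widetilde G^{-1}\). A minimal sketch of that computation follows. It assumes that problem (32) has the form \(\min \tfrac12 y^TGy+c(\theta)^Ty\) subject to \(A_{I'(\theta_0)}y=r'\) and \(By=d(\theta)\), with the KKT system \(\widetilde G\,[y;\psi_1;\psi_2]=[-c;r';d]\); this sign convention is chosen to reproduce \(y^\ast=-p_{11}c(\theta)+p_{12}r'+p_{13}d(\theta)\) and is our assumption. The random data, the affine forms of c and d, and the names are hypothetical.

```python
import numpy as np


def kkt_solution_map(G, A_act, B):
    """First block row [P11, P12, P13] of the inverse of the KKT matrix

        G~ = [[G, A^T, B^T], [A, 0, 0], [B, 0, 0]]

    for min 0.5 y^T G y + c^T y  s.t.  A y = r,  B y = d,
    whose KKT system is G~ [y; psi1; psi2] = [-c; r; d].
    """
    ny, na, nb = G.shape[0], A_act.shape[0], B.shape[0]
    Gt = np.zeros((ny + na + nb, ny + na + nb))
    Gt[:ny, :ny] = G
    Gt[:ny, ny:ny + na] = A_act.T
    Gt[:ny, ny + na:] = B.T
    Gt[ny:ny + na, :ny] = A_act
    Gt[ny + na:, :ny] = B
    P = np.linalg.inv(Gt)  # invertible: G positive definite and [A; B] has full row rank
    return P[:ny, :ny], P[:ny, ny:ny + na], P[:ny, ny + na:]


rng = np.random.default_rng(2)
ny, na, nb = 5, 2, 1
Mr = rng.standard_normal((ny, ny))
G = Mr @ Mr.T + ny * np.eye(ny)          # positive definite Hessian
A_act = rng.standard_normal((na, ny))    # rows of the active inequality constraints
B = rng.standard_normal((nb, ny))        # rows of the equality constraints
r = rng.standard_normal(na)
c0, c1 = rng.standard_normal(ny), rng.standard_normal(ny)  # c(theta) = c0 + theta * c1
d0, d1 = rng.standard_normal(nb), rng.standard_normal(nb)  # d(theta) = d0 + theta * d1

P11, P12, P13 = kkt_solution_map(G, A_act, B)


def y_star(theta):
    """y*(theta) = -P11 c(theta) + P12 r + P13 d(theta)."""
    return -P11 @ (c0 + theta * c1) + P12 @ r + P13 @ (d0 + theta * d1)


# y* is affine in theta here, so its derivative along theta is a constant vector.
dy = -P11 @ c1 + P13 @ d1
print(dy)
```

Since c and d are affine in θ by assumption in this sketch, the map θ ↦ y*(θ) is affine and its derivative is the constant vector `dy`.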

Cite this article

Xu, W., Anitescu, M. Exponentially Convergent Receding Horizon Strategy for Constrained Optimal Control. Vietnam J. Math. 47, 897–929 (2019). https://doi.org/10.1007/s10013-019-00375-1
