Abstract

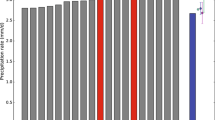

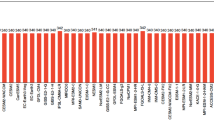

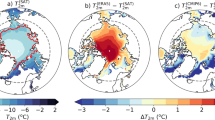

Ensembles of simulations of the twentieth- and twenty-first-century climate, performed with 20 coupled models for the Intergovernmental Panel on Climate Change (IPCC) Fourth Assessment, provide the basis for an evaluation of the Arctic (70°–90°N) surface energy budget. Although the observational sources used for validation differ among themselves, some model biases and across-model differences emerge. For all energy budget components in the twentieth-century simulations (the 20C3M simulation), the across-model variance and the differences from observational estimates are largest in the marginal ice zone (Barents, Kara and Chukchi Seas). Both downward and upward longwave radiation at the surface are underestimated in winter by many models, and the ensemble mean annual net surface energy loss by longwave radiation is 35 W/m², which is less than in the NCEP and ERA40 reanalyses but in line with some of the satellite estimates. Incoming solar radiation is overestimated by the models in spring and underestimated in summer and autumn. The ensemble mean annual net surface energy gain by shortwave radiation is 39 W/m², slightly less than in the observationally based estimates. In the twenty-first-century simulations driven by the SRES A2 scenario, increased concentrations of greenhouse gases increase the annual average ensemble mean downward longwave radiation by 30.1 W/m² (2080–2100 average minus 1980–2000 average). This is partly counteracted by a 10.7 W/m² reduction in downward shortwave radiation. Enhanced sea ice melt and increased surface temperatures increase the annual surface upward longwave radiation by 27.1 W/m² and reduce the upward shortwave radiation by 13.2 W/m², giving an annual net (shortwave plus longwave) surface radiation increase of 5.8 W/m², with the maximum changes in summer. The increase in net surface radiation is largely offset by an increased energy loss of 4.4 W/m² by the turbulent fluxes.
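The budget figures in the abstract combine through simple bookkeeping: net surface radiation is downward minus upward flux, summed over shortwave and longwave. The Python sketch below reproduces that arithmetic from the quoted ensemble-mean values. The `SurfaceRadiation` class and all variable names are illustrative, and the "positive = energy gained by the surface" sign convention is an assumption for this sketch. Summing the rounded A2 component changes gives 5.5 W/m², slightly below the 5.8 W/m² stated; the abstract does not say whether the gap comes from rounding of the components or from details of the averaging.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SurfaceRadiation:
    """Annual-mean surface radiative fluxes (or changes in them), in W/m^2.

    Each field is a flux magnitude; the sign convention "positive = energy
    gained by the surface" is applied only in the net_* methods (an assumption
    for this sketch, not necessarily the paper's convention).
    """
    sw_down: float  # incoming (downward) shortwave
    sw_up: float    # reflected (upward) shortwave
    lw_down: float  # downward longwave from the atmosphere
    lw_up: float    # upward longwave emitted by the surface

    def net_shortwave(self) -> float:
        return self.sw_down - self.sw_up

    def net_longwave(self) -> float:
        return self.lw_down - self.lw_up

    def net_radiation(self) -> float:
        return self.net_shortwave() + self.net_longwave()


# SRES A2 ensemble-mean changes quoted in the abstract
# (2080-2100 average minus 1980-2000 average); reductions are negative.
a2_change = SurfaceRadiation(sw_down=-10.7, sw_up=-13.2, lw_down=30.1, lw_up=27.1)

print(round(a2_change.net_shortwave(), 1))  # 2.5
print(round(a2_change.net_longwave(), 1))   # 3.0
print(round(a2_change.net_radiation(), 1))  # 5.5

# Turbulent (sensible plus latent) fluxes remove an extra 4.4 W/m^2,
# leaving only a small residual gain at the surface.
turbulent_change = -4.4
residual = a2_change.net_radiation() + turbulent_change
print(round(residual, 1))  # 1.1

# 20C3M ensemble means: net shortwave gain 39 W/m^2, net longwave loss 35 W/m^2
net_20c3m = 39.0 - 35.0
print(net_20c3m)  # 4.0
```

Because every relation here is linear, the same class works for absolute fluxes and for differences between period means alike.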

References

ACIA (2005) Impacts of a warming Arctic. Arctic Climate Impact Assessment (ACIA). Cambridge University Press, Cambridge, 140 pp

Arzel O, Fichefet T, Goosse H (2006) Sea ice evolution over the 20th and 21st centuries as simulated by current AOGCMs. Ocean Modell 12:401–415

Atlas Arktiki (1985) Glavnoye Upravleniye Geodezii i Kartografii, Moscow, 204 pp

Beesley JA (2000) Estimating the effect of clouds on the arctic surface energy budget. J Geophys Res 105(D8):10103–10117

Bengtsson L, Semenov V, Johannessen OM (2004) The early 20th century warming in the Arctic—a possible mechanism. J Climate 17:4045–4057

Budyko MI (1969) The effect of solar radiation variations on the climate of the earth. Tellus 21:611

Björk G, Söderkvist J (2002) Dependence of the Arctic Ocean ice thickness distribution on the poleward energy flux in the atmosphere. J Geophys Res 107(C10):3173

Byrkjedal Ø, Esau I, Kvamstø NG (2006) Sensitivity of simulated wintertime Arctic atmosphere to vertical resolution in the ARPEGE/IFS model. Climate Dynam (submitted)

Chapman W, Walsh JE (2006) Simulations of Arctic temperature and pressure by global coupled models. J Climate (in press)

Curry JA, Ebert EE (1992) Annual cycle of radiation fluxes over the Arctic ocean: sensitivity to cloud properties. J Climate 5:1267–1280

Curry JA, Ebert EE, Schramm JL (1993) Impact of clouds on the surface radiation balance of the Arctic Ocean. Meteor Atmos Phys 51:197–217

Curry JA, Serreze M, Ebert EE (1995) Water vapor feedback over the Arctic Ocean. J Geophys Res 100:14223–14229

Curry JA, Rossow WB, Randall D, Schramm JL (1996) Overview of Arctic cloud and radiation characteristics. J Climate 9:1731–1763

Dyurgerov MB, Meier MF (1997) Year to year fluctuation of global mass balance of small glaciers and their contribution to sea level changes. Arct Alp Res 29:392–402

Ebert EE, Curry JA (1993) An intermediate one-dimensional thermodynamic sea ice model for investigating ice-atmosphere interactions. J Geophys Res 98:10085–10109

Fletcher JO (1965) The heat budget of the Arctic Basin and its relation to climate. Rand Rep. R-444-PR, The Rand Corporation, Santa Monica, CA, 179 pp

Frei A, Robinson DA (1999) Northern Hemisphere snow extent: regional variability 1972–1994. Int J Climatol 19(14):1535–1560

Fu Q, Liou K, Cribb M, Charlock T, Grossman A (1997) On multiple scattering in thermal infrared radiative transfer. J Atmos Sci 54:2799–2812

Hartmann DL (1994) Global physical climatology. Academic Press, New York, 411 pp

Held IM, Suarez MJ (1974) Simple albedo feedback models of the icecaps. Tellus 26:613–628

Johannessen OM, Bengtsson L, Miles MW, Kuzmina SI, Semenov VA, Alekseev GV, Nagurnyi AP, Zakharov VF, Bobylev LP, Pettersson LH, Hasselmann K, Cattle HP (2004) Arctic climate change: observed and modelled temperature and sea-ice variability. Tellus 56A:328–341

Kalnay E, Kanamitsu M, Kistler R, Collins W, Deaven D, Gandin L, Iredell M, Saha S, White G, Woollen J, Zhu Y, Leetmaa A, Reynolds B, Chelliah M, Ebisuzaki W, Higgins W, Janowiak J, Mo KC, Ropelewski C, Wang J, Jenne R, Joseph D (1996) The NCEP/NCAR 40-year reanalysis project. Bull Amer Meteorol Soc 77(3):437–471

Kattsov V, Källén E (2005) Future changes of climate: modelling and scenarios for the Arctic region, in Arctic Climate Impact Assessment (ACIA). Cambridge University Press, Cambridge, 1042 pp

Kattsov VM, Walsh JE (2000) Twentieth-century trends of Arctic precipitation from observational data and a climate model simulation. J Climate 13(8):1362–1370

Kattsov VM, Walsh JE (2002) Reply to Comments on “Twentieth-century trends of Arctic precipitation from observational data and a climate model simulation” by H. Paeth, A. Hense, and R. Hagenbrock. J Climate 15:804–805. IPCC, 2001

Kattsov VM, Walsh JE, Chapman WL, Govorkova VA, Pavlova TV, Zhang X (2006) Simulation and projection of Arctic freshwater budget components by the IPCC AR4 Global Climate Models. J Hydrometeorol (in press)

Key J (2001) The cloud and surface parameter retrieval (CASPR) system for Polar AVHRR Data User’s Guide. Space Science and Engineering Center, University of Wisconsin, Madison, 62 pp

Key J, Schweiger AJ (1998) Tools for atmospheric radiative transfer: Streamer and FluxNet. Comput Geosci 24(5):443–451

Key J, Slayback D, Xu C, Schweiger A (1999) New climatologies of polar clouds and radiation based on the ISCCP “D” products. In: Proceedings of the fifth conference on polar meteorology and oceanography, January 10–15. American Meteorological Society, Dallas, pp 227–232

Khrol VP (ed) (1992) Atlas of the energy balance of the Northern Polar Area, Arctic, and Antarctic. Res Inst, St. Petersburg, Russia, p. 72

Lindsay RW (1998) Temporal variability of the energy balance of thick Arctic pack ice. J Climate 11:313–331

Liu J, Curry JA, Rossow WB, Key JR, Wang X (2005) Comparison of surface radiative flux data sets over the Arctic Ocean. J Geophys Res 110:C02015. doi:10.1029/2004JC002381

Mashunova MS (1961) Principal characteristics of the radiation balance of the underlying surface and the atmosphere in the Arctic. Trudy ANII 226:109–112 (in Russian)

Mashunova MS, Chernigovskii NT (1971) Radiation regime of the foreign Arctic. Gidrometeoizdat, Leningrad, 182 pp (in Russian)

Maslanik JA, Key J, Fowler CW, Nguyen T (2001) Spatial and temporal variability of satellite derived cloud and surface characteristics during FIRE-ACE. J Geophys Res 106(D14):15233–15249

Maslanik J, Fowler C, Key J, Scambos T, Hutchinson T, Emery W (1998) AVHRR-based Polar Pathfinder products for modeling applications. Ann Glaciol 25:388–392

McBean G, Alekseev G, Chen D, Førland E, Fyfe J, Groisman PY, King R, Melling H, Vose R, Whitfield PH (2005) Arctic Climate: Past and Present. Arctic Climate Impact Assessment: Scientific Report. Cambridge University Press, UK, 1042 pp

McKay DC, Morris RJ (1985) Solar radiation data analysis for Canada 1967–1976. The Yukon and Northwest territories, vol 6. Minister of Supply and Services, Canada, Ottawa

Meier WN, Maslanik JA, Key JR, Fowler CWW (1997) Multiparameter AVHRR-derived products for Arctic climate studies. Earth interactions, vol 1

Miller JR, Russell GL (2002) Projected impact of climate change on the energy budget of the Arctic Ocean by a global climate model. J Climate 15(21):3028–3042

Nakicenovic et al (2000) IPCC special report on emission scenarios. Cambridge University Press, Cambridge, 599 pp

Overland JE, Spillane MC, Percival DB, Wang M, Mofjeld HO (2004) Seasonal and regional variation of Pan-Arctic air temperature over the instrumental record. J Climate 17:3263–3282

Pinker RT, Laszlo I (1992) Modeling surface solar irradiance for satellite solar irradiance applications on a global scale. J Appl Meteor 31:194–211

Polyakov I, Bekryaev RV, Alekseev GV, Bhatt US, Colony RL, Johnson MA, Makshtas AP, Walsh D (2003) Variability and trends of air temperature and pressure in the maritime Arctic, 1875–2000. J Climate 16(12):2067–2077

Przybylak R (2003) The climate of the Arctic. Atm. and Ocean. Science Library, vol. 26. Kluwer Academic Publishers, 270 pp

Randall D, Curry J, Battisti D, Flato G, Grumbine R, Hakkinen S, Martinson D, Preller R, Walsh J, Weatherly J (1998) Status of and outlook for large-scale modeling of atmosphere–ice–ocean interactions in the Arctic. Bull Amer Meteorol Soc 79:197–219

Romanovsky V, Burgess M, Smith S, Yoshikawa K, Brown J (2002) Permafrost temperature records: indicators of climate change. EOS, AGU Trans 83(50):589–594

Rossow WB, Schiffer RA (1991) ISCCP cloud data products. Bull Amer Meteorol Soc 72:2–20

Serreze MC, Coauthors (2000) Observational evidence for recent changes in the northern high-latitude environment. Climatic Change 46:159–207

Serreze MC, Hurst CM (2002) Representation of mean Arctic precipitation from NCEP-NCAR and ERA reanalyses. J Climate 13:182–201

Serreze MC, Key JR, Box JE, Maslanik JA, Steffen K (1998) A monthly climatology for global radiation for the Arctic and comparisons with NCEP-NCAR reanalysis and ISCCP-C2 fields. J Climate 11:121–136

Simmons AJ, Gibson JK (2000) The ERA-40 Project Plan. ERA-40 Project Report Series No. 1 ECMWF. Shinfield Park, Reading, 63 pp. http://www.ecmwf.int/research/era/Products/

Sorteberg A, Furevik T, Drange H, Kvamstø NG (2005) Effects of simulated natural variability on Arctic temperature projections. Geophys Res Lett 32:L18708. doi:10.1029/2005GL023404

Sorteberg A, Kvamstø NG (2006) The effect of internal variability on anthropogenic climate projections. Tellus 58(5):565–574

Stamnes K, Ellingson RG, Curry JA, Walsh JE, Zak BD (1999) Review of science issues, deployment strategy, and status for the ARM North Slope of Alaska-Adjacent Arctic Ocean climate research site. J Climate 12(1):46–63

Vavrus S (2003) The impact of cloud feedbacks on Arctic climate under greenhouse forcing. J Climate 17(3):603–615

Uppala SM, Kållberg PW, Simmons AJ, Andrae U, da Costa Bechtold V, Fiorino M, Gibson JK, Haseler J, Hernandez A, Kelly GA, Li X, Onogi K, Saarinen S, Sokka N, Allan RP, Andersson E, Arpe K, Balmaseda MA, Beljaars ACM, van de Berg L, Bidlot J, Bormann N, Caires S, Chevallier F, Dethof A, Dragosavac M, Fisher M, Fuentes M, Hagemann S, Hólm E, Hoskins BJ, Isaksen L, Janssen PAEM, Jenne R, McNally AP, Mahfouf J-F, Morcrette J-J, Rayner NA, Saunders RW, Simon P, Sterl A, Trenberth KE, Untch A, Vasiljevic D, Viterbo P, Woollen J (2005) The ERA-40 re-analysis. Quart J R Meteorol Soc 131:2961–3012. doi:10.1256/qj.04.176

Vinnikov KY, Coauthors (1999) Global warming and Northern Hemisphere sea ice extent. Science 286:1934–1937

Vowinckel E, Orvig S (1964) Radiation balance of the troposphere and of the earth-atmosphere system in the Arctic. Arch Meteorol Geophys Bioklim 13:480–502

Vowinckel E, Orvig S (1970) The climate of the North Polar Basin. In: Orvig S (ed) Climates of the Polar regions. World Survey of Climatology, vol 14. Elsevier, Amsterdam, pp 129–252

Walsh JE, Kattsov V, Chapman W, Govorkova V, Pavlova T (2002) Comparison of Arctic climate simulations by uncoupled and coupled global models. J Climate 15:1429–1446

Wang X, Key JR (2003) Recent trends in Arctic surface, cloud and radiation properties from space. Science 299:1725–1728

Wang M, Overland JE, Kattsov V, Walsh JE, Zhang X, Pavlova T (2006) Intrinsic versus forced variation in coupled climate model simulations over the Arctic during the twentieth century. J Climate (in press)

Zhang X, Walsh JE (2006) Toward a seasonally ice-covered Arctic Ocean: scenarios from the IPCC AR4 model simulations. J Climate 19:1730–1747

Zhang T, Stamnes K, Bowling SA (1996) Impact of clouds on surface radiative fluxes and snowmelt in the Arctic. J Climate 9(9):2110–2123

Zhang T, Bowling SA, Stamnes K (1997) Impact of the atmosphere on surface radiative fluxes and snowmelt in the Arctic and Subarctic. J Geophys Res 102(D4):4287–430

Acknowledgments

This work was supported by the Norwegian Research Council’s NORKLIMA program through the RegClim project and the BCCR SSF grant, by the U.S. National Science Foundation’s Office of Polar Programs through Grant OPP-0327664, and by the Russian Foundation for Basic Research through Grant 05-05-65093. We acknowledge the international modeling groups for providing their data for analysis, the Program for Climate Model Diagnosis and Intercomparison (PCMDI) for collecting and archiving the IPCC model data, the JSC/CLIVAR Working Group on Coupled Modelling (WGCM) and their Coupled Model Intercomparison Project (CMIP) and Climate Simulation Panel for organizing the model data analysis activity, and the IPCC WG1 TSU for technical support. The IPCC Data Archive at Lawrence Livermore National Laboratory is supported by the Office of Science, US Department of Energy. This is publication No. A150 from the Bjerknes Centre for Climate Research.

Cite this article

Sorteberg, A., Kattsov, V., Walsh, J.E. et al. The Arctic surface energy budget as simulated with the IPCC AR4 AOGCMs. Clim Dyn 29, 131–156 (2007). https://doi.org/10.1007/s00382-006-0222-9

DOI: https://doi.org/10.1007/s00382-006-0222-9