Abstract

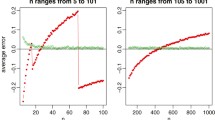

Logistic regression is a widely used method for modeling categorical response data, and maximum likelihood (ML) is the most common method for estimating its regression coefficients. Multicollinearity, however, seriously affects the ML estimator. To remedy the undesirable effects of multicollinearity, alternatives to the ML estimator have been proposed. Drawing on the similarities between multiple linear regression and logistic regression, ridge, Liu and two parameter estimators based on the ML estimator have been proposed. In addition, a first-order approximated ridge estimator has been proposed for use in logistic regression. This study presents further alternative estimators that reduce the effect of collinearity: first-order approximated Liu, iterative Liu and iterative two parameter estimators. A simulation study and a real-life application are carried out to assess the effect of sample size and degree of multicollinearity, comparing the ML based, first-order approximated and iterative biased estimators. Graphical representations illustrate the effect of the shrinkage parameter on the mean square error and prediction mean square error of the biased estimators.


Notes

We use the notations \(W_{ML}\) and \(\hat{\pi }_{i}^{ML}\) to denote the estimates at the \((m-1)\)th iteration.

Hereafter, we refer to both the ridge parameter k and the shrinkage parameter d as shrinkage parameters.

The behavior of the ML based two parameter estimator against d at fixed \(\hat{k}_{1}\) is the same, with one difference: the superiority of one estimator over the others changes with the shrinkage parameter. To avoid cluttering the figure, we omit it.

References

Aguilera AM, Escabias M, Valderrama MJ (2006) Using principal components for estimating logistic regression with high-dimensional multicollinear data. Comput Stat Data Anal 50:1905–1924

Asar Y, Genç A (2015) New shrinkage parameters for the Liu-type logistic estimators. Commun Stat Simul Comput. doi:10.1080/03610918.2014.995815

Duffy DE, Santner TJ (1989) On the small sample properties of norm-restricted maximum likelihood estimators for logistic regression models. Commun Stat Theory Methods 18(3):959–980

Hoerl AE, Kennard RW (1970) Ridge regression: biased estimation for nonorthogonal problems. Technometrics 12:55–67

Hoerl AE, Kennard RW, Baldwin KF (1975) Ridge regression: some simulations. Commun Stat 4:105–123

Huang J (2012) A simulation based research on a biased estimator in logistic regression model. In: Computational intelligence and intelligent systems. Springer, Berlin, p 389–395

Kibria BMG (2003) Performance of some new ridge regression estimators. Commun Stat Simul Comput 32(2):419–435

Kibria BMG, Månsson K, Shukur G (2012) Performance of some logistic ridge regression estimators. Comput Econ 40:401–414

Lawless JF, Wang P (1976) A simulation study of ridge and other regression estimators. Commun Stat A5:307–323

LeCessie S, VanHouwelingen JC (1992) Ridge estimators in logistic regression. Appl Stat 41(1):191–201

Lee AH, Silvapulle MJ (1988) Ridge estimation in logistic regression. Commun Stat Simul Comput 17(4):1231–1257

Liu K (1993) A new class of biased estimate in linear regression. Commun Stat Theory Methods 22(2):393–402

Månsson K, Shukur G (2011) On ridge parameters in logistic regression. Commun Stat Theory Methods 40:3366–3381

Månsson K, Kibria BMG, Shukur G (2012) On Liu estimators for the logit regression model. Econ Model 29:1483–1488

Marx BD, Smith EP (1990) Principal component estimation for generalized linear regression. Biometrika 77(1):23–31

McDonald GC, Galarneau DI (1975) A Monte Carlo evaluation of some ridge-type estimators. J Am Stat Assoc 70(350):407–416

Muniz G, Kibria BMG, Månsson K, Shukur G (2012) On developing ridge regression parameters: a graphical investigation. SORT 36(2):115–138

Özkale MR, Kaçıranlar S (2007) The restricted and unrestricted two parameter estimators. Commun Stat Theory Methods 36(15):2707–2725

Pena WEL, De Massaguer PR, Zuniga ADG, Saraiva SH (2011) Modeling the growth limit of Alicyclobacillus acidoterrestris CRA7152 in apple juice: effect of pH, Brix, temperature and nisin concentration. J Food Process Preserv 35:509–517

Pregibon D (1981) Logistic regression diagnostics. Ann Stat 9(4):705–724

Schaefer RL (1986) Alternative estimators in logistic regression when the data are collinear. J Stat Comput Simul 25:75–91

Schaefer RL, Roi LD, Wolfe RA (1984) A ridge logistic estimator. Commun Stat Theory Methods 13(1):99–113

Smith EP, Marx BD (1990) Ill-conditioned information matrices, generalized linear models and estimation of the effects of acid rain. Environmetrics 1(1):57–71

Stein C (1981) Estimation of the mean of a multivariate normal distribution. Ann Stat 9:1135–1151

Urgan NN, Tez M (2008) Liu estimator in logistic regression when the data are collinear. In: The 20th international conference, EURO mini conference “Continuous Optimization and Knowledge-Based Technologies” (EurOPT-2008), p 323–327

Acknowledgments

This work was supported by the Research Fund of Çukurova University under Project Number FED-2015-4563.

Appendices

Appendix 1: Singular value decomposition of \(X^{\prime }\hat{W}X\)

Using SVD, \(X^{\prime }\hat{W}X\) can be expressed as \(X^{\prime }\hat{W}X=T\varLambda T^{\prime }\), where \(\varLambda =diag(\lambda _{j})\) is a \(p\times p\) diagonal matrix of the eigenvalues of \(X^{\prime }\hat{W}X\) (\(\lambda _{1}=\lambda _{\max }\ge \lambda _{2}\ge \cdots \ge \lambda _{p}=\lambda _{\min }\)) and \(T=[t_{1},\ldots ,t_{p}]\) is a \(p\times p\) orthogonal matrix whose columns are the corresponding eigenvectors (see also Marx and Smith 1990; Aguilera et al. 2006). The logit is then expressed as \(X\beta =XTT^{\prime }\beta =Z\alpha \), where \(Z=XT\) and \(\alpha =T^{\prime }\beta \).
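The decomposition in Appendix 1 can be checked numerically. The following is a minimal NumPy sketch, not the author's implementation. It assumes the usual logistic weights \(\hat{W}=diag(\hat{\pi }_{i}(1-\hat{\pi }_{i}))\), which this appendix does not restate. The function name `canonical_form`, the simulated design matrix and the coefficient vector `beta` are hypothetical and serve only as illustration.

```python
import numpy as np

def canonical_form(X, w):
    """Return (lam, T, Z) with X' W X = T diag(lam) T' and Z = X T.

    X : (n, p) design matrix
    w : (n,) diagonal of the weight matrix W-hat
        (assumed here to be pi_hat * (1 - pi_hat))
    """
    XtWX = X.T @ (w[:, None] * X)      # X' W X without forming the n x n matrix W
    lam, T = np.linalg.eigh(XtWX)      # symmetric matrix; eigenvalues come back ascending
    lam, T = lam[::-1], T[:, ::-1]     # reorder so that lam_1 >= ... >= lam_p
    Z = X @ T                          # canonical regressors
    return lam, T, Z

# Hypothetical data: beta stands in for any coefficient vector, not an ML fit.
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))
beta = np.array([0.5, -1.0, 0.25])
pi_hat = 1.0 / (1.0 + np.exp(-X @ beta))
w = pi_hat * (1.0 - pi_hat)

lam, T, Z = canonical_form(X, w)
alpha = T.T @ beta                     # canonical coefficients, alpha = T' beta
```

Because \(X^{\prime }\hat{W}X\) is symmetric and positive semidefinite, its singular value decomposition coincides with its eigendecomposition, which is why the sketch uses `np.linalg.eigh`. With these definitions `Z @ alpha` reproduces `X @ beta` up to rounding.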

Appendix 2: Proof of theorem

The ith element of \(\widehat{\alpha }_{kd}^{ML}=T^{\prime }\hat{\beta } _{kd}^{ML}\) can be written as \(\widehat{\alpha }_{kd,i}^{ML}=\frac{\lambda _{i}+kd}{\lambda _{i}+k}\widehat{\alpha }_{ML,i}\) where \(\widehat{\alpha } _{ML}=T^{\prime }\hat{\beta }_{ML}.\) Then the asymptotic MSE of \(\widehat{ \alpha }_{\tilde{k}\hat{d}_{two},i}^{ML}\) equals

To use the lemma, let

and \(f(\widehat{\alpha }_{ML,i})=\frac{\sum \nolimits _{j=1}^{p}\frac{1}{\lambda _{j}(\lambda _{j}+\tilde{k})}}{\sum \nolimits _{j=1}^{p}\frac{\tilde{k}\widehat{ \alpha }_{ML,j}^{2}}{(\lambda _{j}+\tilde{k})^{2}}}\widehat{\alpha }_{ML,i}.\) On account of \(\widehat{\alpha }_{ML,i}\sim N(\alpha _{i},\,\lambda _{i}^{-1})\) (see Marx and Smith 1990, p. 28), we get

Using A, the expression (9) can be presented as

From Eq. (10), the difference \(\varDelta =sMSE(\widehat{\alpha }_{\tilde{k}\hat{ d}_{two}}^{ML})-sMSE(\widehat{\alpha }_{ML})\) equals

Therefore,

For \(\varDelta \) to be negative, \(H(h_{two})=h_{two}\left[ \sum \nolimits _{j=1}^{p}\frac{-2}{\lambda _{j}(\lambda _{j}+\tilde{k})}+\frac{4}{\lambda _{\min }(\lambda _{\min }+\tilde{k})}+h_{two}\sum \nolimits _{j=1}^{p}\frac{1}{\lambda _{j}(\lambda _{j}+\tilde{k})}\right] \) should be negative. \(H(h_{two})\) is negative for \(0<h_{two}<2\left[ 1-\frac{2}{\sum \nolimits _{j=1}^{p}\frac{\lambda _{\min }(\lambda _{\min }+\tilde{k})}{\lambda _{j}(\lambda _{j}+\tilde{k})}}\right] \) if \(\sum \nolimits _{j=1}^{p}\frac{\lambda _{\min }(\lambda _{\min }+\tilde{k})}{\lambda _{j}(\lambda _{j}+\tilde{k})}>2\), and for \(2\left[ 1-\frac{2}{\sum \nolimits _{j=1}^{p}\frac{\lambda _{\min }(\lambda _{\min }+\tilde{k})}{\lambda _{j}(\lambda _{j}+\tilde{k})}}\right] <h_{two}<0\) if \(\sum \nolimits _{j=1}^{p}\frac{\lambda _{\min }(\lambda _{\min }+\tilde{k})}{\lambda _{j}(\lambda _{j}+\tilde{k})}<2.\) Since we look for \(\hat{d}_{two}\) in the interval (0, 1), the proof is completed.
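The sign condition on \(H(h_{two})\) can be illustrated numerically. The sketch below is an illustration under stated assumptions, not part of the original proof. It evaluates the componentwise shrinkage form \(\frac{\lambda _{i}+kd}{\lambda _{i}+k}\widehat{\alpha }_{ML,i}\) and the nonzero root of \(H\) as written above. The function names, the eigenvalues in `lam` and the value of `k` are hypothetical.

```python
import numpy as np

def two_param_shrink(alpha_ml, lam, k, d):
    """Componentwise form from Appendix 2: (lam_i + k*d) / (lam_i + k) * alpha_ml_i."""
    return (lam + k * d) / (lam + k) * alpha_ml

def H(h, lam, k):
    """H(h) as written in the proof; Delta is negative when H(h) is negative."""
    lam_min = lam.min()
    s = np.sum(1.0 / (lam * (lam + k)))
    return h * (-2.0 * s + 4.0 / (lam_min * (lam_min + k)) + h * s)

def h_bound(lam, k):
    """Return (bound, S): bound = 2 * (1 - 2 / S) is the nonzero root of H,
    with S = sum_j lam_min (lam_min + k) / (lam_j (lam_j + k))."""
    lam_min = lam.min()
    S = np.sum(lam_min * (lam_min + k) / (lam * (lam + k)))
    return 2.0 * (1.0 - 2.0 / S), S

# Hypothetical eigenvalues and ridge parameter, chosen so that S > 2.
lam = np.array([4.0, 2.0, 1.0, 1.0, 1.0])
k = 0.5
bound, S = h_bound(lam, k)

inside = H(0.5 * bound, lam, k)    # a point inside (0, bound)
outside = H(1.1 * bound, lam, k)   # a point just beyond the bound
```

For these values `S` exceeds 2, so the bound is positive, `inside` is negative and `outside` is positive, in line with the first case of the proof. Setting `d = 1` in `two_param_shrink` returns the ML values unchanged, and `d = 0` gives the ridge-type factor \(\lambda _{i}/(\lambda _{i}+k)\).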

Cite this article

Özkale, M.R. Iterative algorithms of biased estimation methods in binary logistic regression. Stat Papers 57, 991–1016 (2016). https://doi.org/10.1007/s00362-016-0780-9

DOI: https://doi.org/10.1007/s00362-016-0780-9

Keywords

- Iteratively reweighted least squares

- Binary logistic regression

- Ridge estimator

- Liu estimator

- Mean square error