Abstract

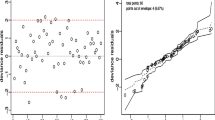

This paper considers nonparametric density estimation under multiplicative censoring. A new estimator of the density function is proposed and its consistency is investigated. A simulation study illustrates the results and shows how the estimator behaves in finite samples.


References

Abbaszadeh M, Chesneau C, Doosti H (2012) Nonparametric estimation of density under bias and multiplicative censoring via wavelet methods. Stat Probab Lett 82:932–941

Abbaszadeh M, Chesneau C, Doosti H (2013) Multiplicative censoring: estimation of a density and its derivatives under the \(L_p\)-risk. REVSTAT Stat J 11(3):255–276

Andersen KE, Hansen MB (2001) Multiplicative censoring: density estimation by a series expansion approach. J Stat Plan Inference 98:137–155

Asgharian M, Carone M, Fakoor V (2012) Large-sample study of the kernel density estimators under multiplicative censoring. Ann Stat 40(1):159–187

Csörgő S (1985) Rates of uniform convergence for the empirical characteristic function. Acta Sci Math 48:97–102

Parzen E (1962) On estimation of a probability density function and mode. Ann Math Stat 33:1065–1076

Rosenblatt M (1956) Remarks on some nonparametric estimates of a density function. Ann Math Stat 27:832–837

Stute W (1982) A law of the logarithm for kernel density estimators. Ann Probab 10(2):414–422

Stefanski LA, Carroll RJ (1990) Deconvoluting kernel density estimators. Statistics 21:169–184

Silverman BW (1986) Density estimation for statistics and data analysis. Chapman and Hall/CRC, London

Vardi Y (1989) Multiplicative censoring, renewal process, deconvolution and decreasing density: nonparametric estimation. Biometrika 76(4):751–761

Vardi Y, Zhang C-H (1992) Large sample study of empirical distributions in a random-multiplicative censoring model. Ann Stat 20:1022–1039

Wied D, Weißbach R (2010) Consistency of the kernel density estimator—a survey. Stat Pap 53(1):1–21

Appendices

Appendix A: Proofs of the main results

Proof of Theorem 1

Under \({\mathbf {K(1)}}\) and \({\mathbf {H(2)}},\) Lemma 7.1 yields

Let

be the ordinary kernel density estimator of \(q\) defined by (2.1) and based on the sample \((T_1,\ldots ,T_n),\) where \(T_i=-\ln Z_i.\) An interchange of expectation and integration, justified by Fubini’s theorem and \({\mathbf {K(3)}},\) shows that

Then it follows from (6.2) that

Thus \(q_{n}\) has the same bias as the ordinary kernel density estimator \(q^{*}_{n}\). Assumptions \({\mathbf {K(1)}}\) and \({\mathbf {H(1)}},\) together with Lemma 7.1, imply

hence

Therefore from (6.1), (6.3) and Assumption \({\mathbf {P}}\), we have

Assumption \({\mathbf {H(2)}}\) and Lemma 7.2 imply that

By the proof of Theorem 2.1 of Stefanski and Carroll (1990), together with \({\mathbf {K(2)}}\)–\({\mathbf {K(4)}}\) and \({\mathbf {H(1)}},\) we have

It can be easily seen that

so, combining (6.5) and (6.6), we have

Finally, (6.4) and (6.8) complete the proof. \(\square \)
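
The argument above is carried out on the log scale, with \(T_i=-\ln Z_i\). As a rough illustration only, the following Python sketch assumes the usual multiplicative censoring model \(Z=UY\) with \(U\) uniform on \((0,1)\) and independent of \(Y\) (the model is not restated in this appendix, so this is an assumption). It checks numerically that \(-\ln U\) behaves like a standard exponential variable, so that \(T\) is a sum of two independent terms and its density is a convolution. The function name, the gamma distribution chosen for \(Y\) and the sample size are hypothetical.

```python
import numpy as np

def simulate_log_censored(n, rng):
    """Draw n multiplicatively censored observations and their log transforms.

    Assumed model (not taken from the text above): Z = U * Y, with
    U ~ Uniform(0, 1) independent of Y. Y ~ Gamma(3, 1) is an arbitrary choice.
    """
    y = rng.gamma(shape=3.0, scale=1.0, size=n)   # unobserved lifetimes
    u = 1.0 - rng.uniform(size=n)                 # lies in (0, 1], so log(u) is finite
    z = u * y                                     # observed censored values
    t = -np.log(z)                                # T_i = -ln Z_i
    return y, u, z, t

rng = np.random.default_rng(0)
y, u, z, t = simulate_log_censored(50_000, rng)

# -ln U should have mean and variance close to 1 (standard exponential).
e = -np.log(u)
print("mean, var of -ln U:", e.mean(), e.var())

# T = (-ln Y) + (-ln U): the means add, and by independence so do the variances.
x = -np.log(y)
print("mean of T vs sum of means:", t.mean(), x.mean() + e.mean())
print("var of T vs sum of variances:", t.var(), x.var() + e.var())
```

Under this assumed model, estimating the density of \(T\) by an ordinary kernel estimator and then undoing the exponential component is a deconvolution problem, which is consistent with the appeal to Stefanski and Carroll (1990) in the proof.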

Proof of Theorem 2

It is easy to see that

From \({\mathbf {G(1)}}\), \({\mathbf {G(2)}}\), \({\mathbf {H(3)}}\), \({\mathbf {K(1)}}\) and Lemma 7.3 we have

For \(I_{2}\), we first have

Let \(W_i\) have density function \(f^*\) with characteristic function \(\Psi _{f^{*}}.\) Since \(\Psi _{K_{2}}\) vanishes outside \([-1,1]\), we have

where

hence

where

Lemma 7.4 implies that

hence using Assumption \({\mathbf {K(3)}}\) we have

Using \({\mathbf {G(1)}}\) and \({\mathbf {K(1)}}\),

Now (6.11), (6.13) and (6.14) yield

(6.9), (6.10), and (6.15) complete the proof. \(\square \)

Appendix B

In this section, we consider the kernel density estimator \(f_n\) of a univariate density \(f\) introduced by Rosenblatt (1956),

where \(\xi _{1},\xi _{2},\ldots ,\xi _{n}\) are independent observations from a distribution \(F\) with density function \(f\). Here \(K,\) \(a_n\) and \(F_n\) denote the kernel function, the bandwidth and the empirical distribution function, respectively. The characteristic function of \(f\) and its empirical counterpart are denoted by

and

Lemma 7.1

(Corollary 1A, Parzen (1962)). The estimator defined by (7.1) is asymptotically unbiased at all points \(x\) at which the probability density function is continuous if the constants \(a_{n}\) satisfy \(\lim _{n \longrightarrow \infty }a_{n}=0\) and if the function \(K(\cdot )\) satisfies

and

\(\square \)

Lemma 7.2

(Theorem 2A, Parzen (1962)) The estimates defined by (7.1) have variances satisfying

at all points \(x\) of continuity of \(f(x),\) if \(\lim _{n \longrightarrow \infty } a_{n}=0\) and the function \(K\) satisfies (7.2) and (7.3). \(\square \)
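
Lemmas 7.1 and 7.2 can be illustrated numerically. The sketch below assumes the standard Rosenblatt–Parzen form \(f_n(x)=(na_n)^{-1}\sum _{i=1}^{n}K((x-\xi _i)/a_n)\) for the estimator, a Gaussian kernel (which satisfies the usual boundedness and integrability conditions of Parzen (1962)), standard normal data, and a hypothetical bandwidth \(a_n=n^{-1/2}\). It compares the Monte Carlo mean of \(f_n(0)\) with \(f(0)\), and the scaled variance \(na_n\mathrm {Var}[f_n(0)]\) with \(f(0)\int K^2(u)\,du\), the limit in the classical statement of Parzen's variance theorem. All function names are illustrative.

```python
import numpy as np

def gaussian_kernel(u):
    """Standard normal density, used here as the kernel K."""
    return np.exp(-0.5 * u**2) / np.sqrt(2.0 * np.pi)

def kernel_density(x, sample, a_n, kernel=gaussian_kernel):
    """Evaluate f_n(x) = (1 / (n a_n)) * sum_i K((x - xi_i) / a_n) at points x."""
    x = np.atleast_1d(np.asarray(x, dtype=float))
    sample = np.asarray(sample, dtype=float)
    u = (x[:, None] - sample[None, :]) / a_n      # shape (len(x), n)
    return kernel(u).sum(axis=1) / (sample.size * a_n)

def replicate_at_zero(n, a_n, reps, rng):
    """Values of f_n(0) over `reps` independent standard normal samples of size n."""
    return np.array(
        [kernel_density(0.0, rng.standard_normal(n), a_n)[0] for _ in range(reps)]
    )

rng = np.random.default_rng(1)
n, reps = 4_000, 400
a_n = n ** -0.5                                   # hypothetical: a_n -> 0 and n a_n -> infinity
vals = replicate_at_zero(n, a_n, reps, rng)

f0 = 1.0 / np.sqrt(2.0 * np.pi)                   # true density at 0
k_sq = 1.0 / (2.0 * np.sqrt(np.pi))               # integral of K^2 for the Gaussian kernel

print("mean of f_n(0):", vals.mean(), " f(0):", f0)
print("n a_n Var[f_n(0)]:", n * a_n * vals.var(), " f(0) * int K^2:", f0 * k_sq)
```

The bandwidth is deliberately small here so that the second-order term in the variance is negligible; with a slower rate such as \(n^{-1/5}\) the scaled variance approaches its limit more slowly at this sample size.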

Lemma 7.3

(Theorem 1.2, Stute (1982)) Let \(K\) be of bounded variation and suppose that \(K(x)=0\) outside some finite interval \([r,s)\). If

with \(J=(a, b),\) then for each \(\epsilon >0\) and every bandsequence \((a_{n})\), where \(\lim _{n\rightarrow \infty }a_{n}=0\) and \(\lim _{n \rightarrow \infty }\frac{\ln ({a^{-1}_{n}})}{na_{n}}=0,\) we have

where \(J_{\epsilon }=(a+\epsilon , b-\epsilon )\) and \(C\) is a constant. \(\square \)
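
The two bandwidth conditions in Lemma 7.3 are easy to check for a given sequence. As a small sketch, assuming a hypothetical polynomial bandwidth \(a_n=n^{-\beta }\), the ratio \(\ln (a_n^{-1})/(na_n)\) equals \(\beta \ln n/n^{1-\beta }\), which tends to zero for \(0<\beta <1\) but not for \(\beta =1\). The function names below are illustrative.

```python
import math

def stute_ratio(n, a_n):
    """ln(1 / a_n) / (n * a_n), which Lemma 7.3 requires to tend to 0."""
    return math.log(1.0 / a_n) / (n * a_n)

def bandwidth(n, beta=0.2):
    """Hypothetical polynomial bandwidth a_n = n^(-beta)."""
    return n ** (-beta)

for n in (10**2, 10**4, 10**6):
    a_n = bandwidth(n)
    print(n, a_n, stute_ratio(n, a_n))            # ratio shrinks as n grows

# With beta = 1 the ratio equals ln(n) and grows, so the condition fails.
for n in (10**2, 10**4, 10**6):
    print(n, stute_ratio(n, bandwidth(n, beta=1.0)))
```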

Lemma 7.4

(Example (1), Csörgő (1985)) Suppose that \(P\left( |\xi |>x\right) \le Lx^{-\alpha }\) for all large enough \(x,\) where \(L\) and \(\alpha \) are arbitrary positive constants. Then for any \(A>0\)

where \(C\) is a positive constant and

for any extended number \(0<T\le \infty \). \(\square \)
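
Lemma 7.4 concerns the uniform distance between a characteristic function and its empirical counterpart. The following sketch, which assumes standard normal data (whose tails satisfy the polynomial bound for every \(\alpha \)) and evaluates the supremum only over a finite grid on \([-T,T]\), shows the kind of deviation the lemma controls. The function names, the grid and the choice \(T=5\) are hypothetical.

```python
import numpy as np

def empirical_cf(t, sample):
    """Empirical characteristic function (1/n) * sum_j exp(i t xi_j) at points t."""
    t = np.atleast_1d(np.asarray(t, dtype=float))
    sample = np.asarray(sample, dtype=float)
    return np.exp(1j * t[:, None] * sample[None, :]).mean(axis=1)

def normal_cf(t):
    """Characteristic function of the standard normal distribution."""
    return np.exp(-0.5 * np.asarray(t, dtype=float) ** 2)

def sup_deviation(sample, true_cf, t_max, num=101):
    """Largest |empirical cf - true cf| over an evenly spaced grid on [-t_max, t_max]."""
    grid = np.linspace(-t_max, t_max, num)
    return np.max(np.abs(empirical_cf(grid, sample) - true_cf(grid)))

rng = np.random.default_rng(2)
for n in (200, 2_000, 20_000):
    xi = rng.standard_normal(n)
    print(n, sup_deviation(xi, normal_cf, t_max=5.0))
```

The grid maximum is only a lower bound for the true supremum over \([-T,T]\); a finer grid tightens it at the cost of memory, since the sketch forms a full grid-by-sample array.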


Cite this article

Zamini, R., Fakoor, V. & Sarmad, M. On estimation of a density function in multiplicative censoring. Stat Papers 56, 661–676 (2015). https://doi.org/10.1007/s00362-014-0602-x
