Abstract

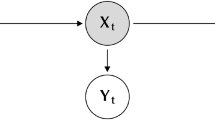

The parameters of a hidden Markov model (HMM) can be estimated by numerical maximization of the log-likelihood function or, more popularly, by the expectation–maximization (EM) algorithm. In its standard implementation, however, the EM algorithm is unsuitable for fitting stationary HMMs; we show how it can be modified to achieve this. We also propose a hybrid algorithm designed to combine the advantageous features of the two algorithms, and we compare the performance of the three algorithms using simulated data from a designed experiment and a real data set. The properties investigated are speed of convergence, stability, dependence on initial values, and the effect of different parameterizations. Finally, we describe the results of an experiment to assess the true coverage probability of bootstrap-based confidence intervals for the parameters.
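As a minimal sketch of the direct-maximization route, the Python below computes the negative log-likelihood of a stationary HMM with a scaled forward pass. It assumes a Poisson HMM with m states (the abstract does not fix the state-dependent distribution), and it ties the initial distribution δ to the transition matrix Γ by solving δ(I − Γ + U) = 1, where U is the all-ones matrix, instead of treating δ as a separate free parameter. The unconstrained working parameterization (log-means, row-wise log-odds for Γ) and the helper names `stationary_distribution`, `natural_params` and `neg_log_likelihood` are illustrative assumptions, not the authors' implementation.

```python
import math
import numpy as np


def stationary_distribution(gamma):
    """Return delta with delta @ gamma = delta and sum(delta) = 1.

    Solves delta (I - Gamma + U) = 1, where U is the all-ones matrix.
    """
    m = gamma.shape[0]
    a = np.eye(m) - gamma + np.ones((m, m))
    return np.linalg.solve(a.T, np.ones(m))


def natural_params(theta, m):
    """Hypothetical helper: map an unconstrained vector to Poisson means and Gamma.

    theta[:m] are log-means; theta[m:] are the m*(m-1) off-diagonal log-odds of
    Gamma, taken row by row, with each diagonal entry as the reference category.
    """
    lambdas = np.exp(theta[:m])
    tau = np.zeros((m, m))
    tau[~np.eye(m, dtype=bool)] = theta[m:]
    gamma = np.exp(tau)
    gamma /= gamma.sum(axis=1, keepdims=True)  # each row sums to one
    return lambdas, gamma


def neg_log_likelihood(theta, x, m):
    """Negative log-likelihood of a stationary m-state Poisson HMM (scaled forward pass)."""
    lambdas, gamma = natural_params(theta, m)
    delta = stationary_distribution(gamma)  # initial distribution tied to Gamma
    x = np.asarray(x)
    log_fact = np.array([math.lgamma(v + 1.0) for v in x])
    # probs[t, i] = P(X_t = x_t | C_t = i) for the Poisson state-dependent pmf
    probs = np.exp(np.outer(x, np.log(lambdas)) - lambdas - log_fact[:, None])
    log_lik = 0.0
    phi = delta * probs[0]
    for t in range(len(x)):
        if t > 0:
            phi = (phi @ gamma) * probs[t]
        scale = phi.sum()
        log_lik += math.log(scale)
        phi = phi / scale  # rescale to avoid numerical underflow
    return -log_lik
```

Because every entry of `theta` is unconstrained, the function can be handed to a general-purpose optimizer, for example `scipy.optimize.minimize(neg_log_likelihood, theta0, args=(x, m), method="Nelder-Mead")`. Since dependence on initial values and on the parameterization are among the properties investigated, both `theta0` and this particular mapping are natural things to vary.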




Cite this article

Bulla, J., Berzel, A. Computational issues in parameter estimation for stationary hidden Markov models. Computational Statistics 23, 1–18 (2008). https://doi.org/10.1007/s00180-007-0063-y

