Abstract

Different senses have different processing times. Here we measured the perceived timing of galvanic vestibular stimulation (GVS) relative to tactile, visual and auditory stimuli. Simple reaction times for perceived head movement (438 ± 49 ms) were significantly longer than those to touches (245 ± 14 ms), lights (220 ± 13 ms), or sounds (197 ± 13 ms). Temporal order and simultaneity judgments both indicated that GVS had to occur about 160 ms before other stimuli to be perceived as simultaneous with them. This lead was significantly smaller than the relative timing predicted from the reaction time differences, which is compatible with an incomplete tendency to compensate for differences in processing times.
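The comparison between the observed lead and the lead predicted from reaction times can be made concrete with a little arithmetic. The sketch below uses only the mean values reported in the abstract; the function and variable names are illustrative assumptions, and the 160 ms figure is the approximate value stated above, so the shortfalls are rough indications rather than the statistics reported in the paper.

```python
# Mean simple reaction times in ms, taken from the abstract.
RT_MS = {"GVS": 438, "touch": 245, "light": 220, "sound": 197}

# Approximate lead GVS needed to be judged simultaneous (from the abstract).
OBSERVED_GVS_LEAD_MS = 160


def predicted_lead(rt_ms, reference="GVS"):
    """Lead (ms) the reference stimulus would need if perceived timing
    simply mirrored the reaction time difference to each other modality.
    Hypothetical helper, not the authors' analysis."""
    return {
        modality: rt_ms[reference] - rt
        for modality, rt in rt_ms.items()
        if modality != reference
    }


leads = predicted_lead(RT_MS)
for modality, lead in leads.items():
    shortfall = lead - OBSERVED_GVS_LEAD_MS
    print(
        f"{modality}: predicted lead {lead} ms, "
        f"observed about {OBSERVED_GVS_LEAD_MS} ms, "
        f"shortfall about {shortfall} ms"
    )
```

Under these assumptions the reaction time differences predict leads of 193 ms (touch), 218 ms (light) and 241 ms (sound), all larger than the roughly 160 ms lead actually observed.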

References

Allan LG (1975) The relationship between judgments of successiveness and judgments of order. Percept Psychophys 18:29–36

Alvarez-Buylla R, de Arellano JR (1952) Local responses in Pacinian corpuscles. Am J Physiol 172:237–244

Angelaki DE, Cullen KE (2008) Vestibular system: the many facets of a multimodal sense. Annu Rev Neurosci 31:125–150

Aw ST, Todd MJ, Halmagyi GM (2006) Latency and initiation of the human vestibuloocular reflex to pulsed galvanic stimulation. J Neurophysiol 96:925–930

Bekesy GV (1963) Interaction of paired sensory stimuli and conduction in peripheral nerves. J Appl Physiol 18:1276–1284

Bense S, Stephan T, Yousry TA, Brandt T, Dieterich M (2001) Multisensory cortical signal increases and decreases during vestibular galvanic stimulation (fMRI). J Neurophysiol 85:886–899

Bergenheim M, Johansson H, Granlund B, Pederson J (1996) Experimental evidence for a synchronization of sensory information to conscious experience. In: Hameroff SR, Kaszniak AW, Scott AC (eds) Toward a science of consciousness: the first Tucson discussions and debates. MIT Press, Cambridge, pp 303–310

Biguer B, Donaldson IML, Hein A, Jeannerod M (1988) Neck muscle vibration modifies the representation of visual motion and direction in man. Brain 111:1405–1424

Brandt T, Dieterich M (1999) The vestibular cortex: its locations, functions, and disorders. Ann N Y Acad Sci 871:293–312

Brantberg K, Magnusson M (1990) Galvanically induced asymmetric optokinetic after-nystagmus. Acta Otolaryngol 110:189–195

Bucher SF, Dieterich M, Wiesmann M, Weiss A, Zink R, Yousry TA, Brandt T (1998) Cerebral functional magnetic resonance imaging of vestibular, auditory, and nociceptive areas during galvanic stimulation. Ann Neurol 44:120–125

Buys E (1909) Beitrag zum Studium des galvanischen Nystagmus mit hilfe der Nystagmographie. Mschr Ohrenheilk 43:801–803

Capelli A, Israel I (2007) One-second interval production task during postrotatory sensation. J Vestib Res 17:239–249

Capelli A, Deborne R, Israël I (2007) Temporal intervals production during passive self-motion in darkness. Curr Psychol Lett 22

Corey DP, Hudspeth AJ (1979) Ionic basis of the receptor potential in a vertebrate hair cell. Nature 281:675–677

Craig JC, Baihua XU (1990) Temporal order and tactile patterns. Percept Psychophys 47:22–34

Day BL, Severac Cauquil A, Bartolomei L, Pastor MA, Lyon IN (1997) Human body-segment tilts induced by galvanic stimulation: a vestibularly driven balance protection mechanism. J Physiol 500:661–672

de Waele C, Baudonniere PM, Lepecq JC, Tran Ba Huy P, Vidal PP (2001) Vestibular projections in the human cortex. Exp Brain Res 141:541–551

Diederich A (1995) Intersensory facilitation of reaction time: evaluation of counter and diffusion coactivation models. J Math Psychol 39:197–215

Engel GR, Dougherty WG (1971) Visual–auditory distance constancy. Nature 234:308

Exner S (1868) Über die zu einer Gesichtswahrnehmung nöthige Zeit. Wiener Sitzungsberichte der Mathematisch-Naturwissenschaftlichen Classe der Kaiserlichen Akademie der Wissenschaften 58:601–632

Fernandez C, Goldberg JM (1971) Physiology of peripheral neurons innervating semicircular canals of the squirrel monkey. II. Response to sinusoidal stimulation and dynamics of peripheral vestibular system. J Neurophysiol 34:661–675

Figliozzi F, Guariglia P, Silvetti M, Siegler I, Doricchi F (2005) Effects of vestibular rotatory accelerations on covert attentional orienting in vision and touch. J Cogn Neurosci 17:1638–1651

Fitzpatrick RC, Day BL (2004) Probing the human vestibular system with galvanic stimulation. J App Physiol 96:2301–2316

Gibbon J, Rutschmann R (1969) Temporal order judgment and reaction time. Science 165:413–415

Goldberg JM, Smith CE, Fernandez C (1984) Relation between discharge regularity and responses to externally applied galvanic currents in vestibular nerve afferents of the squirrel monkey. J Neurophysiol 51:1236–1256

Grant P, Lee PTS (2007) Motion–visual phase-error detection in a flight simulator. J Aircr 44:927–935

Guldin WO, Grüsser OJ (1998) Is there a vestibular cortex? Trends Neurosci 21:254–259

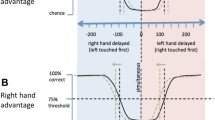

Harrar V, Harris LR (2005) Simultaneity constancy: detecting events with touch and vision. Exp Brain Res 166:465–473

Harrar V, Harris LR (2008) The effect of exposure to asynchronous audio, visual, and tactile stimulus combinations on the perception of simultaneity. Exp Brain Res 186:517–524

Hirsh IJ, Sherrick CE Jr (1961) Perceived order in different sense modalities. J Exp Psychol 62:423–432

Israël I, Capelli A, Sablé D, Laurent C, Lecoq C, Bredin J (2004) Multifactorial interactions involved in linear self-transport distance estimate: a place for time. Int J Psychophysiol 53:21–28

Jaekl PM, Harris LR (2007) Auditory–visual temporal integration measured by shifts in perceived temporal location. Neurosci Lett 417:219–224

Jaskowski P, Jaroszyk F, Hojan-Jezierska D (1990) Temporal-order judgments and reaction time for stimuli of different modalities. Psychol Res 52:35–38

Keetels M, Stekelenburg J, Vroomen J (2007) Auditory grouping occurs prior to intersensory pairing: evidence from temporal ventriloquism. Exp Brain Res 180:449–456

King AJ, Palmer AR (1985) Integration of visual and auditory information in bimodal neurones in the guinea-pig superior colliculus. Exp Brain Res 60:492–500

Kopinska A, Harris LR (2004) Simultaneity constancy. Perception 33:1049–1060

Kuffler SW (1953) Discharge patterns and functional organization of mammalian retina. J Neurophysiol 16:37–68

Lewald J, Karnath HO (2000) Vestibular influence on human auditory space perception. J Neurophysiol 84:1107–1111

Lewald J, Karnath HO (2001) Sound lateralization during passive whole-body rotation. Eur J NeuroSci 13:2268–2272

Lobel E, Kleine JF, Bihan DL, Leroy-Willig A, Berthoz A (1998) Functional MRI of galvanic vestibular stimulation. J Neurophysiol 80:2699–2709

Lorente de No R (1933) Vestibulo-ocular reflex arc. Arch Neurol Psychiat 30:245–291

Lund S, Broberg C (1983) Effects of different head positions on postural sway in man induced by a reproducible vestibular error signal. Acta Physiol Scand 117:307–309

Mitrani L, Shekerdjiiski S, Yakimoff N (1986) Mechanisms and asymmetries in visual perception of simultaneity and temporal order. Biol Cybern 54:159–165

Navarra J, Soto-Faraco S, Spence C (2007) Adaptation to audiotactile asynchrony. Neurosci Lett 413:72–76

Pfaltz CR (1967) Recherches nystagmographiques sur la réaction galvanique vestibulaire. Rev Neurol 117:309–315

Phillips-Silver J, Trainor LJ (2005) Feeling the beat: movement influences infant rhythm perception. Science 308:1430

Phillips-Silver J, Trainor LJ (2007) Hearing what the body feels: auditory encoding of rhythmic movement. Cognition 105:533–546

Pöppel E, Schill K, von Steinbüchel N (1990) Sensory integration within temporally neutral systems states: a hypothesis. Naturwissenschaften 77:89–91

Rains JD (1963) Signal luminance and position effects in human reaction time. Vis Res 61:239–251

Roll R, Velay JL, Roll JP (1991) Eye and neck proprioceptive messages contribute to the spatial coding of retinal input in visually oriented activities. Exp Brain Res 85:423–431

Roufs JAJ (1963) Perception lag as a function of stimulus luminance. Vis Res 3:81–91

Rutschmann J, Link R (1964) Perception of temporal order of stimuli differing in sense mode and simple reaction time. Percept Mot Skills 18:345–352

Schiefer U, Strasburger H, Becker ST, Vonthein R, Schiller J, Dietrich TJ, Hart W (2001) Reaction time in automated kinetic perimetry: effects of stimulus luminance, eccentricity, and movement direction. Vis Res 41:2157–2164

Schneider KA, Bavelier D (2003) Components of visual prior entry. Cogn Psychol 47:333–366

Shore DI, Barnes ME, Spence C (2006) Temporal aspects of the visuotactile congruency effect. Neurosci Lett 392:96–100

Snyder LH, Grieve KL, Brotchie P, Andersen RA (1998) Separate body- and world-referenced representations of visual space in parietal cortex. Nature 394:887–891

Spence C, Shore DI, Klein RM (2001) Multisensory prior entry. J Exp Psychol Gen 130:799–832

Spence C, Baddeley R, Zampini M, James R, Shore DI (2003) Multisensory temporal order judgments: when two locations are better than one. Percept Psychophys 65:318–328

Sugita Y, Suzuki Y (2003) Implicit estimation of sound-arrival time. Nature 421:911

Taylor JL, McCloskey DI (1991) Illusions of head and visual target displacement induced by vibration of neck muscles. Brain 114:755–759

Titchener EB (1908) Lectures on the elementary psychology of feeling and attention. Macmillan, New York

Trainor LJ, Gao X, Lei JJ, Lehtovaara K, Harris LR (2009) The primal role of the vestibular system in determining musical rhythm. Cortex 45:35–43

van Eijk RLJ, Kohlrausch A, Juola JF, van de Par S (2008) Audiovisual synchrony and temporal order judgments: effects of experimental method and stimulus type. Percept Psychophys 70:955–968

Vatakis A, Navarra J, Soto-Faraco S, Spence C (2008) Audiovisual temporal adaptation of speech: temporal order versus simultaneity judgments. Exp Brain Res 185:521–529

Watson SRD, Colebatch JG (1998) Vestibulocollic reflexes evoked by short-duration galvanic stimulation in man. J Physiol 513:587–597

Wilson JA, Anstis SM (1969) Visual delay as a function of luminance. Am J Psychol 82:350–358

Zampini M, Brown T, Shore DI, Maravita A, Röder B, Spence C (2005) Audiotactile temporal order judgments. Acta Psychol 118:277–291

Zeki S (1998) The asynchrony of consciousness. Proc R Soc B Biol Sci 265:1583–1585

Acknowledgments

This work was supported by the Natural Sciences and Engineering Research Council of Canada (NSERC). M. Barnett-Cowan was supported by a PGS-D3 NSERC Scholarship and a Canadian Institutes of Health Research Vision Health Science Training Grant. Our thanks go to Michael Jenkin for technical assistance, to Jeff Sanderson who helped conduct experiments and to David Shore for comments on this project.

Cite this article

Barnett-Cowan, M., Harris, L.R. Perceived timing of vestibular stimulation relative to touch, light and sound. Exp Brain Res 198, 221–231 (2009). https://doi.org/10.1007/s00221-009-1779-4

DOI: https://doi.org/10.1007/s00221-009-1779-4