Abstract

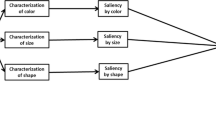

Real-time interaction in virtual environments composed of numerous objects modeled with a high number of faces remains an important issue in interactive virtual environment applications. A well-established approach to this problem is to simplify small or distant objects, whose minor details are not informative for users. Several approaches exist in the literature to simplify a 3D mesh uniformly. A possible improvement is to use a visual attention model to distinguish the regions of a model that the human visual system considers important. These regions can then be preserved during simplification to improve the perceived quality of the model. This article presents an original application of biologically inspired visual attention for improved perception-based representation of 3D objects. To identify such salient regions, an enhanced visual attention model is introduced. It extracts information about color, intensity, and orientation, as in the classical bottom-up visual attention model, but also considers supplementary features believed to guide the deployment of human visual attention (such as symmetry, curvature, contrast, entropy, and edge information). Unlike the classical model, in which these features contribute equally to the identification of salient regions, a novel solution is proposed to adjust their contribution to the visual attention model based on their compliance with points identified as salient by human subjects. An iterative approach is then proposed to extract salient points from salient regions. Salient points derived from images taken from the best viewpoints of a 3D object are then projected onto the surface of the object to identify salient vertices, which are preserved during mesh simplification. The results are compared with existing solutions from the literature to demonstrate the superiority of the proposed approach.
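The channel weighting and point extraction summarized above can be illustrated with a minimal sketch. It is not the authors' implementation: the abstract does not give the weighting formula or the iterative procedure, so the sketch assumes that a channel's weight is its mean normalized response at the human-marked pixels, and that salient points are picked greedily with a circular suppression radius. All function names, the `radius` parameter, and the toy feature maps are hypothetical.

```python
import numpy as np


def normalize(m):
    """Rescale a map to [0, 1]; a constant map becomes all zeros."""
    m = np.asarray(m, dtype=float)
    span = m.max() - m.min()
    return (m - m.min()) / span if span > 0 else np.zeros_like(m)


def channel_weights(feature_maps, human_points):
    """Assumed rule: weight = mean normalized response at human-marked pixels."""
    pts = np.asarray(human_points)
    rows, cols = pts[:, 0], pts[:, 1]
    scores = np.array(
        [normalize(m)[rows, cols].mean() for m in feature_maps.values()]
    )
    total = scores.sum()
    if total == 0:
        # No channel agrees with the human points: fall back to equal weights,
        # which corresponds to the classical equal-contribution combination.
        weights = np.full(len(scores), 1.0 / len(scores))
    else:
        weights = scores / total
    return dict(zip(feature_maps, weights))


def combine(feature_maps, weights):
    """Weighted sum of normalized channel maps, rescaled to [0, 1]."""
    total = sum(weights[name] * normalize(m) for name, m in feature_maps.items())
    return normalize(total)


def extract_salient_points(saliency, count, radius):
    """Assumed iteration: take the global maximum, suppress a disc, repeat."""
    s = np.array(saliency, dtype=float)
    yy, xx = np.indices(s.shape)
    points = []
    for _ in range(count):
        r, c = np.unravel_index(np.argmax(s), s.shape)
        if s[r, c] <= 0:
            break  # nothing salient is left
        points.append((int(r), int(c)))
        s[(yy - r) ** 2 + (xx - c) ** 2 <= radius ** 2] = 0.0
    return points


# Toy example: two 5x5 channels, one human-marked point at pixel (1, 1).
intensity = np.zeros((5, 5))
intensity[1, 1] = 2.0
symmetry = np.zeros((5, 5))
symmetry[3, 3] = 4.0
maps = {"intensity": intensity, "symmetry": symmetry}

weights = channel_weights(maps, [(1, 1)])
saliency = combine(maps, weights)
points = extract_salient_points(saliency, count=3, radius=1)
```

In this toy case only the intensity channel responds at the human-marked pixel, so it receives the full weight and the symmetry peak is left out of the combined map. Projecting the extracted image points back onto the mesh surface is a separate step and is not sketched here.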

Funding

This work is supported in part by the Natural Sciences and Engineering Research Council of Canada (NSERC).

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

About this article

Cite this article

Rouhafzay, G., Cretu, AM. Perceptually Improved 3D Object Representation Based on Guided Adaptive Weighting of Feature Channels of a Visual-Attention Model. 3D Res 9, 29 (2018). https://doi.org/10.1007/s13319-018-0181-z

DOI: https://doi.org/10.1007/s13319-018-0181-z