Abstract

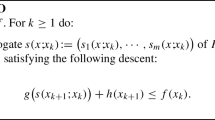

This paper is concerned with convex composite minimization problems in a Hilbert space. In these problems, the objective is the sum of two closed, proper, and convex functions where one is smooth and the other admits a computationally inexpensive proximal operator. We analyze a family of generalized inertial proximal splitting algorithms (GIPSA) for solving such problems. We establish weak convergence of the generated sequence when the minimum is attained. Our analysis unifies and extends several previous results. We then focus on \(\ell _1\)-regularized optimization, which is the ubiquitous special case where the nonsmooth term is the \(\ell _1\)-norm. For certain parameter choices, GIPSA is amenable to a local analysis for this problem. For these choices we show that GIPSA achieves finite “active manifold identification”, i.e. convergence in a finite number of iterations to the optimal support and sign, after which GIPSA reduces to minimizing a local smooth function. We prove local linear convergence under either restricted strong convexity or a strict complementarity condition. We determine the rate in terms of the inertia, stepsize, and local curvature. Our local analysis is applicable to certain recent variants of the Fast Iterative Shrinkage–Thresholding Algorithm (FISTA), for which we establish active manifold identification and local linear convergence. Based on our analysis we propose a momentum restart scheme in these FISTA variants to obtain the optimal local linear convergence rate while maintaining desirable global properties.
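As a rough illustration of the type of iteration discussed above, the following Python sketch runs a generic inertial forward-backward step on an \(\ell _1\)-regularized least-squares problem. It is not the GIPSA parameterization itself, which the abstract does not spell out. The constant inertia `alpha`, the stepsize `1/L`, the function names, and the synthetic data are all assumptions chosen for illustration.

```python
import numpy as np


def soft_threshold(v, tau):
    """Proximal operator of tau * ||.||_1 (componentwise shrinkage)."""
    return np.sign(v) * np.maximum(np.abs(v) - tau, 0.0)


def lasso_objective(A, b, lam, x):
    """F(x) = 0.5 * ||A x - b||^2 + lam * ||x||_1."""
    return 0.5 * np.sum((A @ x - b) ** 2) + lam * np.sum(np.abs(x))


def inertial_prox_grad(A, b, lam, alpha=0.3, iters=500):
    """Generic inertial forward-backward loop (assumed constant inertia alpha)."""
    L = np.linalg.norm(A, 2) ** 2  # Lipschitz constant of the smooth term's gradient
    step = 1.0 / L                 # assumed stepsize choice
    x_prev = np.zeros(A.shape[1])
    x = x_prev.copy()
    for _ in range(iters):
        y = x + alpha * (x - x_prev)   # inertial extrapolation
        grad = A.T @ (A @ y - b)       # forward (gradient) step evaluated at y
        # backward (proximal) step: soft-thresholding for the l1 term
        x_prev, x = x, soft_threshold(y - step * grad, step * lam)
    return x


# Hypothetical synthetic problem: sparse ground truth, noiseless measurements.
rng = np.random.default_rng(0)
A = rng.standard_normal((40, 100))
x_true = np.zeros(100)
x_true[:5] = [3.0, -2.0, 1.5, -1.0, 2.5]
b = A @ x_true

x_hat = inertial_prox_grad(A, b, lam=0.5)
print("nonzeros:", np.count_nonzero(x_hat))
print("objective:", lasso_objective(A, b, 0.5, x_hat))
```

Because soft-thresholding sets small entries exactly to zero, the support of the iterate can be inspected directly with `np.count_nonzero`, which is one informal way to observe the support settling after finitely many iterations.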

Notes

In fact, we expect the objective function values of I-FBS to converge to the minimum at rate \(o(1/k)\) if the parameters satisfy the conditions of Theorem 1. However, this analysis is beyond the scope of this paper.

Setting \(A=\partial g\) and \(B=\nabla f\) recovers Problem (1).

Among first-order methods.

\(F^*\) is approximated by the smallest objective function value among all tested algorithms after 1500 iterations.

Despite having an additional function evaluation per iteration, FISTA-CD-RE requires only one matrix multiplication per iteration, the same as FISTA-CD and FISTA. Since the matrix multiplication is the dominant cost, the per-iteration cost is essentially unchanged.

Cite this article

Johnstone, P.R., Moulin, P. Local and global convergence of a general inertial proximal splitting scheme for minimizing composite functions. Comput Optim Appl 67, 259–292 (2017). https://doi.org/10.1007/s10589-017-9896-7

DOI: https://doi.org/10.1007/s10589-017-9896-7