Abstract

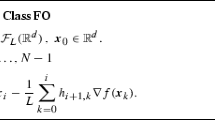

It has been conjectured that the conjugate gradient method for minimizing functions of several variables has a superlinear rate of convergence, but Crowder and Wolfe show by example that the conjecture is false. Now the stronger result is given that, if the objective function is a convex quadratic and if the initial search direction is an arbitrary downhill direction, then either termination occurs or the rate of convergence is only linear, the second possibility being more usual. Relations between the starting point and the initial search direction that are necessary and sufficient for termination in the quadratic case are studied.
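The setting described in the abstract can be explored numerically. The sketch below is only an illustration, not the paper's own construction. It assumes the Fletcher-Reeves formula for the conjugacy parameter, exact line searches, and no restarts. The function name `cg_quadratic` and the test matrix, right-hand side, and starting directions are hypothetical choices. The objective is the convex quadratic f(x) = ½ xᵀAx − bᵀx with A symmetric positive definite, and the initial search direction is supplied by the caller and need only be downhill.

```python
import math


def matvec(A, x):
    """Product of a dense matrix (list of rows) with a vector."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def cg_quadratic(A, b, x0, d0, iters, tol=1e-14):
    """Conjugate gradients on f(x) = 0.5 x^T A x - b^T x (assumed sketch).

    Uses exact line searches and the Fletcher-Reeves beta, with no restarts.
    d0 is the initial search direction and must be downhill (g0 . d0 < 0).
    Returns a list of (f(x_k), ||g_k||) pairs, starting with k = 0.
    """
    x = list(x0)
    Ax = matvec(A, x)
    g = [axi - bi for axi, bi in zip(Ax, b)]  # gradient A x - b
    d = list(d0)
    if dot(g, d) >= 0:
        raise ValueError("initial direction is not downhill")
    history = [(0.5 * dot(x, Ax) - dot(b, x), math.sqrt(dot(g, g)))]
    for _ in range(iters):
        gg = dot(g, g)
        if math.sqrt(gg) <= tol:  # treat as termination
            break
        Ad = matvec(A, d)
        alpha = -dot(g, d) / dot(d, Ad)  # exact minimizer along d
        x = [xi + alpha * di for xi, di in zip(x, d)]
        g_new = [gi + alpha * adi for gi, adi in zip(g, Ad)]
        beta = dot(g_new, g_new) / gg  # Fletcher-Reeves formula
        d = [-gn + beta * di for gn, di in zip(g_new, d)]
        g = g_new
        history.append((0.5 * dot(x, matvec(A, x)) - dot(b, x),
                        math.sqrt(dot(g, g))))
    return history


# Hypothetical 3x3 example: symmetric, strictly diagonally dominant, so positive definite.
A = [[4.0, 1.0, 0.0], [1.0, 3.0, 1.0], [0.0, 1.0, 2.0]]
b = [1.0, 2.0, 3.0]
x0 = [0.0, 0.0, 0.0]

# Steepest-descent start (d0 = -g0 = b here) versus an arbitrary downhill start.
for label, d0 in [("steepest descent", [1.0, 2.0, 3.0]),
                  ("arbitrary downhill", [1.0, 0.0, 0.0])]:
    for k, (fval, gnorm) in enumerate(cg_quadratic(A, b, x0, d0, 12)):
        print(f"{label:>18}  k={k:2d}  f={fval:+.12f}  |g|={gnorm:.3e}")
```

With the steepest-descent start, the classical theory gives termination in at most three iterations on this three-variable problem, up to rounding. With the other start, the abstract's result suggests that, unless the starting point and direction happen to satisfy the termination conditions, the printed gradient norms should shrink by a roughly constant factor over long runs rather than reach zero in a finite number of steps.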

References

H.P. Crowder and P. Wolfe, “Linear convergence of the conjugate gradient method”, IBM Journal of Research and Development 16 (1972) 431–433.

R. Fletcher and C.M. Reeves, “Function minimization by conjugate gradients”, The Computer Journal 7 (1964) 149–154.

L.V. Kantorovich and G.P. Akilov, Functional analysis in normed spaces (Pergamon, Oxford, 1964).

E. Polak, Computational methods in optimization: a unified approach (Academic Press, New York, 1971).

M.J.D. Powell, “Some convergence properties of the conjugate gradient method”, Rept. C.S.S. 23, A.E.R.E., Harwell (1975).

Cite this article

Powell, M.J.D. Some convergence properties of the conjugate gradient method. Mathematical Programming 11, 42–49 (1976). https://doi.org/10.1007/BF01580369

DOI: https://doi.org/10.1007/BF01580369